Conclusion

Tatsu, an instructor for Stanford’s CS336 (Language Modeling from Scratch), recently gave an unusually information-dense lecture: he took apart the mainstream LLMs of the past three years and compared their architectural choices one by one.

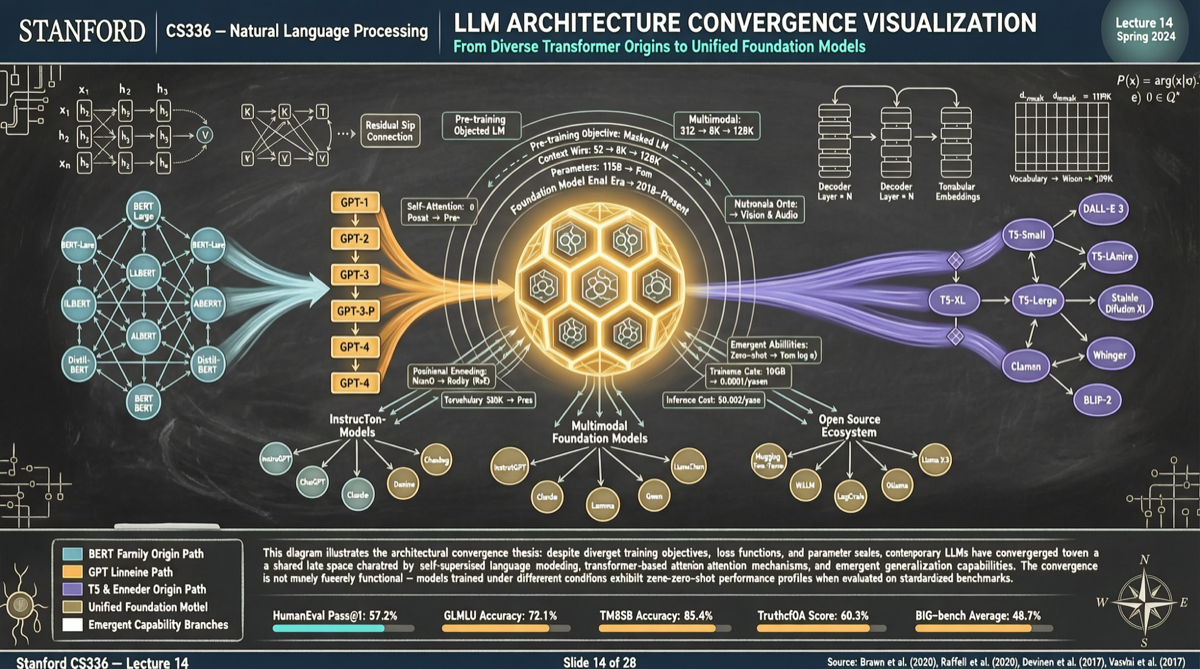

The headline finding: roughly 90% of architectural choices have already converged. Pick any open-source large model at random, whether Qwen, Llama, DeepSeek, or GLM, and you will find nearly the same design along the core dimensions covered below.

The instructor’s year-by-year summary:

- 2024: Everyone was cosplaying Llama 2

- 2025: The theme was “how to train without collapsing”

- 2026: ?

Where Architecture Convergence Shows Up

Tatsu’s course dissected the following core dimensions and found that nearly all mainstream models chose the same solutions:

1. Transformer Variants

Almost every model uses a decoder-only architecture. Encoder-decoder designs (the T5 family) have been marginalized in the general-purpose LLM space, and MoE (Mixture of Experts) has shifted from “optional” to the default configuration for the largest models.
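To make the MoE idea concrete, here is a minimal sketch of top-k expert routing in plain Python. The function names, the scalar "experts", and the choice of k=2 are illustrative assumptions, not the routing scheme of any specific model; real implementations operate on tensors and usually add load-balancing terms.

```python
import math

def top_k_route(gate_logits, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    # Softmax over all experts (subtract the max for numerical stability).
    m = max(gate_logits)
    exps = [math.exp(z - m) for z in gate_logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Keep only the k most probable experts.
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, gate_logits, experts, k=2):
    """Weighted sum of the selected experts' outputs; the others are never run."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))

# Toy usage: four hypothetical "experts" that just scale their input.
experts = [lambda v: v, lambda v: 2 * v, lambda v: 3 * v, lambda v: 4 * v]
routes = top_k_route([0.0, 2.0, 1.0, -1.0], k=2)
output = moe_forward(1.0, [0.0, 2.0, 1.0, -1.0], experts, k=2)
print(routes, output)
```

The point of the design is that only k experts run per token, so total parameter count can grow without a proportional increase in per-token compute.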

2. Attention Mechanism

The industry-wide migration from Multi-Head Attention to Grouped Query Attention (GQA) happened almost simultaneously. Because several query heads share each key/value head, GQA shrinks the KV cache, which cuts VRAM usage and speeds up inference. That made it an easy winner.
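A quick back-of-the-envelope calculation shows where the memory saving comes from. The configuration below (32 layers, 32 query heads, head dimension 128, 8 KV heads, fp16, 8192-token context) is a hypothetical example chosen for round numbers, not the published config of any particular model.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, batch=1, bytes_per_elem=2):
    """Size of the KV cache: one K and one V tensor per layer."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch * bytes_per_elem

def kv_head_for_query(q_head, n_q_heads, n_kv_heads):
    """Which shared KV head a given query head reads from under GQA."""
    group_size = n_q_heads // n_kv_heads
    return q_head // group_size

# Hypothetical config: MHA keeps one KV head per query head, GQA keeps 8.
mha = kv_cache_bytes(n_layers=32, n_kv_heads=32, head_dim=128, seq_len=8192)
gqa = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=8192)
print(mha / 2**30, gqa / 2**30)  # prints: 4.0 1.0 (GiB)
```

Under these assumptions the cache shrinks by the ratio of query heads to KV heads, here 4x, for every sequence being served.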

3. Normalization Layers

RMSNorm has replaced LayerNorm as the standard, and Pre-Norm placement (normalizing before each sublayer rather than after) is virtually unquestioned because of its demonstrated stability when training deep networks.
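For readers who have not seen them written out, here is a minimal sketch of RMSNorm and a Pre-Norm residual block in plain Python. The list-based vectors, the `eps` value, and the helper names are assumptions made for illustration; production code would use a tensor library.

```python
import math

def rms_norm(x, weight, eps=1e-6):
    """Scale x by the reciprocal of its root mean square, then by a learned gain."""
    mean_sq = sum(v * v for v in x) / len(x)
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)
    return [w * v * inv_rms for v, w in zip(x, weight)]

def pre_norm_block(x, sublayer, weight):
    """Pre-Norm: normalize the sublayer input, add its output to the raw residual."""
    y = sublayer(rms_norm(x, weight))
    return [a + b for a, b in zip(x, y)]

# Toy usage with a stand-in sublayer that doubles its input.
x = [3.0, 4.0]
normed = rms_norm(x, [1.0, 1.0])
block_out = pre_norm_block(x, lambda h: [2 * v for v in h], [1.0, 1.0])
print(normed, block_out)
```

Unlike LayerNorm, RMSNorm skips the mean subtraction and the bias term, and in the Pre-Norm layout the residual path itself is never normalized.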

4. Activation Functions

SwiGLU dominates as the feed-forward activation. Plain ReLU and GELU have essentially disappeared from new models.
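As a rough illustration, a SwiGLU feed-forward layer multiplies a SiLU-activated "gate" projection elementwise with a second "up" projection before projecting back down. The sketch below uses plain Python lists and tiny made-up weight matrices; the names `W_gate`, `W_up`, and `W_down` are assumptions for this example.

```python
import math

def silu(z):
    """SiLU (a.k.a. Swish): z * sigmoid(z)."""
    return z / (1.0 + math.exp(-z))

def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def swiglu_ffn(x, W_gate, W_up, W_down):
    """SwiGLU feed-forward: W_down @ (silu(W_gate @ x) * (W_up @ x))."""
    gate = [silu(g) for g in matvec(W_gate, x)]
    up = matvec(W_up, x)
    hidden = [g * u for g, u in zip(gate, up)]
    return matvec(W_down, hidden)

# Toy usage: model dim 2, hidden dim 3, hypothetical weights.
W_gate = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W_up = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W_down = [[1.0, 1.0, 1.0], [1.0, -1.0, 0.0]]
y = swiglu_ffn([1.0, 2.0], W_gate, W_up, W_down)
print(y)
```

Note that the gated design needs three weight matrices instead of the two in a classic ReLU or GELU feed-forward layer.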

5. Position Encoding

RoPE (Rotary Position Embedding) is the de facto standard for scenarios requiring long context windows. ALiBi still has a place in specific scenarios (such as streaming inference).
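The key property of RoPE is that, after rotation, the dot product between a query and a key depends only on their relative distance. The sketch below rotates adjacent pairs of dimensions (the interleaved pairing; some implementations pair the first half with the second half instead) and assumes the commonly used base of 10000.

```python
import math

def rope(vec, pos, base=10000.0):
    """Rotate each adjacent pair of dimensions by an angle proportional to pos."""
    d = len(vec)
    assert d % 2 == 0, "RoPE needs an even number of dimensions"
    out = []
    for i in range(0, d, 2):
        theta = pos * base ** (-i / d)  # lower dimensions rotate faster
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = vec[i], vec[i + 1]
        out += [x0 * c - x1 * s, x0 * s + x1 * c]
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

# Toy check: same relative offset (2) at different absolute positions.
q = [1.0, 2.0, 3.0, 4.0]
k = [0.5, -1.0, 2.0, 0.0]
score_near = dot(rope(q, 5), rope(k, 3))
score_far = dot(rope(q, 12), rope(k, 10))
print(score_near, score_far)  # the two scores agree up to floating-point error
```

Because each pair is only rotated, vector norms are preserved, and position information enters attention purely through the angle between query and key.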

Why Convergence Happened in 2024-2025

This is no coincidence. Three forces drove the architectural convergence:

Compute costs: Training a 70B+ model costs millions of dollars, leaving virtually no room for trial and error. Once Llama 2 validated a set of architectural choices across the 7B-70B range, successors had almost no incentive to discard them and start from scratch.

Open-source transparency: The open-sourcing of the Llama series made all architectural details transparent. Later model teams didn’t need to “rediscover” anything; they could reference the published designs directly.

Theoretical support: Research on Scaling Laws has matured significantly, giving the community a clearer understanding of “which designs work at scale.”

What Is 2026 About?

If architectures have converged, where has the competition shifted?

Data quality and training stability.

The instructor hinted that the core competitive dimensions of 2026 are shifting toward:

- Data ratio optimization: The optimal mixture ratios of code, math, multilingual, and instruction data

- Training process stability: How to avoid loss spikes and gradient explosions

- Post-training methods: The efficiency and quality of alignment methods like RLHF, DPO, and ORPO
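Of the post-training methods named above, DPO is the easiest to write down: it is a logistic loss on how much more the policy prefers the chosen response over the rejected one, relative to a frozen reference model. The sketch below works on scalar sequence log-probabilities for a single preference pair; the argument names and `beta=0.1` are illustrative assumptions.

```python
import math

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one preference pair: -log sigmoid(beta * margin difference)."""
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    # Numerically stable -log(sigmoid(logits)).
    if logits > 0:
        return math.log1p(math.exp(-logits))
    return -logits + math.log1p(math.exp(logits))

# Policy identical to the reference: loss equals log(2).
baseline = dpo_loss(-1.0, -2.0, -1.0, -2.0)
# Policy has shifted probability toward the chosen response: loss drops.
improved = dpo_loss(-1.0, -3.0, -1.5, -2.0)
print(baseline, improved)
```

No reward model or sampling loop appears here, which is a large part of why DPO-style methods are attractive on the efficiency axis.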

This also explains why Chinese models like Qwen and DeepSeek can still differentiate themselves significantly on performance despite architectural convergence: the gap comes from data strategy and training craftsmanship.

What This Means for Practitioners

If you’re doing any of the following, this information matters:

- Model selection: Don’t be fooled by marketing speak about “unique architectures.” The real differences lie in data and post-training

- Local deployment: Since architectures are converging, optimization experience from one model (such as quantization schemes and inference-framework tuning) largely transfers to others

- Research entry points: If the space for architectural innovation is shrinking, the next breakthrough is more likely to come from the data side or training methodology
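As one example of why deployment experience transfers, the simplest quantization scheme is architecture-agnostic: it only sees a list of weights. Below is a minimal sketch of symmetric per-tensor int8 quantization in plain Python; real toolchains typically use per-channel or per-group scales and more elaborate calibration, so treat this as an assumption-laden toy.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map the integers back to approximate float weights."""
    return [v * scale for v in q]

# Toy usage with made-up weights.
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_err)
```

The rounding error per weight is bounded by half the scale, and nothing in the procedure depends on which model the weights came from.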

Where Chinese Models Stand in This Convergence Landscape

A noteworthy detail: Chinese models (Qwen, DeepSeek, GLM) haven’t just kept up with the architecture convergence trend — they’ve also created differentiation on certain dimensions:

- Qwen’s sustained investment in multilingual capabilities and long context windows

- DeepSeek’s aggressive strategy in MoE architecture and inference cost optimization

- GLM’s advantages in Chinese language understanding and localized knowledge

Architectural convergence doesn’t mean capability convergence: data and training craftsmanship are the real dividing line.

In One Line

LLM architecture convergence isn’t the end of innovation — it’s a shift in competitive dimensions. The 2026 model war is about data, training craftsmanship, and alignment quality — and these are precisely the areas where Chinese models are investing heavily.