Conclusion

Alibaba’s Tongyi Qianwen team has officially open-sourced Qwen-Scope, a model internal representation analysis and control toolkit based on Sparse Autoencoders (SAE). The tool covers 7 models across the Qwen3 and Qwen3.5 families, with its core value being: you can directionally control the model’s output behavior by manipulating internal features, without fine-tuning the model.

This is not an ordinary open-source toy — it represents the first systematic engineering and adaptation of frontier mechanistic interpretability research (from Anthropic and other institutions) into the Chinese LLM ecosystem.

Core Capabilities Breakdown

| Capability Dimension | Specific Function | Practical Value |

|---|---|---|

| Feature Localization | Locating specific neurons/features inside the model | Understanding “why” the model produces a certain output |

| Output Control | Intervening in feature activation during inference | Adjusting model behavior tendencies without training |

| Classifier Construction | Training feature classifiers with few seed examples | Low-cost detection of specific concepts or intents |

| Sample Synthesis | Generating long-tail samples based on feature activation | Expanding training data for rare scenarios |

| Anomaly Detection | Locating features causing anomalous outputs | Rapid diagnosis of model “bad habits” |

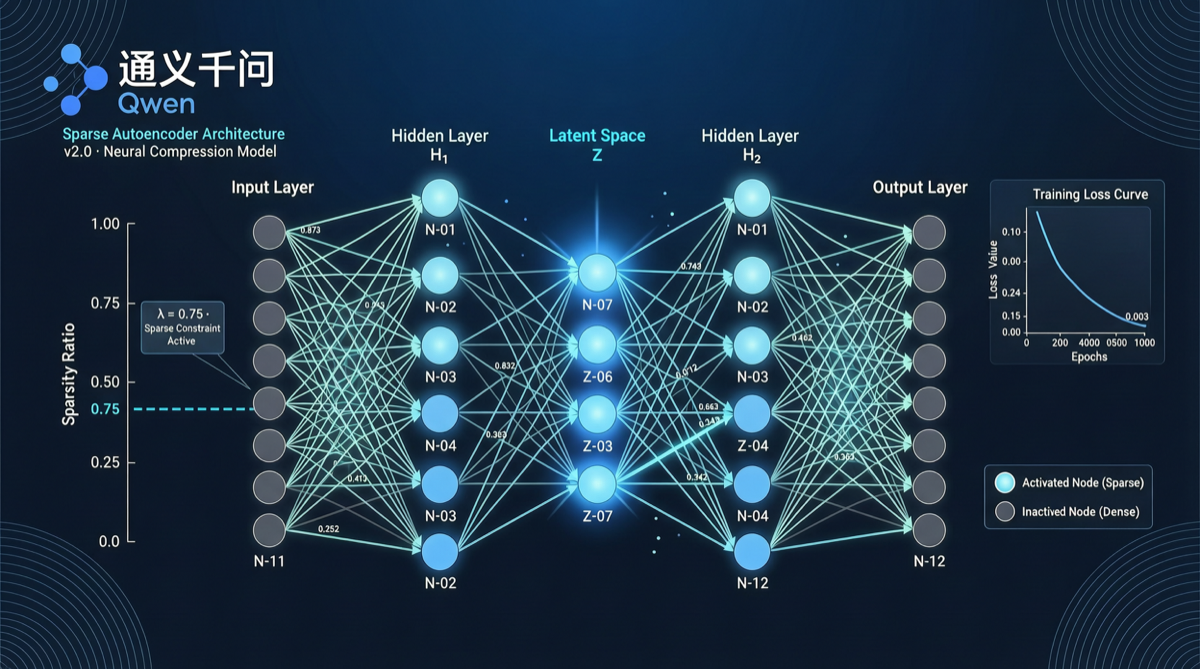

Technical Principle (Brief)

Qwen-Scope’s workflow has three steps:

- Train SAE: Train sparse autoencoders on model hidden layers (typically MLP or Attention outputs), decomposing high-dimensional dense activations into numerous sparse “features”

- Feature Annotation: Automatically or semi-automatically label semantic meanings for each feature (e.g., “Chinese language feature”, “code feature”, “safety refusal feature”)

- Feature Intervention: Enhance or suppress specific features during inference to achieve precise output control

The elegance of this approach: you don’t need to retrain the model — just “turn a few knobs” during inference.

Covered Models

Qwen-Scope supports the following 7 models:

- Qwen3-0.6B / 1.7B / 4B / 8B

- Qwen3.5-4B / 8B / 14B

Covering all mainstream specifications from small to medium, adapted for different deployment scenarios.

Practical Application Scenarios

Scenario 1: Eliminating Language Mixing

When the model unnaturally mixes English into Chinese responses, locate the “English feature” and moderately suppress it during inference — the output becomes purer Chinese.

Scenario 2: Reducing Repetitive Generation

When the model produces repetitive output, locate and suppress the features corresponding to repetition patterns, significantly improving generation quality.

Scenario 3: Safety Alignment Enhancement

Without redoing RLHF, simply increase the activation strength of “safety refusal features” to make the model more sensitive to harmful requests.

Scenario 4: Domain-Specific Knowledge Injection

Locate key features of the target domain and enhance their activation during inference — effectively giving the model “temporary tutoring.”

Landscape Assessment

The open-sourcing of Qwen-Scope releases several important signals:

- Interpretability tools moving from research to engineering: SAE is no longer just a paper concept — it’s a downloadable, installable, usable toolkit

- Chinese model interpretability ecosystem launched: Previously, SAE tools mainly targeted English models (Claude, GPT). Qwen-Scope fills this gap for Chinese LLMs

- Fine-tuning costs can drop significantly: Feature control as a fine-tuning alternative can save substantial compute and time in certain scenarios

Compared with Anthropic’s SAE research, Qwen-Scope’s unique advantage lies in optimization for Chinese language characteristics, including Chinese tokenization features, Chinese-English mixing detection — things English model tools cannot cover.

Action Recommendations

- Model developers: Use Qwen-Scope to diagnose specific model behavior issues — more efficient than blind parameter tuning

- Application teams: When facing model output quality problems, try feature control first — you may not need to re-fine-tune

- Researchers: Build new benchmarks for Chinese LLM interpretability based on Qwen-Scope

Getting Started

# Clone repository

git clone https://github.com/QwenLM/Qwen-Scope.git

cd Qwen-Scope

# Install dependencies

pip install -r requirements.txt

# Load pre-trained SAE (Qwen3-8B example)

from qwen_scope import SAELoader

sae = SAELoader.from_pretrained("Qwen3-8B-MLP-SAE")

# Feature intervention during inference

controlled_output = sae.generate(

prompt="Your question",

feature_modulations={"chinese_purity": 1.5, "english_mixed": -0.8}

)SAE weights are published on Hugging Face and ModelScope, supporting direct loading and use.

Data Sources

- GitHub: github.com/QwenLM/Qwen-Scope

- Qwen Official Announcement