Domestic Models + Agent Frameworks: A Key Step in Ecosystem Integration

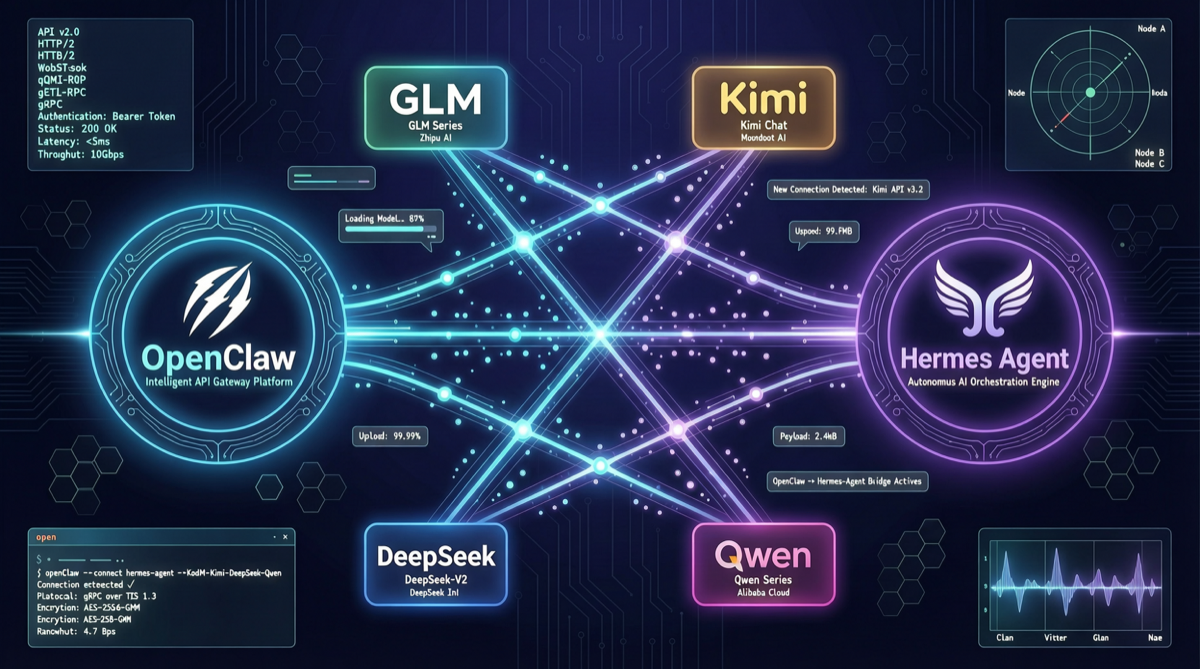

In the first half of 2026, AI Agent frameworks have reached a milestone in model compatibility: OpenClaw and Hermes Agent now fully support major domestic Chinese AI models.

This means developers are no longer locked into a single model vendor’s ecosystem. You can switch between GLM-5.1, Kimi K2.6, DeepSeek V4 Pro, Qwen 3.6 and other models within the same Agent framework, selecting based on task type and cost budget.

Community products already offer a “zero-config, one-click integration” experience: you enter your API key and everything works. This marks a transition from “can connect” to “works well” for domestic models in Agent framework ecosystems.

Supported Domestic Models Overview

| Model | Provider | Integration Method | Best Use Case |
|---|---|---|---|
| GLM-5.1 | Zhipu AI | API / OpenClaw built-in / Hermes MCP | Coding Agent, code review |
| Kimi K2.6 | Moonshot | API / Hermes built-in / OpenClaw | Long context, large codebases |
| DeepSeek V4 Pro | DeepSeek | API / OpenClaw built-in | Cost-effective coding, debug analysis |
| Qwen 3.6 Max | Alibaba | API / Hermes MCP | Agent coding, multi-file collaboration |
| MiniMax M2.7 | MiniMax | API | High-frequency Agent calls |
| MIMO V2.5 Pro | Xiaomi | API / Ollama | Code Agent, edge deployment |

OpenClaw Integration Configuration

OpenClaw model configuration is managed through .openclaw/config.yaml, supporting multi-model switching.

GLM-5.1 Integration

    models:
      glm-5.1:
        provider: zhipu
        apiKey: "${ZHIPU_API_KEY}"
        model: glm-5.1
        contextWindow: 131072
        maxTokens: 8192
        temperature: 0.3

GLM-5.1’s advantage in OpenClaw is code generation stability. In testing, GLM-5.1 maintains variable naming conventions across 20+ rounds of continuous coding dialogue, the strongest result among domestic models.

Kimi K2.6 Integration

    models:
      kimi-k26:
        provider: moonshot
        apiKey: "${MOONSHOT_API_KEY}"
        model: kimi-k2.6
        contextWindow: 256000
        maxTokens: 16384
        temperature: 0.2

Kimi K2.6’s 256K context window is its core advantage. In large codebase scenarios, such as refactoring tasks that require reading dozens of source files at once, Kimi significantly outperforms other domestic models.

DeepSeek V4 Pro Integration

    models:
      deepseek-v4-pro:
        provider: deepseek
        apiKey: "${DEEPSEEK_API_KEY}"
        model: deepseek-v4-pro
        contextWindow: 128000
        maxTokens: 8192
        temperature: 0.4

DeepSeek V4 Pro’s core strength is cost efficiency. Its low per-token price makes it a good fit for Agent workflows that require high-volume API calls.
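Each entry above references its key through a `${...}` placeholder and sets `maxTokens` alongside `contextWindow`. The Python sketch below shows one way such an entry could be checked before use. It is an illustration only: how OpenClaw itself resolves placeholders is not described here, and `resolve_placeholders` and `check_model_entry` are hypothetical helper names.

```python
import re

# Matches ${NAME} placeholders such as ${DEEPSEEK_API_KEY}.
PLACEHOLDER = re.compile(r"\$\{([A-Za-z0-9_]+)\}")


def resolve_placeholders(value: str, env: dict) -> str:
    """Replace each ${NAME} with env[NAME]; raise KeyError if it is missing or empty."""
    def substitute(match):
        name = match.group(1)
        if not env.get(name):
            raise KeyError(f"environment variable {name} is not set")
        return env[name]

    return PLACEHOLDER.sub(substitute, value)


def check_model_entry(name: str, entry: dict, env: dict) -> dict:
    """Return a copy of one model entry with apiKey resolved and token limits checked."""
    if entry["maxTokens"] > entry["contextWindow"]:
        raise ValueError(f"{name}: maxTokens exceeds contextWindow")
    resolved = dict(entry)
    resolved["apiKey"] = resolve_placeholders(entry["apiKey"], env)
    return resolved


# Demo with a fake environment; in real use, pass os.environ instead.
demo_entry = {
    "provider": "deepseek",
    "apiKey": "${DEEPSEEK_API_KEY}",
    "model": "deepseek-v4-pro",
    "contextWindow": 128000,
    "maxTokens": 8192,
}
checked = check_model_entry("deepseek-v4-pro", demo_entry, {"DEEPSEEK_API_KEY": "sk-demo"})
print(checked["model"], "ok")
```

Failing early on a missing key tends to be easier to debug than an authentication error in the middle of an Agent run.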

Hermes Agent Integration Configuration

Hermes Agent supports domestic models through both MCP (Model Context Protocol) and built-in model configuration.

MCP Integration

    {
      "mcpServers": {
        "zhipu-glm": {
          "command": "npx",
          "args": ["-y", "@zhipu/mcp-server"],
          "env": {
            "ZHIPU_API_KEY": "${ZHIPU_API_KEY}",
            "MODEL": "glm-5.1"
          }
        },
        "moonshot-kimi": {
          "command": "npx",
          "args": ["-y", "@moonshot/mcp-server"],
          "env": {
            "MOONSHOT_API_KEY": "${MOONSHOT_API_KEY}",
            "MODEL": "kimi-k2.6"
          }
        }
      }
    }

Built-in Model Configuration

    {
      "defaultModel": "kimi-k2.6",
      "models": {
        "kimi-k2.6": {
          "provider": "moonshot",
          "apiKey": "${MOONSHOT_API_KEY}",
          "capabilities": ["code", "long-context", "reasoning"]
        },
        "glm-5.1": {
          "provider": "zhipu",
          "apiKey": "${ZHIPU_API_KEY}",
          "capabilities": ["code", "tool-use"]
        },
        "deepseek-v4-pro": {
          "provider": "deepseek",
          "apiKey": "${DEEPSEEK_API_KEY}",
          "capabilities": ["code", "debug", "reasoning"]
        }
      }
    }

Cost Comparison: Domestic Models in Agent Scenarios

For Agent workflows requiring high-frequency calls, model selection directly impacts operational costs. The table below assumes 100 Agent calls per day, averaging 8,000 input and 4,000 output tokens per call (0.8M input and 0.4M output tokens per day), and a 30-day month:

| Model | Input ($/M tokens) | Output ($/M tokens) | Daily Cost | Monthly Cost |
|---|---|---|---|---|
| GLM-5.1 Coding Plan | Subscription | Subscription | n/a | ¥469 |
| Kimi K2.6 | ~$0.50 | ~$1.00 | ~$0.80 | ~$24.00 |
| DeepSeek V4 Pro | $0.60 | $1.20 | $0.96 | $28.80 |
| Qwen 3.6 Plus | ~$0.30 | ~$0.60 | ~$0.48 | ~$14.40 |
| MiniMax M2.7 | $0.30 | TBD | ≥ $0.24 (input only) | ≥ $7.20 (input only) |
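The daily and monthly columns are simple arithmetic over the per-million-token prices and the assumed call volume. Below is a minimal Python sketch for recomputing them, assuming a 30-day month. The `daily_cost_usd` helper is a hypothetical name, and the prices are the ones listed in the table.

```python
def daily_cost_usd(
    input_price_per_m: float,
    output_price_per_m: float,
    calls_per_day: int = 100,
    input_tokens: int = 8000,
    output_tokens: int = 4000,
) -> float:
    """Daily cost in USD given prices per million tokens and per-call token counts."""
    input_millions = calls_per_day * input_tokens / 1_000_000
    output_millions = calls_per_day * output_tokens / 1_000_000
    return input_millions * input_price_per_m + output_millions * output_price_per_m


# (input $/M, output $/M) as listed in the table; rows without both prices are omitted.
PRICES = {
    "Kimi K2.6": (0.50, 1.00),
    "DeepSeek V4 Pro": (0.60, 1.20),
    "Qwen 3.6 Plus": (0.30, 0.60),
}

DAYS_PER_MONTH = 30  # assumption used for the monthly column

for model, (input_price, output_price) in PRICES.items():
    daily = daily_cost_usd(input_price, output_price)
    print(f"{model}: ${daily:.2f}/day, ${daily * DAYS_PER_MONTH:.2f}/month")
```

Changing `calls_per_day` or the per-call token counts shows how quickly the ranking shifts for output-heavy workloads, since output tokens are priced at twice the input rate in every listed row.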

Key finding: running an Agent on a domestic model costs only 1/10 to 1/20 as much as running it on GPT-5.5, which makes Agent deployment economically viable for individual developers and small teams.

Practical Tips

1. Model Routing: Auto-Switch by Task Type
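Routing of this kind boils down to testing the task text against an ordered list of regular expressions and taking the first match. The Python sketch below illustrates that idea. It is not OpenClaw's implementation, and the `route` function and its `default` argument are hypothetical. The patterns are adapted from the rules configured in this section.

```python
import re

# (pattern, model) pairs in priority order; the first matching rule wins.
RULES = [
    (r"code generation|write function|implement", "glm-5.1"),
    (r"analyze.*files|refactor.*project|all code", "kimi-k2.6"),
    (r"debug|find.*bug|why error", "deepseek-v4-pro"),
    (r"translate|summarize", "qwen-3.6-plus"),
]
COMPILED_RULES = [(re.compile(pattern, re.IGNORECASE), model) for pattern, model in RULES]


def route(task: str, default: str = "qwen-3.6-plus") -> str:
    """Return the model of the first rule whose pattern occurs in the task text."""
    for pattern, model in COMPILED_RULES:
        if pattern.search(task):
            return model
    return default  # no rule matched; the default model is an assumption


print(route("Please implement a binary search"))
print(route("Refactor the whole project layout"))
```

Because the first match wins, rule order matters: "debug the implementation" would go to glm-5.1, since "implement" matches before the debug rule is tried.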

    routing:
      rules:
        - pattern: ".*(code generation|write function|implement).*"
          model: glm-5.1
          reason: "Best code generation stability"
        - pattern: ".*(analyze.*files|refactor.*project|all code).*"
          model: kimi-k2.6
          reason: "Long context advantage"
        - pattern: ".*(debug|find.*bug|why error).*"
          model: deepseek-v4-pro
          reason: "Complete reasoning chain"
        - pattern: ".*(translate|summarize).*"
          model: qwen-3.6-plus
          reason: "Best cost-performance"

2. Fallback Strategy
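A fallback chain tries models in priority order and moves on when one keeps failing. The Python sketch below shows one plausible reading of the configuration in this section, assuming `maxRetries` applies to each model separately. `call_with_fallback` and the `call_model` callback are hypothetical names, not part of OpenClaw or Hermes Agent.

```python
from typing import Callable, Sequence, Tuple


def call_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
    max_retries: int = 3,
) -> Tuple[str, str]:
    """Try each model in order, up to max_retries attempts each.

    Returns (model_name, reply) for the first successful call and raises
    RuntimeError once every model has exhausted its retries.
    """
    last_error = None
    for model in models:
        for _ in range(max_retries):
            try:
                return model, call_model(model, prompt)
            except Exception as error:  # real code should catch specific API errors
                last_error = error
    raise RuntimeError("all models in the fallback chain failed") from last_error


def flaky_client(model: str, prompt: str) -> str:
    """Stand-in for a real API client: the primary model always times out."""
    if model == "glm-5.1":
        raise TimeoutError("simulated timeout")
    return f"{model} answered: {prompt}"


chain = ["glm-5.1", "kimi-k2.6", "deepseek-v4-pro"]
print(call_with_fallback("ping", chain, flaky_client))
```

A production version would usually add a delay between retries and distinguish retryable errors (timeouts, rate limits) from permanent ones (invalid key).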

    fallback:
      primary: glm-5.1
      secondary: kimi-k2.6
      tertiary: deepseek-v4-pro
      maxRetries: 3

3. Context Management: Optimizing Domestic Model Context

While domestic models support large context windows, their effective attention range is limited:

- GLM-5.1: Effective context ~64K, recommend chunking for large codebases

- Kimi K2.6: Effective context ~128K, best among domestic models

- DeepSeek V4 Pro: Effective context ~64K, suitable for medium-scale tasks
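One way to act on these limits is to group source files into batches that fit the effective context rather than the advertised window. The Python sketch below does this greedily. The 4-characters-per-token estimate, the `reserve` for instructions and the reply, and the names `estimate_tokens` and `chunk_files` are all assumptions for illustration; a real setup would use the provider's tokenizer.

```python
# Effective context sizes from the list above, in tokens (approximate).
EFFECTIVE_CONTEXT = {
    "glm-5.1": 64_000,
    "kimi-k2.6": 128_000,
    "deepseek-v4-pro": 64_000,
}


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token (not a real tokenizer)."""
    return max(1, len(text) // 4)


def chunk_files(files: dict, model: str, reserve: int = 8_000) -> list:
    """Greedily group file paths so each group fits the model's effective context.

    `files` maps path -> file content. `reserve` tokens are held back for the
    prompt instructions and the model's reply. A single file larger than the
    budget still gets its own group and would need further splitting.
    """
    budget = EFFECTIVE_CONTEXT[model] - reserve
    chunks, current, used = [], [], 0
    for path, text in files.items():
        cost = estimate_tokens(text)
        if current and used + cost > budget:
            chunks.append(current)
            current, used = [], 0
        current.append(path)
        used += cost
    if current:
        chunks.append(current)
    return chunks


repo = {"a.py": "x" * 100_000, "b.py": "x" * 120_000, "c.py": "x" * 40_000}
print(chunk_files(repo, "glm-5.1"))
print(chunk_files(repo, "kimi-k2.6"))
```

With the same input, the model with the larger effective context needs fewer batches, which is the practical payoff of the long-context advantage described above.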

Model Selection Decision Tree

    What is your primary task?
    ├── Code generation / feature implementation
    │   ├── Need stable high-quality output? → GLM-5.1
    │   └── Budget-sensitive? → Qwen 3.6 Plus
    ├── Large codebase / multi-file refactoring
    │   └── → Kimi K2.6 (long context)
    ├── Debug / troubleshooting
    │   └── → DeepSeek V4 Pro (complete reasoning)
    ├── High-frequency Agent loops
    │   └── → MiniMax M2.7 (lowest cost)
    └── General tasks / daily assistance
        └── → Qwen 3.6 Plus (best value)

Summary

In H1 2026, the integration of domestic models in Agent frameworks has undergone a qualitative leap. From “API-only connection” to “zero-config one-click integration”, from “barely usable” to “reliably effective”, domestic models are playing an increasingly important role in the Agent ecosystem.

For developers, this means a key shift: you no longer need to rely on a single model vendor. Like choosing a database, you can flexibly combine domestic models based on task type, performance needs, and budget to build optimal Agent workflows.

Sources: