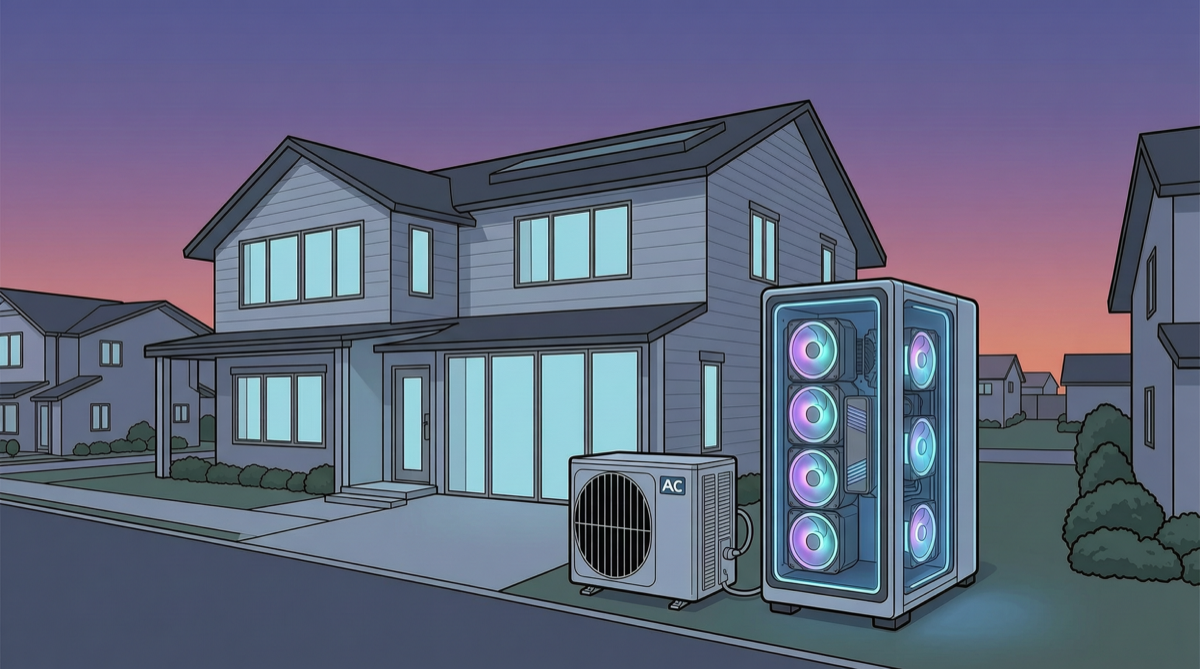

Nvidia has just announced a scheme that made everyone stop and stare: it installs an AI data center next to your home’s outdoor AC unit and pays you to host it.

This isn’t science fiction. It is Nvidia’s newly launched XFRA (eXtensible Fabric for Residential AI) node solution, and its emergence signals a shift in AI compute deployment from centralized data centers toward distributed edge networks.

XFRA Node Specifications

| Component | Specs |
|---|---|
| GPU | 16× Blackwell RTX Pro 6000 |
| CPU | 4× AMD EPYC |
| Memory | 3TB RAM |
| Form factor | Dell PowerEdge rack-mounted |
| Deployment location | Next to home AC condenser (outdoor cabinet) |
| Homeowner cost | $0 |
| Homeowner revenue | Revenue share |

A single XFRA node’s total compute is roughly comparable to that of a small data center. Depending on model size, the FP4 inference throughput of 16 Blackwell GPUs could support hundreds of concurrent LLM instances.
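
As a rough sanity check on the "hundreds of concurrent instances" figure, here is a minimal sketch that counts how many model replicas fit in GPU memory alone. It assumes 96 GB of VRAM per RTX Pro 6000 Blackwell and 0.5 bytes per parameter for FP4 weights. The per-replica overhead (KV cache, activations) is a hypothetical placeholder, and the function name `replicas_per_node` is illustrative only. Real serving stacks share weights across requests, so this is a floor-level estimate, not a throughput model.

```python
GPU_COUNT = 16              # from the spec table
VRAM_PER_GPU_GB = 96        # assumed: per-GPU memory of the RTX Pro 6000 Blackwell
BYTES_PER_PARAM_FP4 = 0.5   # 4 bits per weight


def replicas_per_node(params_billion: float, overhead_gb: float) -> int:
    """Estimate how many independent model replicas fit in one node's VRAM.

    params_billion: model size in billions of parameters.
    overhead_gb: hypothetical per-replica budget for KV cache and activations.
    Replicas are not split across GPUs in this simplified model.
    """
    weights_gb = params_billion * BYTES_PER_PARAM_FP4  # 1e9 params * 0.5 B = 0.5 GB
    per_replica_gb = weights_gb + overhead_gb
    per_gpu = int(VRAM_PER_GPU_GB // per_replica_gb)
    return per_gpu * GPU_COUNT


if __name__ == "__main__":
    print(replicas_per_node(8, 2))    # small model, small cache budget
    print(replicas_per_node(70, 8))   # large model, larger cache budget
```

Under these assumptions an 8B-parameter model fits 256 replicas per node, while a 70B-parameter model fits only 32, so the "hundreds" claim holds for small models rather than large ones.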

Why “Next to the AC Unit”?

This design wasn’t pulled out of thin air:

- Cooling: The AC condenser area already has cooling infrastructure, so heat from the GPUs can be carried away by the AC system

- Power: Residential power grids typically have ample surplus capacity, especially during daytime solar generation peaks

- Space: Outdoor cabinets don’t take up indoor space; noise is handled by cabinet soundproofing

- Network: Home broadband upstream bandwidth is sufficient for inference workloads—no dedicated lines needed
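
The network point above can be checked with simple arithmetic. The sketch below estimates the upstream bandwidth needed to stream generated tokens back to clients. Every input is a hypothetical placeholder: the number of concurrent streams, the tokens per second per stream, and the bytes per streamed token event (text plus framing overhead). It ignores prompt uploads and model-weight downloads, which flow in the downstream direction.

```python
def upstream_mbps(streams: int, tokens_per_s: float, bytes_per_event: int) -> float:
    """Upstream bandwidth in megabits per second for token streaming.

    streams: concurrent response streams served by the node (assumed).
    tokens_per_s: generation rate per stream (assumed).
    bytes_per_event: bytes sent per token, including protocol framing (assumed).
    """
    bits_per_second = streams * tokens_per_s * bytes_per_event * 8
    return bits_per_second / 1e6


if __name__ == "__main__":
    # 200 streams, 50 tokens/s each, 100 bytes per streamed token event
    print(upstream_mbps(200, 50, 100))
```

With these placeholder values the node needs about 8 Mbps of upstream bandwidth, which is why text inference is far less demanding on the uplink than training or bulk data transfer.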

Business Model

Nvidia’s logic: Rather than spending billions building data centers, distribute compute across thousands of home nodes. Nvidia bears the cost of each node, and homeowners get a share of the revenue.

Homeowner’s logic: Zero-cost “backyard money printer”—revenue comes from Nvidia renting compute to AI companies.

AI company’s logic: Access compute more cheaply than from traditional data centers, since land, construction, and large-scale O&M costs are eliminated.

Landscape Assessment

XFRA’s emergence marks the convergence of three trends in AI compute deployment:

| Trend | Manifestation | Impact |
|---|---|---|
| Decentralization | From data centers to distributed nodes | Lower deployment costs, increased resilience |
| Edge AI | Compute closer to users and data sources | Lower latency, privacy compliance |
| Compute crowdsourcing | Homes become compute providers | New revenue models, new regulatory challenges |

Comparing existing solutions:

| Solution | Deployment Model | Scale | Latency | Use Case |
|---|---|---|---|---|
| Hyperscale cloud (AWS/GCP) | Centralized data center | Massive | Medium | General AI |
| Neocloud (CoreWeave etc.) | Specialized data center | Large | Medium | AI training and inference |
| XFRA | Distributed home nodes | Micro-node | Low | Edge inference, localized AI |
| Local deployment (home PC) | Single machine | Tiny | Lowest | Personal use |

XFRA fills a gap: a “community-scale compute layer” between Neocloud and local deployment.

Potential Challenges

- Regulation: Deploying commercial-grade compute equipment in residential areas may involve zoning laws and noise ordinances

- Network stability: Home broadband upstream bandwidth and reliability aren’t on par with data center dedicated lines

- O&M: Who fixes hardware failures? How complex a fault can homeowners handle?

- Security: Physical security (equipment theft) and cybersecurity (node compromise) both need addressing

- Power load: 16 Blackwell GPUs draw significant power—can residential circuits handle it?
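
The power-load question above lends itself to a back-of-the-envelope estimate. The sketch below assumes about 600 W per GPU (the top-end board power listed for this card; lower-power variants exist). The 300 W per CPU and the 500 W for fans, drives, and power-supply losses are hypothetical placeholders. The service side assumes a 200 A, 240 V residential panel with the common 80% continuous-load rule of thumb. None of these figures come from Nvidia.

```python
def node_power_kw(gpus: int = 16, gpu_w: int = 600,
                  cpus: int = 4, cpu_w: int = 300,
                  other_w: int = 500) -> float:
    """Estimated peak node draw in kW (all wattages are assumptions)."""
    return (gpus * gpu_w + cpus * cpu_w + other_w) / 1000


def service_capacity_kw(amps: int = 200, volts: int = 240,
                        continuous_pct: int = 80) -> float:
    """Usable continuous capacity of a residential service in kW."""
    return amps * volts * continuous_pct / 100 / 1000


def load_fraction(node_kw: float, service_kw: float) -> float:
    """Share of the home's continuous capacity consumed by the node."""
    return node_kw / service_kw


if __name__ == "__main__":
    node = node_power_kw()
    for amps in (200, 100):
        service = service_capacity_kw(amps=amps)
        print(amps, "A service:", round(load_fraction(node, service), 2))
```

Under these assumptions the node peaks near 11.3 kW, which is about 29% of a 200 A service and about 59% of a 100 A service before any household load is counted. That suggests older homes with smaller panels would likely need an electrical upgrade or a separate feed.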

Action Recommendations

| Role | Recommendation |
|---|---|
| Homeowners (US) | Watch for Nvidia XFRA pilot programs. If one launches in your area, hosting a node could earn passive income at no upfront cost |
| AI startup founders | Evaluate XFRA node inference costs. They may be lower than Neocloud pricing and suit edge inference scenarios |
| Developers | Follow distributed inference framework developments. XFRA will need a software layer to manage cross-node load distribution |
| Investors | Watch for early opportunities in the “decentralized compute” space, which resembles DePIN but targets AI inference |
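
To make the "software layer for cross-node load distribution" concrete, here is a minimal sketch of a least-loaded router. It is not XFRA's actual scheduler, which has not been described. The `Node` and `LeastLoadedRouter` names, the capacity numbers, and the health flag are all hypothetical. The router sends each request to the healthy node with the lowest utilization and skips nodes that are full or offline, which matters when nodes sit on home broadband.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Node:
    name: str
    capacity: int         # max concurrent requests (hypothetical figure)
    active: int = 0       # requests currently in flight
    healthy: bool = True  # False when the node is unreachable


class LeastLoadedRouter:
    """Route each request to the healthy node with the lowest utilization."""

    def __init__(self, nodes: Iterable[Node]) -> None:
        self.nodes = {n.name: n for n in nodes}

    def acquire(self) -> Optional[str]:
        """Pick a node and reserve one slot on it; None if nothing is free."""
        candidates = [n for n in self.nodes.values()
                      if n.healthy and n.active < n.capacity]
        if not candidates:
            return None
        # Ties on utilization are broken by name for deterministic behavior.
        node = min(candidates, key=lambda n: (n.active / n.capacity, n.name))
        node.active += 1
        return node.name

    def release(self, name: str) -> None:
        """Free one slot when a request finishes."""
        node = self.nodes[name]
        node.active = max(0, node.active - 1)

    def mark_unhealthy(self, name: str) -> None:
        """Take a node out of rotation, e.g. after a failed health check."""
        self.nodes[name].healthy = False


if __name__ == "__main__":
    router = LeastLoadedRouter([Node("home-a", 2), Node("home-b", 4)])
    print([router.acquire() for _ in range(5)])
```

A production system would also need to account for network latency to each home, model placement, and retries for in-flight requests on a node that drops offline. This sketch covers only the placement decision.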

Nvidia XFRA may be the most radical AI infrastructure experiment of 2026. Whether it succeeds depends on solving O&M and regulatory challenges, but the direction itself—making compute ubiquitous—is already industry consensus.