Key Data

| Dimension | FlashKDA | FLA Baseline | Speedup / Notes |
|---|---|---|---|
| Forward Inference (H20) | Optimized CUTLASS kernel | flash-linear-attention | 1.72×–2.22× |
| Variable-Length Batching | Natively supported | Requires manual handling | ✅ |
| Backend Compatibility | Drop-in replacement | — | Plug-and-play |
| Underlying Framework | CUTLASS | Triton | NVIDIA official optimization stack |

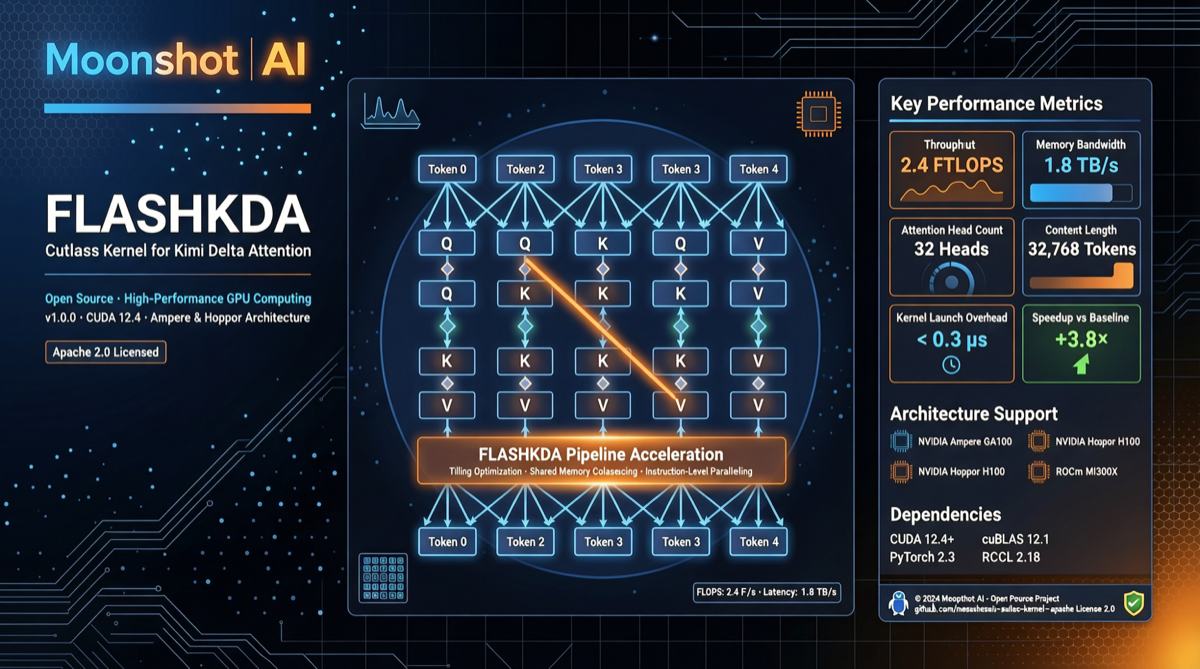

Technical Highlights

What is Delta Attention? The Delta Attention architecture used in the Kimi K2 series models differs from traditional Transformer self-attention. Instead of re-attending over the entire history for every new token, it maintains a fixed-size state that is updated incrementally, which makes it especially well-suited for long-context scenarios. Moonshot had previously released a Triton-based reference implementation, but it left room for performance optimization.
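
To make the idea of incremental computation concrete, here is a minimal pure-Python sketch of one gated delta-rule step. It is a simplified illustration, not FlashKDA's kernel: the function name `gated_delta_step`, the per-key-channel decay `alpha`, and the exact ordering of decay and update are assumptions chosen for readability.

```python
def gated_delta_step(S, q, k, v, beta, alpha):
    """One recurrent step of a simplified gated delta rule (illustrative only).

    S     : state matrix, d_v rows by d_k columns (list of lists)
    q, k  : query and key vectors of length d_k
    v     : value vector of length d_v
    beta  : scalar write strength in [0, 1]
    alpha : per-key-channel decay factors of length d_k (assumed form of the gate)
    """
    # 1) Decay the old state: column j is scaled by alpha[j].
    S = [[s * alpha[j] for j, s in enumerate(row)] for row in S]
    # 2) Read what the state currently predicts for key k.
    pred = [sum(s * k[j] for j, s in enumerate(row)) for row in S]
    # 3) Delta update: correct the state by the prediction error (v - pred).
    S = [[s + beta * (v[i] - pred[i]) * k[j] for j, s in enumerate(row)]
         for i, row in enumerate(S)]
    # 4) Produce the output for this token from the updated state.
    o = [sum(s * q[j] for j, s in enumerate(row)) for row in S]
    return S, o


# Usage: write two different values under the same unit-norm key.
S = [[0.0, 0.0], [0.0, 0.0]]
key = [1.0, 0.0]
no_decay = [1.0, 1.0]
S, o1 = gated_delta_step(S, key, key, [3.0, 4.0], 1.0, no_decay)
S, o2 = gated_delta_step(S, key, key, [5.0, 6.0], 1.0, no_decay)
print(o1, o2)  # the second write replaces the first: [3.0, 4.0] [5.0, 6.0]
```

The state `S` keeps the same size no matter how many tokens have been processed, so the per-token cost stays constant. That is the property that makes this family of architectures attractive for long contexts.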

Why CUTLASS? CUTLASS is NVIDIA’s official CUDA template library, and FlashAttention-3 is also built on it. Compared to Triton, CUTLASS enables finer-grained control over the GPU memory hierarchy and thread scheduling, an advantage that is particularly noticeable on the H20 and similar NVIDIA cards widely deployed in China.

The Value of Variable-Length Batching In real-world inference scenarios, sequence lengths vary dramatically across requests. FlashKDA natively supports variable-length batching, eliminating the padding waste inherent in traditional approaches and directly boosting throughput.
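
The padding waste described above can be sketched in a few lines of Python. The helper names `pack_varlen` and `padding_waste` are hypothetical, and the `cu_seqlens` offset array follows a convention common in variable-length attention kernels; whether FlashKDA exposes exactly this layout is an assumption.

```python
from itertools import accumulate


def pack_varlen(seqs):
    """Concatenate sequences into one flat list plus cumulative offsets.

    Sequence i occupies flat[cu_seqlens[i]:cu_seqlens[i + 1]].
    """
    flat = [tok for seq in seqs for tok in seq]
    cu_seqlens = [0, *accumulate(len(seq) for seq in seqs)]
    return flat, cu_seqlens


def padding_waste(lengths):
    """Fraction of slots that hold padding when padding to the longest sequence."""
    padded_slots = max(lengths) * len(lengths)
    return 1 - sum(lengths) / padded_slots


# Usage: three requests with lengths 3, 1, and 2.
requests = [[1, 2, 3], [4], [5, 6]]
flat, cu_seqlens = pack_varlen(requests)
print(flat)        # [1, 2, 3, 4, 5, 6]
print(cu_seqlens)  # [0, 3, 4, 6]
print(padding_waste([len(r) for r in requests]))  # one third of padded slots are wasted
```

With packing, the kernel processes exactly the six real tokens; with padding to length 3, it would process nine slots. The gap widens as the spread in request lengths grows.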

Comparison with Qwen FlashQLA

| | FlashKDA (Moonshot) | FlashQLA (Qwen) |
|---|---|---|
| Target Architecture | Delta Attention | GDN (Gated Delta Network) |
| Underlying Framework | CUTLASS | TileLang |
| H20 Speedup | 1.72×–2.22× | 2–3× |
| Open-Source Date | 2026-04-21 | 2026-04-29 |
| Applicable Models | Kimi K2 series | Qwen3-Next/3.5/3.6 |

Both projects represent independent explorations by Chinese teams in attention kernel optimization: Moonshot takes the CUTLASS route, while Qwen goes with TileLang. For teams looking to optimize inference on Chinese open models, the two projects offer distinct technical paths to consider.

Practical Significance

For Kimi users: If you’re deploying or fine-tuning Kimi K2 series models locally, FlashKDA can directly replace your existing attention backend without any model code changes.

For inference optimization developers: This is a high-quality CUTLASS attention kernel reference implementation, and its variable-length batching code structure is worth studying.

For compute procurement teams: Benchmarks on H20 demonstrate that software-level optimization can unlock more performance from existing hardware, so you don’t necessarily need to wait for the next generation of chips.

Getting Started

    git clone https://github.com/moonshot-ai/FlashKDA.git
    cd FlashKDA
    pip install -e .

After installation, it can be used as a drop-in replacement for the flash-linear-attention backend:

    from flash_linear_attention import set_backend
    from flashkda import KDACudaBackend

    set_backend(KDACudaBackend())

Landscape Assessment

Chinese large-model teams are moving from “model architecture innovation” into the deep waters of “low-level operator optimization.” The successive open-sourcing of FlashKDA and FlashQLA marks the beginning of competition between two technical routes. Whoever gains an advantage in inference cost and latency will have the upper hand in the edge/Agent market.