Bottom Line First

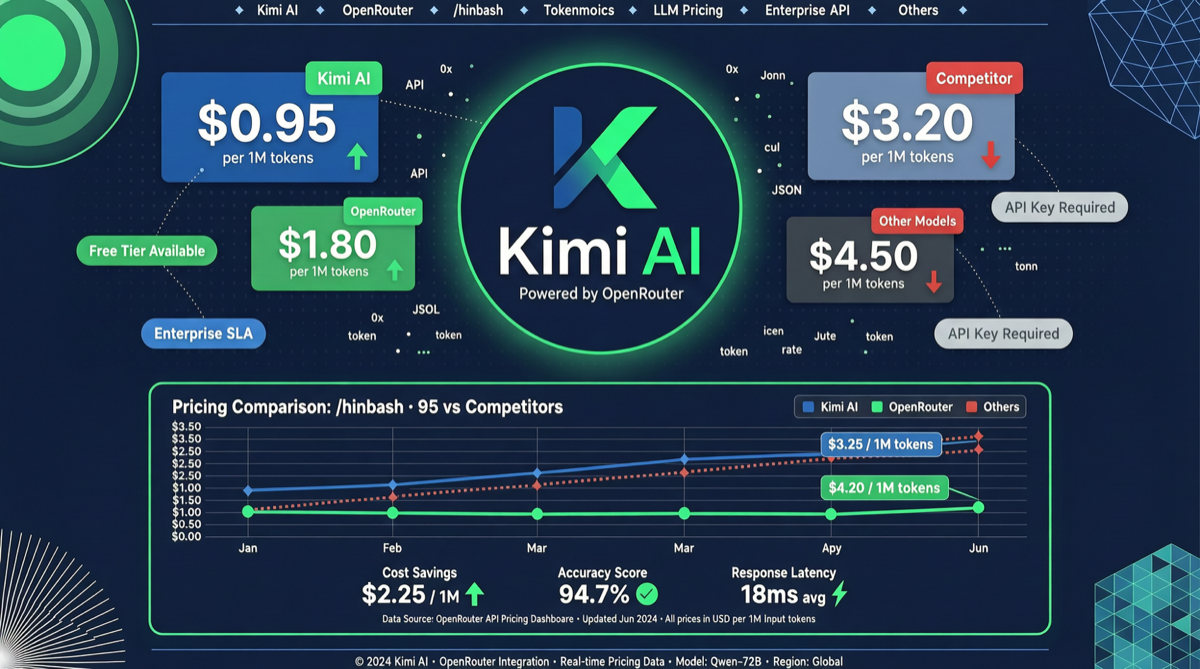

Kimi K2.6 is now live on OpenRouter, priced at $0.95/MTok for input and $4/MTok for output. Compared to Claude Opus 4.7’s $5/$25 pricing, Kimi K2.6 on OpenRouter costs just 19% of Opus for input and 16% for output.

This isn’t just about being “cheap.” It’s the first time a Chinese open-source model has appeared on an international model aggregation platform with such aggressive pricing, and it sends a clear signal to global developers: frontier-level reasoning at very low prices has arrived.

Data Comparison: OpenRouter Platform Price War

| Model | Input Price | Output Price | Context Window | Key Capability |
|---|---|---|---|---|
| Kimi K2.6 | $0.95/MTok | $4/MTok | 256K | SWE-Bench Pro leading |
| Claude Opus 4.7 | $5.00/MTok | $25/MTok | 200K | Long reasoning chains |
| GPT-5.5 | $2.50/MTok | $10/MTok | 128K | General capability |
| DeepSeek V4 Pro | $0.375/MTok* | $1.50/MTok* | 128K | 75% discount active |
| Gemini 3 Pro | $1.25/MTok | $5.00/MTok | 1M | Multimodal |

* DeepSeek V4 Pro discounted price valid until May 31

Kimi K2.6’s pricing on OpenRouter sits in the “sub-flagship” tier — more expensive than DeepSeek V4 Pro’s discounted price, but significantly below Claude Opus 4.7 and GPT-5.5.
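The percentages quoted against Opus can be reproduced from the table. The snippet below is a minimal sketch: the `PRICES` dictionary and the `relative_price` helper are illustrative names, and the figures are simply the list prices copied from the table above.

```python
# Prices in USD per million tokens (input, output), copied from the table above.
PRICES = {
    "Kimi K2.6": (0.95, 4.00),
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5.5": (2.50, 10.00),
    "DeepSeek V4 Pro": (0.375, 1.50),  # discounted price
    "Gemini 3 Pro": (1.25, 5.00),
}


def relative_price(model: str, baseline: str) -> tuple[float, float]:
    """Return the (input, output) price of `model` as a percentage of `baseline`."""
    m_in, m_out = PRICES[model]
    b_in, b_out = PRICES[baseline]
    return (100 * m_in / b_in, 100 * m_out / b_out)


# Print Kimi K2.6's price relative to every other model in the table.
for name in PRICES:
    if name != "Kimi K2.6":
        pct_in, pct_out = relative_price("Kimi K2.6", name)
        print(f"Kimi K2.6 vs {name}: {pct_in:.0f}% input, {pct_out:.0f}% output")
```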

Why OpenRouter Listing Is a Key Signal

OpenRouter is one of the world’s largest model aggregation platforms, integrating over 200 model APIs. Kimi K2.6’s arrival on OpenRouter means:

- Instant global developer access: No need to register a Moonshot AI account or handle Chinese payment methods — a single OpenRouter API key is all you need

- Integration into developer toolchains: OpenRouter’s cost-comparison tools will display Kimi K2.6 side-by-side with Claude and GPT, making the price gap immediately visible to developers choosing APIs

- International ecosystem integration: Kimi K2.6 on OpenRouter can be called by any agent framework supporting the OpenRouter API (including LangChain, LlamaIndex, OpenClaw, etc.)
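As a rough illustration of the "single API key" point, here is a sketch of a request against OpenRouter's OpenAI-compatible chat completions endpoint, using only the Python standard library. The model slug `moonshotai/kimi-k2.6` is an assumption, not confirmed by this article, so check the OpenRouter model page for the exact identifier. The helper names `build_chat_request` and `ask` are hypothetical.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "moonshotai/kimi-k2.6"  # assumed slug; verify on the OpenRouter model page


def build_chat_request(prompt: str, api_key: str, model: str = MODEL_SLUG) -> urllib.request.Request:
    """Build (but do not send) a chat completions request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str, api_key: str) -> str:
    """Send the request and return the first choice's message text."""
    req = build_chat_request(prompt, api_key)
    with urllib.request.urlopen(req, timeout=60) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

Calling `ask("Write a binary search in Go", api_key)` with a valid OpenRouter key would perform the network request. Agent frameworks that accept an OpenAI-compatible base URL can typically be pointed at the same endpoint.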

The Technical Confidence Behind Kimi K2.6

Kimi K2.6’s bold pricing on OpenRouter is backed by several technical pillars:

- 1 trillion parameters (32B active): MoE architecture keeps inference costs manageable

- SWE-Bench Pro surpassing Claude Opus 4.6: Coding capability validated by benchmarks

- Coordinating 300 sub-agents: Agent swarm capability is K2.6’s differentiator

- 256K context window: Not the 20M super-context version, but sufficient for most API use cases

Action Recommendations

Best use cases for Kimi K2.6:

- API calls requiring frontier-level coding capability (SWE-Bench Pro leading)

- Agent orchestration tasks (300 sub-agent coordination is a unique advantage)

- Budget-conscious scenarios that still have quality requirements (over 80% cheaper than Claude Opus 4.7 on both input and output)

Scenarios requiring caution:

- Ultra-long context needs (256K vs 20M): Use the official Kimi super-context version instead

- Latency-sensitive applications: OpenRouter routing may add latency

- Multimodal input needs: K2.6 on OpenRouter primarily exposes a text API

Cost estimate: A coding task with 10K input tokens and 5K output tokens costs approximately $0.03 ($0.0295 unrounded) with Kimi K2.6 versus $0.175 with Claude Opus 4.7, a difference of roughly 5.9x.
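The estimate can be recomputed for other token mixes with a few lines of arithmetic. This is a minimal sketch using the list prices quoted in this article; `task_cost` is an illustrative helper, and real bills may differ with caching, discounts, or provider routing.

```python
def task_cost(input_tokens: int, output_tokens: int,
              input_price: float, output_price: float) -> float:
    """Return the cost in USD; prices are in USD per million tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# 10K input tokens + 5K output tokens, as in the estimate above.
kimi = task_cost(10_000, 5_000, 0.95, 4.00)
opus = task_cost(10_000, 5_000, 5.00, 25.00)

print(f"Kimi K2.6:       ${kimi:.4f}")
print(f"Claude Opus 4.7: ${opus:.4f}")
print(f"Ratio:           {opus / kimi:.1f}x")
```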