Core Conclusion

As of May 2026, the performance gap between open-source AI models and closed-source APIs is closing fast. On the latest OpenRouter leaderboard, Kimi K2.6 leads the open-source camp in overall capability, with GLM 5.1 close behind and DeepSeek V4 Preview catching up. For developers the signal is clear: for batch processing, asynchronous inference, and other cost-sensitive workloads, open-source models can already replace most closed-source API calls.

Performance Benchmarking

OpenRouter Leaderboard Current State

| Model | Type | Overall Rank | Strength Areas | Weakness |
|---|---|---|---|---|
| GPT-5.5 | Closed | #1 | Instruction following, complex reasoning | High API price |
| Claude 4 Opus | Closed | #2 | Long context, code | High API price |
| Kimi K2.6 | Open Source | #3-4 | Chinese understanding, multi-turn dialogue | Inference speed |
| GLM 5.1 | Open Source | #4-5 | Tool calling, Agent | Inference speed |
| DeepSeek V4 Preview | Open Source | #5-6 | Math, code | Still training |
| Gemini 2.5 Pro | Closed | #2-3 | Multimodal | Average Chinese performance |

Key signal: multiple developers describe Kimi K2.6 and GLM 5.1 as “insanely close to closed AI in performance.”

Speed: The Only Systematic Weakness of Open-Source Models

| Model | Average First Token Latency | Throughput (tokens/s) | Suitable Scenarios |
|---|---|---|---|
| GPT-5.5 | ~500ms | 120-150 | Real-time interaction |
| Claude 4 | ~600ms | 100-130 | Real-time interaction |
| Kimi K2.6 (API) | ~800ms | 80-100 | Near real-time |
| GLM 5.1 (API) | ~900ms | 70-90 | Near real-time |
| Local deployment (A100) | ~300ms | 50-80 | Batch processing |

The speed gap is narrowing: the cloud API versions of Kimi and GLM have first-token latency in the 800-900ms range, while local deployment on an A100 can bring it down to about 300ms, though at lower throughput. For asynchronous tasks (batch processing, data labeling, content generation), speed is not a problem at all.
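First-token latency and throughput together determine how long a full response takes. The sketch below is a rough estimate only, assuming total time is first-token latency plus output tokens divided by throughput. The helper name `response_time_s`, the 500-token response length, and the use of range midpoints from the table are illustrative assumptions, not measurements.

```python
def response_time_s(first_token_ms: float, tokens_per_s: float, output_tokens: int) -> float:
    """Estimate wall-clock seconds: time to first token plus streaming time."""
    return first_token_ms / 1000 + output_tokens / tokens_per_s


# (first-token latency in ms, throughput in tokens/s), using midpoints of the
# ranges in the table above. Illustrative values, not measured here.
PROFILES = {
    "GPT-5.5": (500, 135),
    "Kimi K2.6 (API)": (800, 90),
    "Local deployment (A100)": (300, 65),
}

for name, (ttft_ms, tps) in PROFILES.items():
    seconds = response_time_s(ttft_ms, tps, output_tokens=500)
    print(f"{name}: about {seconds:.1f}s for a 500-token response")
```

Under these assumptions the local deployment returns its first token soonest but takes the longest to finish a long response, which is consistent with the table listing it for batch processing.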

Cost Comparison: The Real Driver

Based on processing 1 million tokens per month:

| Solution | Monthly Cost | Cost Per Million Tokens | Notes |
|---|---|---|---|
| GPT-5.5 API | $15-25 | $15-25 | Input + output mixed |
| Claude 4 API | $20-30 | $20-30 | Includes system prompt overhead |
| Kimi K2.6 API | $2-5 | $2-5 | Chinese API price advantage |
| GLM 5.1 API | $2-4 | $2-4 | Extremely cost-effective |
| Local deployment (electricity) | $0.5-1 | ~$0.5 | Hardware cost separate |

Going by the ranges in the table, closed-source API costs are roughly 3-15x those of the open-source APIs. When the performance gap narrows to within 10%, cost becomes the decisive factor.
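The cost multiple can be checked directly against the table. This minimal sketch copies the per-million-token price ranges from the table and compares each closed API with each open-source API. The dictionary `COST_PER_M` and the function `ratio_range` are names introduced here for illustration.

```python
# Cost per million tokens in USD as (low, high), copied from the table above.
COST_PER_M = {
    "GPT-5.5 API": (15, 25),
    "Claude 4 API": (20, 30),
    "Kimi K2.6 API": (2, 5),
    "GLM 5.1 API": (2, 4),
}


def ratio_range(closed: str, open_: str) -> tuple[float, float]:
    """Return the (smallest, largest) cost ratio of a closed API over an open one."""
    c_lo, c_hi = COST_PER_M[closed]
    o_lo, o_hi = COST_PER_M[open_]
    # Smallest ratio: cheapest closed price vs. most expensive open price.
    # Largest ratio: most expensive closed price vs. cheapest open price.
    return c_lo / o_hi, c_hi / o_lo


for closed in ("GPT-5.5 API", "Claude 4 API"):
    for open_ in ("Kimi K2.6 API", "GLM 5.1 API"):
        lo, hi = ratio_range(closed, open_)
        print(f"{closed} vs {open_}: {lo:.1f}x to {hi:.1f}x")
```

Depending on which ends of the ranges are compared, the pairwise ratios fall between about 3x and 15x. Local deployment is left out because its hardware cost is not given in the table.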

Which Scenarios Are Ready to Migrate?

| Scenario | Migration Feasibility | Recommended Solution | Notes |
|---|---|---|---|
| Batch data labeling | ✅ Fully feasible | Kimi K2.6 local deployment | Speed-insensitive |
| Content generation | ✅ Fully feasible | GLM 5.1 API | Good Chinese performance |
| Customer service dialogue | ⚠️ Partially feasible | Kimi K2.6 API | Latency needs evaluation |
| Real-time translation | ⚠️ Partially feasible | Specialized small models | General models have high latency |
| Code generation | ✅ Feasible | Kimi K2.6 + DeepSeek | Open-source performs well in code |
| Complex reasoning chains | ❌ Not recommended yet | GPT-5.5 / Claude 4 | Closed-source still has advantage |

Migration Strategy

Progressive Migration (Recommended)

Phase One: Migrate non-critical tasks

→ Data cleaning, batch summarization, content drafts

→ Use open-source models, keep closed-source for quality spot checks

Phase Two: Gray release for core tasks

→ Customer service, translation, code generation

→ A/B test open-source vs closed-source output quality

Phase Three: Fallback on demand

→ Keep closed-source API as fallback

→ Auto-switch when open-source model fails quality requirements

Hybrid Architecture Example
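Phases Two and Three can be combined into one small wrapper: send a fixed percentage of traffic to the open-source model, and fall back to the closed-source API when the call fails or the output does not pass a quality check. The following is a sketch under stated assumptions, not a production design. The names `in_rollout`, `generate_with_fallback`, and `passes_quality` are hypothetical, and the model clients are represented as plain functions from prompt to text.

```python
import hashlib
from typing import Callable

Generate = Callable[[str], str]  # any function mapping a prompt to a completion


def in_rollout(request_id: str, percent: int) -> bool:
    """Deterministically decide whether a request belongs to the open-source bucket."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < percent


def generate_with_fallback(
    prompt: str,
    request_id: str,
    open_model: Generate,
    closed_model: Generate,
    passes_quality: Callable[[str], bool],
    rollout_percent: int = 20,
) -> tuple[str, str]:
    """Return (answer, source), where source is 'open', 'closed', or 'fallback'."""
    if not in_rollout(request_id, rollout_percent):
        return closed_model(prompt), "closed"
    try:
        answer = open_model(prompt)
    except Exception:
        # Treat an outage or timeout the same way as a quality failure.
        return closed_model(prompt), "fallback"
    if passes_quality(answer):
        return answer, "open"
    return closed_model(prompt), "fallback"


# Usage with stub models: the open model returns an empty string, so the
# quality check fails and the closed model answers instead.
answer, source = generate_with_fallback(
    "Summarize this ticket",
    "req-001",
    open_model=lambda p: "",
    closed_model=lambda p: "closed answer",
    passes_quality=lambda text: len(text.strip()) > 0,
    rollout_percent=100,
)
print(answer, source)
```

Hashing the request ID keeps each request in the same bucket across retries, which makes A/B comparisons easier to interpret. Logging the returned `source` gives a running fallback rate to watch while raising `rollout_percent`.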

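    # kimi_client, glm_client and gpt_client are assumed to be API client
    # objects created elsewhere, each exposing generate(prompt).
    def smart_route(prompt, task_type):
        """Route a request to a model according to its task type."""
        if task_type in ("batch_label", "content_draft"):
            return kimi_client.generate(prompt)  # Low cost
        elif task_type in ("complex_reasoning", "safety_critical"):
            return gpt_client.generate(prompt)  # High quality
        else:
            return glm_client.generate(prompt)  # Balanced

Industry Landscape Judgment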

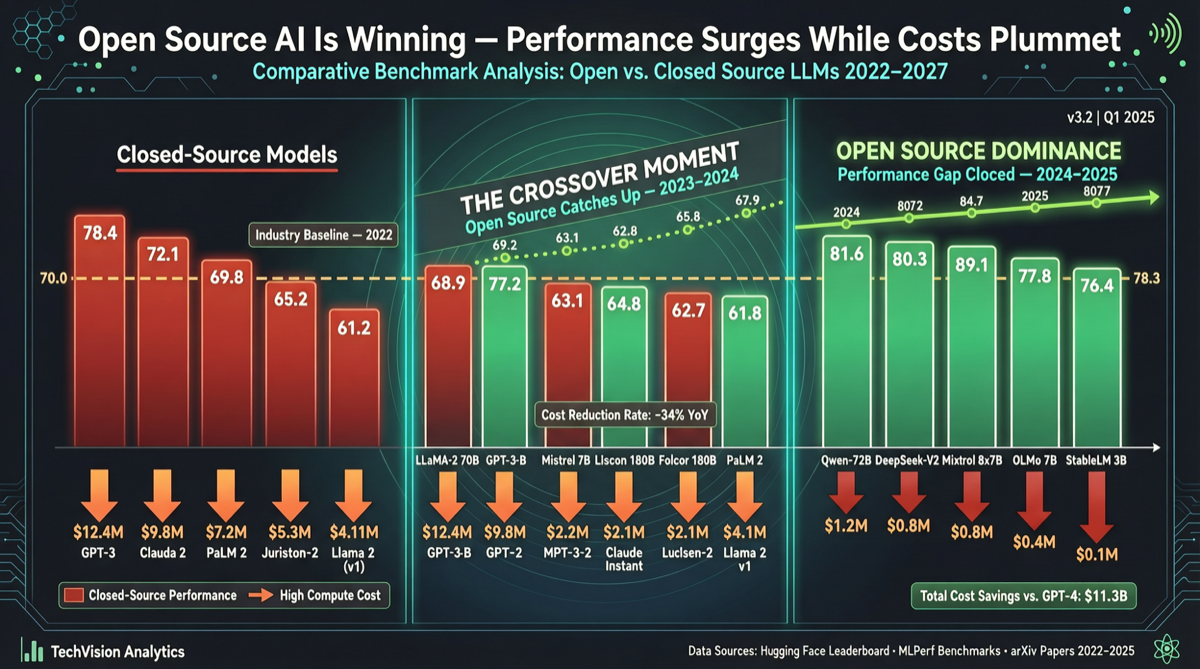

The AI industry is experiencing a replay of the “cloud computing era”:

- Early stage: Closed-source APIs were the only choice, expensive but with the best performance

- Now: Open-source models are catching up in performance while the price gap remains significant

- Future: Closed-source APIs retreat to the “highest-end scenarios” (real-time interaction, complex reasoning, multimodal), while open-source models dominate “large-batch scenarios”

This is not a zero-sum game: API providers will lower prices, open-source models will get faster, and users ultimately benefit.

Action Items

- Today: Review your API bill and identify the usage scenarios that account for 80% of costs

- This week: Replace 20% of non-critical calls with the Kimi K2.6 or GLM 5.1 API

- This month: If you have GPU resources, deploy a local inference service to further reduce costs

- Continuously: Follow the OpenRouter leaderboard and track changes in open-source model performance
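The first action item, finding the scenarios behind 80% of the bill, takes only a few lines once costs are grouped by scenario. This is a minimal sketch: the function `top_cost_scenarios` and the monthly figures in `bill` are hypothetical and stand in for your own billing export.

```python
def top_cost_scenarios(costs: dict[str, float], share: float = 0.8) -> list[str]:
    """Return the largest scenarios whose combined cost first reaches `share` of the total."""
    total = sum(costs.values())
    selected: list[str] = []
    running = 0.0
    # Walk scenarios from most to least expensive until the target share is covered.
    for name, cost in sorted(costs.items(), key=lambda kv: kv[1], reverse=True):
        if running >= share * total:
            break
        selected.append(name)
        running += cost
    return selected


# Hypothetical monthly bill in USD, grouped by usage scenario.
bill = {
    "batch_label": 420.0,
    "content_draft": 310.0,
    "customer_service": 150.0,
    "complex_reasoning": 90.0,
    "translation": 30.0,
}
print(top_cost_scenarios(bill))
```

The scenarios it returns are the first candidates to check against the migration feasibility table.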

When the performance gap of open-source models shrinks to “imperceptible” while the cost gap remains “visible to the naked eye,” migration is no longer a technical question but a business decision.