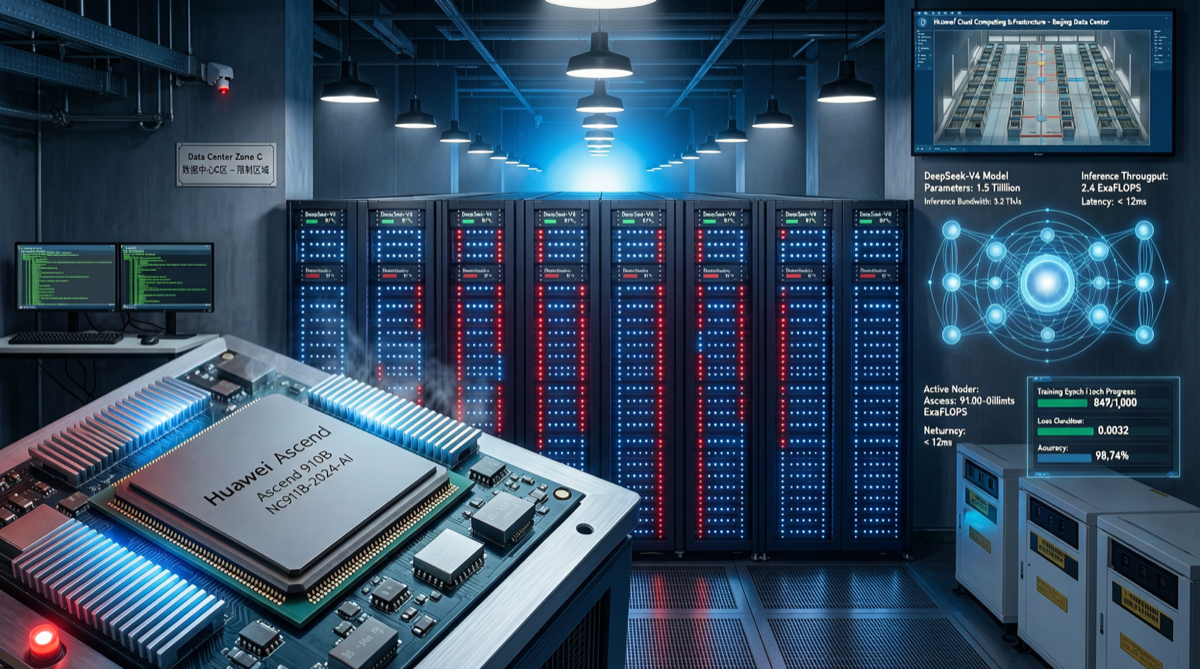

When DeepSeek V4 officially launched on April 24, 2026, the tech world focused on its trillion-parameter MoE architecture and pricing at 1/166th of GPT-5.5. But an overlooked detail is the real signal: DeepSeek is systematically migrating its training and inference infrastructure to Huawei Ascend chips.

The Chip Truth Behind the Delayed Release

DeepSeek V4 arrived weeks later than the market expected. A CCTV-affiliated social media account revealed that the delay stemmed not from technical issues with the model itself but from a massive transition in DeepSeek’s underlying compute architecture.

| Phase | Compute Source | Strategic Intent |
|---|---|---|
| DeepSeek V3 Era | NVIDIA A100/H100 (existing stock) | Utilize pre-export-control inventory |
| DeepSeek V4 Training | Ascend 910B + NVIDIA hybrid | Transition period, validate domestic compute feasibility |
| DeepSeek V4 Inference | Ascend chips as primary | First complete domestic substitution on inference side |
| DeepSeek V5 Planning | Ascend + full domestic chip stack | Full autonomy on training side |

This is not a PR narrative. DeepSeek’s choice is a blend of necessity and initiative: U.S. export controls make the supply of high-end NVIDIA chips unreliable, while successive iterations of the Ascend 910B have only now reached a viable performance threshold.

Ascend 910B: From “Usable” to “Good”

The key progress of the Ascend 910B is not single-card performance, which does not surpass the H100, but ecosystem maturity.

Software Stack Breakthroughs:

- CANN 8.0’s compatibility layer for PyTorch has significantly improved, reducing migration costs from “rewrite most code” to “modify some operators”

- MindSpore framework’s stability in large-scale distributed training has been validated through the V4 training cycle

- Ascend and DeepSeek’s MoE architecture have a special optimization path: the sparse activation pattern precisely matches Ascend’s memory bandwidth characteristics

The Cost Equation:

- Ascend cluster per-token training cost is approximately 60-70% of NVIDIA solutions

- Once supply chain security and exchange rate risk are factored in, the long-term total cost of ownership (TCO) gap is even larger

- DeepSeek’s extreme efficiency engineering (37 billion activated parameters / 1 trillion total parameters) effectively mitigates Ascend’s memory bottleneck
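The figures in the list above can be turned into quick arithmetic. The sketch below is only illustrative: the parameter counts and the 60-70% cost ratio are the ones quoted in this article, while the function names and the 100-unit NVIDIA baseline are hypothetical placeholders, not published numbers.

```python
# Figures quoted in the article; the baseline below is an arbitrary assumption.
ACTIVE_PARAMS = 37e9               # activated parameters per token
TOTAL_PARAMS = 1e12                # total parameters
ASCEND_COST_RATIO = (0.60, 0.70)   # Ascend per-token training cost vs. NVIDIA


def activation_fraction(active: float, total: float) -> float:
    """Share of the model's parameters that are active for a single token."""
    return active / total


def relative_cost_range(baseline_cost: float, ratio_range: tuple) -> tuple:
    """Scale a baseline cost by the low and high ends of a cost ratio."""
    low_ratio, high_ratio = ratio_range
    return baseline_cost * low_ratio, baseline_cost * high_ratio


fraction = activation_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
# Hypothetical baseline: 100 cost units for the NVIDIA solution.
low, high = relative_cost_range(100.0, ASCEND_COST_RATIO)

print(f"Activated share of parameters: {fraction:.1%}")
print(f"Ascend cost for a 100-unit NVIDIA baseline: {low:.0f} to {high:.0f} units")
```

With roughly 3.7% of parameters active per token, each token's computation uses only a small slice of the weights, which is the property the last bullet credits with easing Ascend's memory bottleneck.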

AI Supply Chain Restructuring Under Geopolitics

In April 2026, the U.S. State Department issued a diplomatic cable accusing DeepSeek, Moonshot AI (Kimi), and MiniMax of “distilling” capabilities from U.S. models. Combined with chip export controls, the cable is accelerating the de-Americanization of Chinese AI companies.

Three Converging Forces:

- Export Controls: NVIDIA’s China-specific chips (H20) have limited performance and declining cost-effectiveness

- Domestic Substitution: Ascend 910B iteration + Hygon DCU + Cambricon, multiple technology routes running in parallel

- Model Efficiency Revolution: MoE, quantization, and distillation technologies reduce dependence on absolute compute power

DeepSeek’s choice has a demonstration effect. If China’s number-one open-source model company can train world-class models on Ascend, other companies’ willingness to migrate will increase substantially.

Actionable Advice for Readers

For Developers:

- If your services target the Chinese market, you should start testing model deployment on the Ascend platform now

- DeepSeek V4’s Ascend-optimized version is expected in Q2 2026—early adaptation can avoid later migration costs

- Watch Huawei Ascend community toolchain updates—CANN’s iteration speed is accelerating
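As a first step toward the testing suggested above, a pre-flight check can report whether a machine is set up for Ascend at all. This is a minimal sketch under stated assumptions: it assumes the Ascend adapter for PyTorch is importable under the module name `torch_npu` in your environment, and the helper names are hypothetical. It only probes for installed modules; it does not load a model or touch any device.

```python
import importlib.util

# Module names to probe, in order of preference. `torch_npu` is assumed to be
# the import name of the Ascend adapter for PyTorch in this environment.
PREFERRED_MODULES = ("torch_npu", "torch")


def installed_modules(names=PREFERRED_MODULES):
    """Return the subset of `names` that can be located, preserving order."""
    return [name for name in names if importlib.util.find_spec(name) is not None]


def describe_environment(names=PREFERRED_MODULES):
    """Summarize which deployment tests this machine can run."""
    found = installed_modules(names)
    if "torch_npu" in found:
        return "Ascend adapter found: run the deployment tests on NPU."
    if "torch" in found:
        return "PyTorch found without the Ascend adapter: CPU/GPU tests only."
    return "No PyTorch installation found."


print(describe_environment())
```

Running this in CI on both existing GPU machines and any Ascend test machines gives an early signal of which environments still lack the adapter before any model-specific migration work starts.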

For Enterprise Decision-Makers:

- Compute supply chain diversification is no longer “optional” but “mandatory”

- When evaluating model providers, include “chip supply chain security” as a consideration dimension

- The DeepSeek V4 Pro discount has been extended through May 31, so now is the window to lock in low-cost API access

For Investors:

- Beneficiaries of the Ascend ecosystem extend beyond Huawei to include server manufacturers, software adaptation layer companies, and compute operators

- The investment logic for Chinese AI chip self-reliance is transitioning from “concept” to “earnings validation”

Landscape Assessment

DeepSeek V4’s Ascend pivot is not an isolated event; it is a microcosm of a broader trend. In the second half of 2026, we expect to see more Chinese AI companies publicly announce their domestic chip adaptation plans. This is not just a technical choice, but a strategic decision about supply chain security.

The real competition is not at the model level, but at the compute infrastructure level. Whoever can access compute power at lower cost and with greater reliability will have the upper hand in the next round of model iterations. DeepSeek has answered with action: don’t wait for others to give you chips—build your own road.