Key Takeaway

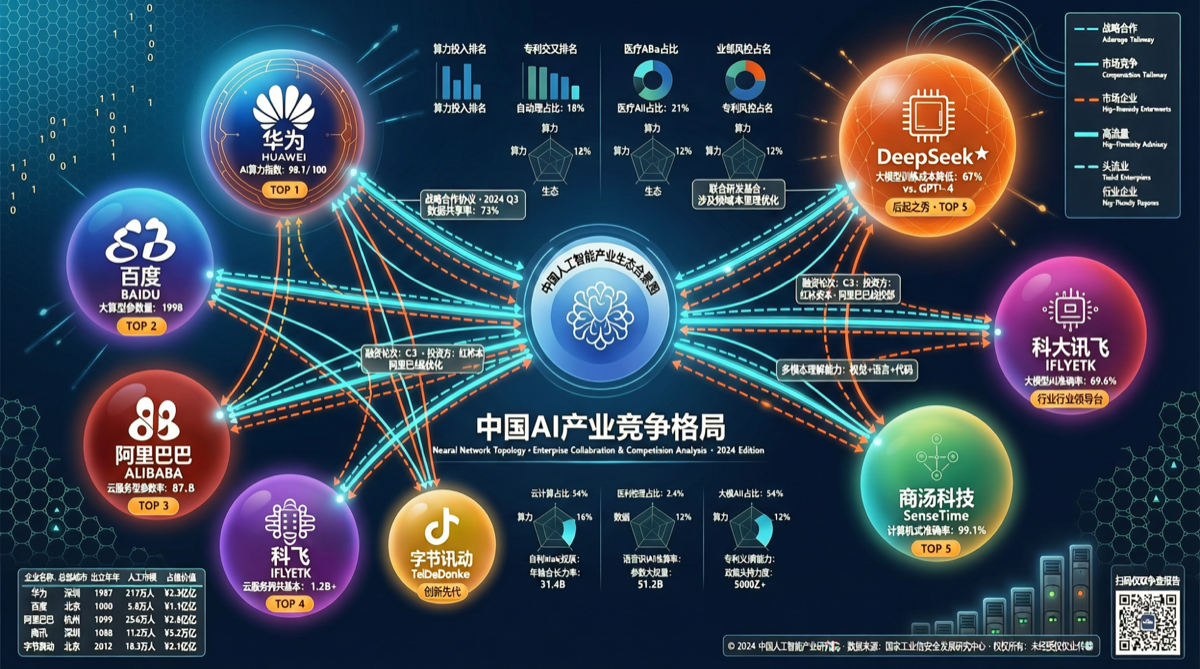

As of late April 2026, China’s AI model market has settled into a nine-way competitive landscape, with each company pursuing a different path to a breakthrough:

| Company | Flagship Model | Strategy | Differentiation |
|---|---|---|---|
| Alibaba | Qwen 3.6 Series | Open source ecosystem | MoE + Dense dual track |
| DeepSeek | V4 Series | Structural innovation | Native Chinese-chip training + low cost |
| Baidu | ERNIE 5.1 | Inference cost-efficiency | MoE slimming + Arena ranking gains |
| Zhipu | GLM 5.1 | Full-stack in-house development | Coding + reasoning excellence |
| Moonshot | Kimi K2.6 | Open source + long context | Design Arena champion |
| Xiaomi | MiMo-V2.5 | Hardware + AI synergy | MIT license + 100T free tokens |
| MiniMax | M2.7 | Self-evolution | Self-evolving architecture |
| SenseTime | SenseNova U1 | Multimodal unification | NEO-Unify architecture |
| Tencent | Hunyuan 3 | Ecosystem integration | Deep WeChat/Tencent Cloud integration |

Three structural changes deserve attention.

Change 1: Open Source Becomes the Main Battleground

In April, open source was where China’s AI model community was most active. The Qwen 3.6 series dominated discussion: the MoE and Dense models released in late February were followed by Qwen3.6-35B-A3B on April 15 and by Qwen3.6-27B at month-end, which set the open source community alight. The draw is a small active footprint, with Qwen3.6-35B-A3B activating only 3B parameters while delivering 35B-level performance.
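The gap between total and active parameters is what makes these models cheap to run. As a rough sketch, assuming the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token (ignoring attention and routing overhead), the arithmetic for a 35B-total, 3B-active model looks like this. The dense 35B baseline is a hypothetical comparison point, not a released model.

```python
# Rough per-token inference cost: dense model vs. mixture-of-experts (MoE) model.
# Assumption: forward-pass compute ~= 2 FLOPs per *active* parameter per token,
# a rule of thumb that ignores attention and routing overhead.

def flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs for one token."""
    return 2.0 * active_params

def active_ratio(total_params: float, active_params: float) -> float:
    """Fraction of the model's weights that participate in each token."""
    return active_params / total_params

# Sizes read off the model name Qwen3.6-35B-A3B: ~35B total, ~3B active.
moe_total, moe_active = 35e9, 3e9
dense_params = 35e9  # hypothetical dense model of the same total size

moe_cost = flops_per_token(moe_active)
dense_cost = flops_per_token(dense_params)

print(f"active ratio: {active_ratio(moe_total, moe_active):.1%}")  # 8.6%
print(f"MoE   ~{moe_cost / 1e9:.0f} GFLOPs/token")                  # ~6
print(f"dense ~{dense_cost / 1e9:.0f} GFLOPs/token")                # ~70
print(f"compute reduction: ~{dense_cost / moe_cost:.1f}x")          # ~11.7x
```

The savings are in compute per token, not in memory: all ~35B weights still have to be loaded, so an MoE model of this shape needs roughly the memory of a dense 35B model.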

Meanwhile, Xiaomi open-sourced MiMo-V2.5 under the MIT license and is offering developers 100T free tokens. DeepSeek V4 completed large-scale training on domestic chips, proving that “structural optimization reduces training costs.”

Open source has evolved from a “marketing tool” into an “ecosystem strategy”: whoever attracts the most developers gets the most feedback and data for iterating on the next generation of models.

Change 2: Compute Gap Remains Real

Although DeepSeek has shown that large models can be trained on domestic chips, the industry consensus remains cautious:

“[DeepSeek]’s structural optimization reduced training costs and trained large models on domestic chips; its importance is undeniable. But that doesn’t mean the compute gap will close quickly.”

Compute remains the core bottleneck for Chinese AI companies, and each is responding with a different strategy:

| Company | Compute Strategy |
|---|---|
| DeepSeek | MoE architecture + sparse attention, reducing training compute |
| Qwen | Small activation parameter models (3B/5B/8B), improving inference cost-efficiency |
| Baidu | MoE slimming, parameters compressed to 1/3 of previous generation |
| Xiaomi | Cloud+device synergy, offloading some inference to phone chips |
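Several strategies in the table rest on the same MoE idea: a router scores every expert for each token, but only the top k experts actually run, so per-token compute scales with k rather than with the total number of experts. The pure-Python sketch below shows one common form of top-k gating (softmax over the selected experts’ logits). The eight scalar “experts,” the router logits, and k = 2 are hypothetical illustrations, not any listed company’s actual design.

```python
import math
from typing import Callable, List, Tuple

def softmax(xs: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x: float, experts: List[Callable[[float], float]],
                router_logits: List[float], k: int = 2) -> float:
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))

# Hypothetical setup: 8 toy experts that record when they are called.
calls: List[int] = []

def make_expert(i: int) -> Callable[[float], float]:
    def expert(x: float) -> float:
        calls.append(i)
        return (i + 1) * x
    return expert

experts = [make_expert(i) for i in range(8)]
logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.2]  # made-up router scores for one token
y = moe_forward(1.0, experts, logits, k=2)
print(sorted(calls))  # → [1, 4]: only 2 of 8 experts did any work
print(round(y, 3))
```

With k = 2 of 8 equal-sized experts, each token touches a quarter of the expert parameters (attention and other shared layers still run for every token), which is the same trade-off behind the small-activation models in the table.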

Change 3: Talent Competition Accelerates

The shake-up triggered by the departure of Qwen’s core technical lead continues to ripple outward, and other companies are seeing similar competition for talent:

- Model researchers flowing from top companies to startups

- Active open source contributors becoming recruitment targets

- Overseas Chinese AI talent returning at accelerated pace

International Comparison

| Dimension | China | US |
|---|---|---|
| Major Companies | 9+ | 5 (OpenAI, Anthropic, Google, Meta, xAI) |
| Open Source Ratio | High (7 of 9 flagships open) | Medium (Meta leads) |
| Model Iteration Speed | 2-3 months/generation | 1-2 months/generation |
| Compute Autonomy | Medium (domestic chips replacing imports) | High (NVIDIA + custom chips) |
| Commercialization Maturity | Medium | High |

Actionable Advice

For developers:

- Best current open source options: Qwen 3.6 series (most mature ecosystem) and MiMo-V2.5 (most permissive license)

- Agent development: DeepSeek V4 deployed locally on domestic chips offers the highest cost-efficiency

For enterprise users:

- API selection: Qwen3.6-Plus (excellent coding-agent benchmark results), Kimi K2.6 (long-context scenarios)

- Local deployment: MiMo-V2.5 (MIT license, no commercial restrictions), Qwen3.6-27B (strongest community support)

For investors:

- Watch companies with compute autonomy (DeepSeek, Baidu)

- Watch the most active open source ecosystems (Qwen, Xiaomi)