What Happened

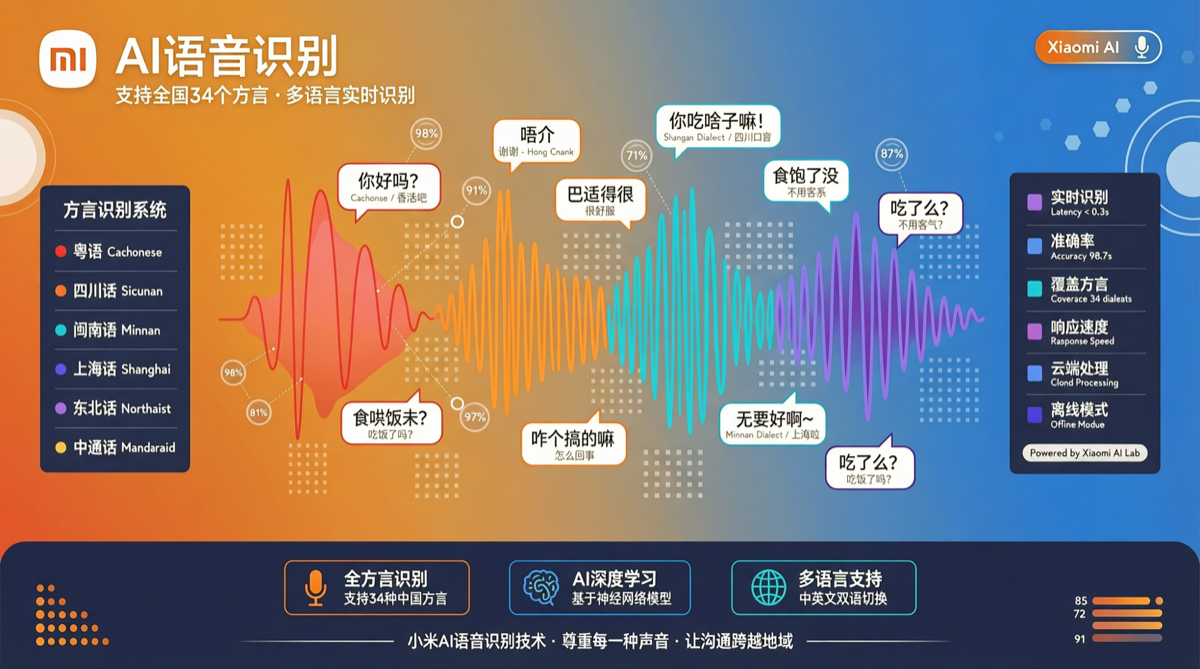

Xiaomi open-sourced MiMo-V2.5-ASR on April 30. The model focuses on automatic speech recognition (ASR). Unlike earlier releases in the MiMo-V2.5 series, it targets a single capability: high-quality speech-to-text with native support for several Chinese dialects.

| Capability | Description |
|---|---|
| Mandarin | Standard Chinese speech-to-text |
| English | Standard English speech-to-text |
| Wu | Shanghai, Suzhou dialects |
| Cantonese | Guangdong dialect |
| Minnan | Fujian, Taiwan Minnan |
| Sichuanese | Southwest Mandarin |
| Song Recognition | Voice content with music |
| Noisy Environments | Robust recognition in noisy scenes |
| Multi-Speaker | Simultaneous multi-speaker recognition |

Why Dialect Recognition Is Hard

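Chinese dialects can differ from one another as much as separate European languages do, and many are mutually unintelligible:

- Cantonese has six contrastive tones (nine if the checked tones are counted separately) versus Mandarin’s four, and its tone system is organized very differently.

- Wu retains the Middle Chinese entering tone and voiced initial consonants, both of which Standard Mandarin has lost.

- Minnan’s phonology differs sharply from Mandarin’s, and many of its words have no direct Mandarin equivalent.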

Existing ASR models, including well-known open-source options such as Whisper, typically show significant accuracy drops on dialect speech. The reason: training data is dominated by Mandarin, while dialect data is scarce and expensive to annotate, so most teams do not attempt it.

Xiaomi’s advantage: MIUI/HyperOS reaches hundreds of millions of Chinese users, which gives the company a natural source of dialect speech data.

Technical Highlights

1. Unified Architecture, Multi-Language/Dialect Sharing

MiMo-V2.5-ASR uses a single architecture shared across languages and dialects rather than a separate model per dialect:

- One model handles all dialects, so no model switching is needed.

- Knowledge transfers between dialects (e.g., shared phonetic features between Cantonese and Minnan).

- Deployment cost drops, because only one model has to be served.

2. Noise and Music Scenarios

Support for “song recognition” is noteworthy. Recognizing speech over background music is a classic ASR challenge: the model has to pick the vocal out of a mixed signal and transcribe the lyrics. That MiMo-V2.5-ASR lists this as a capability suggests its acoustic feature extraction is robust to strong non-speech interference.
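
One way to probe the noise and music claims on your own data is to mix clean speech with background audio at a controlled signal-to-noise ratio (SNR) and watch how recognition degrades as the SNR falls. The sketch below shows only the mixing step, assuming both signals are plain lists of float samples at the same sample rate. The function names are illustrative and are not part of any MiMo tooling.

```python
import math

def mean_power(samples):
    """Mean squared amplitude of a list of float samples."""
    return sum(s * s for s in samples) / len(samples)

def mix_at_snr(speech, background, snr_db):
    """Mix background audio (noise or music) into speech at a target SNR in dB.

    The background is looped or truncated to the length of the speech.
    """
    if not speech or not background:
        raise ValueError("speech and background must be non-empty")
    # Loop the background so that it covers the whole utterance.
    repeats = math.ceil(len(speech) / len(background))
    bg = (background * repeats)[:len(speech)]
    p_speech = mean_power(speech)
    p_bg = mean_power(bg)
    if p_bg == 0:
        # Silent background: nothing to mix in.
        return list(speech)
    # Pick the gain so that p_speech / (gain**2 * p_bg) == 10 ** (snr_db / 10).
    gain = math.sqrt(p_speech / (p_bg * 10 ** (snr_db / 10)))
    return [s + gain * b for s, b in zip(speech, bg)]
```

Running the same utterances through a recognizer at, say, 20 dB, 10 dB, and 0 dB gives a rough robustness curve that can be compared across models.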

3. Multi-Speaker Recognition

Traditional ASR assumes a single speaker. Multi-speaker recognition requires:

- Speaker diarization

- Speaker switch detection

- Independent tagging per speaker

MiMo-V2.5-ASR natively supports this without third-party tools.
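
To make the three requirements concrete, here is a minimal sketch of how speaker-tagged output might be post-processed. The release information does not describe the model's output format, so the `Segment` record and the `S1`/`S2` labels are assumptions for illustration only.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    start: float   # seconds
    end: float     # seconds
    speaker: str   # hypothetical speaker label, e.g. "S1"
    text: str

def merge_turns(segments):
    """Merge consecutive segments from the same speaker into single turns."""
    turns = []
    for seg in sorted(segments, key=lambda s: s.start):
        if turns and turns[-1].speaker == seg.speaker:
            last = turns[-1]
            turns[-1] = Segment(last.start, max(last.end, seg.end),
                                last.speaker, last.text + " " + seg.text)
        else:
            turns.append(Segment(seg.start, seg.end, seg.speaker, seg.text))
    return turns

def switch_points(turns):
    """Start times of the turns at which the active speaker changes."""
    return [b.start for a, b in zip(turns, turns[1:]) if a.speaker != b.speaker]

def format_transcript(turns):
    """Render one line per turn, tagged with time range and speaker."""
    return "\n".join(
        f"[{t.start:.1f}-{t.end:.1f}] {t.speaker}: {t.text}" for t in turns
    )
```

With a pipeline built from separate tools, the diarizer and the recognizer produce independent outputs that have to be aligned by timestamp before a step like this. A model with native multi-speaker support would presumably emit the speaker tag together with the text.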

Comparison with Existing Open-Source ASR

| Solution | Dialect Support | Multi-Speaker | Noise Robustness | Song Recognition | License |
|---|---|---|---|---|---|
| Whisper | Limited | No | Medium | No | MIT |
| FunASR | Partial | Yes | Good | No | MIT (code); models under a separate license |
| MiMo-V2.5-ASR | 6+ dialects | Yes | Good | Yes | TBD |

Action Recommendations

If you’re a developer:

- Watch the GitHub repo for license terms (determines commercial viability)

- Test the model on your own dialect data, especially for less common dialects

- Evaluate integration into existing speech pipelines
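
For the testing step, character error rate (CER) broken down by dialect is a common metric for Chinese ASR. The sketch below assumes a hypothetical `transcribe` callable that maps an audio path to text. It stands in for whatever inference call the released model exposes, which the release information does not specify.

```python
from collections import defaultdict

def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (here: strings of characters)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]

def cer_by_dialect(samples, transcribe):
    """Compute CER per dialect.

    samples: iterable of (dialect, audio_path, reference_text) tuples.
    transcribe: hypothetical callable, audio_path -> hypothesis text.
    Returns {dialect: total_edits / total_reference_characters}.
    """
    edits = defaultdict(int)
    chars = defaultdict(int)
    for dialect, audio_path, reference in samples:
        # Spaces are dropped so that spacing differences do not count as errors.
        ref = reference.replace(" ", "")
        hyp = transcribe(audio_path).replace(" ", "")
        edits[dialect] += edit_distance(ref, hyp)
        chars[dialect] += len(ref)
    return {d: edits[d] / chars[d] for d in chars if chars[d] > 0}
```

Running the same sample set through MiMo-V2.5-ASR and through an existing baseline makes the dialect-by-dialect gap visible, which matters more than a single aggregate score.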

If you’re a product manager:

- Dialect ASR has clear user demand in China (hundreds of millions of dialect speakers)

- Consider dialect support in customer service, content moderation, and subtitle generation

Based on Xiaomi MiMo-V2.5-ASR release info and open-source community discussion.