Core Conclusion

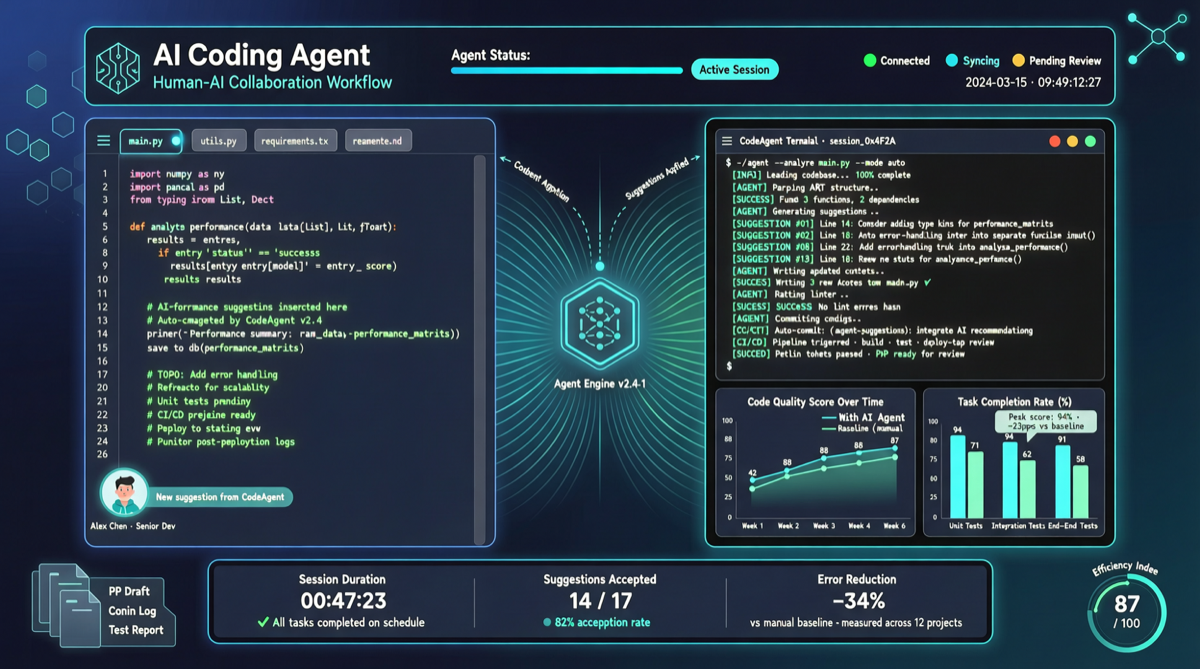

“SWE-chat: Coding Agent Interactions From Real Users in the Wild” releases a dataset of over 6,000 real developer sessions with coding Agents, each with complete prompts, tool call records, and line-level attribution of code to the human or the Agent.

This is the first large-scale study to analyze coding Agent behavior from the perspective of actual usage rather than benchmarks.

Dataset Overview

| Dimension | Data |
|---|---|
| Sessions | 6,000+ |
| Developers | Real engineers from multiple companies |
| Recorded | Prompts, tool calls, code modifications, final results |
| Granularity | Line-level human vs Agent code attribution |
| Tools covered | Major coding Agents (Claude Code, Cursor, GitHub Copilot, etc.) |

Key Findings

1. Agent Autonomy Highly Depends on Task Type

| Task Type | Agent Autonomy Rate | Typical Scenario |
|---|---|---|
| Simple refactoring | 75-85% | Variable renaming, function extraction, formatting |
| Bug fixing | 55-70% | Known error message fixes, boundary condition handling |
| New feature implementation | 40-55% | Medium complexity feature modules |
| Architecture design | 15-30% | System design, tech selection, module decomposition |

Key insight: Agents excel at “well-defined” tasks but need significant human intervention for “ambiguous requirements” and “architecture decisions.”

2. Tool Call Patterns Reveal Workflow Bottlenecks

- File reading dominates (~40%): Agents spend significant time understanding existing code

- Code editing comes next (~35%): the actual code modifications

- Test execution is low (~15%): Agents proactively run tests less often than expected

- Search and documentation queries (~10%): API documentation or Stack Overflow lookups

This suggests the bottleneck is not the ability to write code but the efficiency of understanding existing codebases.
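As a rough illustration of how a breakdown like the one above could be computed, the sketch below tallies tool calls by category and reports each category's share. The category labels (`read`, `edit`, `test`, `search`) and the flat-list session format are assumptions made for this example, not the dataset's actual schema.

```python
from collections import Counter


def tool_call_shares(calls):
    """Return each tool category's share of all calls, as a fraction of 1."""
    counts = Counter(calls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {category: n / total for category, n in counts.items()}


# Hypothetical session log: one category label per tool call.
session = ["read"] * 8 + ["edit"] * 7 + ["test"] * 3 + ["search"] * 2

shares = tool_call_shares(session)
for category, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{category}: {share:.0%}")
```

Running the same tally per session, rather than over the pooled dataset, would show whether file reading dominates everywhere or only in a subset of sessions.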

3. Main Triggers for Human Intervention

- Agent enters a loop (highest proportion): it repeatedly modifies the same code without getting the tests to pass

- Task falls beyond training data scope: it involves frameworks or libraries the Agent hasn’t seen

- Requirements change: needs shift during development and the Agent can’t adjust on its own

Implications for Agent Framework Design

Short-term optimizations

- Loop detection: when the Agent edits the same file more than N times, proactively request human intervention

- Pre-loaded codebase index: reduce the token cost of file reading with pre-built code graphs

- Defined failure boundaries: degrade gracefully when the Agent takes on tasks beyond its capability
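The loop-detection idea can be sketched in a few lines. This is a minimal illustration rather than any framework's actual mechanism: the class name `EditLoopDetector`, the default threshold of 3, and the choice to reset the counters after a passing test run are all assumptions made for the example.

```python
from collections import defaultdict


class EditLoopDetector:
    """Flags a possible loop when one file is edited too often without progress."""

    def __init__(self, max_edits=3):
        # max_edits plays the role of N: edits allowed per file before escalating.
        self.max_edits = max_edits
        self.edit_counts = defaultdict(int)

    def record_edit(self, path):
        """Record an edit; return True if a human should be asked to step in."""
        self.edit_counts[path] += 1
        return self.edit_counts[path] > self.max_edits

    def record_test_pass(self):
        """Treat a passing test run as progress and reset all counters."""
        self.edit_counts.clear()


detector = EditLoopDetector(max_edits=3)
flags = [detector.record_edit("app/models.py") for _ in range(4)]
print(flags)  # [False, False, False, True]
```

Resetting on a passing test run is one way to keep a long but productive session from being flagged; a framework could equally reset per task or per user prompt.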

Long-term directions

- Standardized autonomy measurement: SWE-chat’s line-level attribution method could become an industry standard

- Hybrid workflows: humans handle architecture and direction, Agents handle implementation details

- Continuous learning: extract patterns from SWE-chat to train coding-specific reward models
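To make the line-level attribution idea concrete, here is one possible way to turn per-line labels into a single autonomy figure. The dataset's exact definition of autonomy rate is not given here, so the formula below (Agent-attributed lines divided by all attributed lines) and the `"agent"`/`"human"` labels are assumptions for illustration only.

```python
def agent_line_share(attributions):
    """Fraction of lines labeled "agent" among all labeled lines.

    `attributions` is a hypothetical list with one label per line of the
    final code, each either "agent" or "human".
    """
    total = len(attributions)
    if total == 0:
        return 0.0
    agent_lines = sum(1 for label in attributions if label == "agent")
    return agent_lines / total


# Hypothetical session: three lines kept from the Agent, one written by the human.
labels = ["agent", "agent", "human", "agent"]
print(f"{agent_line_share(labels):.0%}")  # 75%
```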

Landscape Judgment

SWE-chat marks a shift from “benchmark score-chasing” to “real usage analysis.” The gap between SWE-bench scores (78.8%) and real-world autonomy rates (40-55% for new feature implementation) comes from the difference between well-defined benchmark tasks and the ambiguous, changing requirements of real development.

Action Recommendations

| Your Role | Action |
|---|---|
| Coding Agent users | Optimize workflow: let Agents do simple refactoring and bug fixes, humans focus on architecture |
| Agent framework devs | Integrate loop detection and graceful degradation to reduce token waste |
| Researchers | Use SWE-chat to train reward models better aligned with real scenarios |
| Tech managers | Set realistic Agent expectations based on dataset autonomy rates |

Dataset access: available via the paper’s accompanying link, complete with session records and annotations.