What One Billion Downloads Means

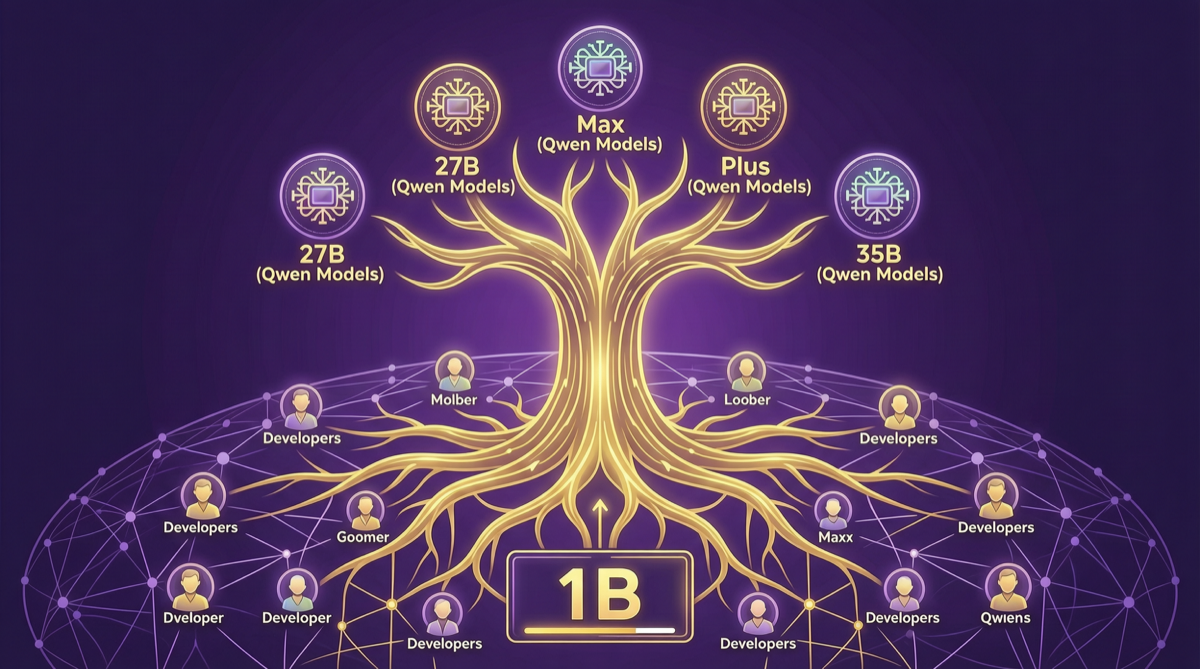

The Qwen model series has surpassed 1 billion cumulative downloads on Hugging Face. The figure is more than a win for Alibaba’s open source strategy: it marks a new level of influence for Chinese models in the global open source community.

For comparison, the Llama series took longer to reach a similar milestone. Qwen’s faster growth reflects a deliberate open source ecosystem strategy.

Qwen3.6 Family: Precision Strikes from 27B to 35B-A3B

Qwen3.6’s product line design reflects Alibaba’s precise understanding of developer needs:

Qwen3.6-27B: The Ultimate Choice for Home GPUs

The 27B-parameter Qwen3.6 is the “sweet spot” model for consumer GPUs. It can be loaded and run entirely on a single RTX 4090, in practice as a quantized build, since 16-bit weights alone would take roughly 54 GB against the card’s 24 GB of VRAM. It also performs strongly on benchmarks such as AIME 2025 mathematical reasoning. For developers who want to deploy a high-quality model locally without buying enterprise-grade GPUs, it is a near-perfect choice.
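To make the single-GPU claim concrete, here is a rough back-of-the-envelope sketch of the memory needed for the weights alone at different precisions. It assumes a 24 GB card and counts only weights; KV cache, activations, and runtime overhead come on top, so treat the numbers as lower bounds rather than a deployment guarantee.

```python
# Back-of-the-envelope VRAM estimate for model weights only.
# Ignores KV cache, activations, and runtime overhead, which add to the total.

GPU_VRAM_GB = 24   # memory capacity of an RTX 4090
PARAMS = 27e9      # 27B parameters


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Return the memory needed to hold the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    needed = weight_memory_gb(PARAMS, bits)
    verdict = "fits" if needed < GPU_VRAM_GB else "does not fit"
    print(f"{label}: {needed:.1f} GB of weights, {verdict} in {GPU_VRAM_GB} GB")
```

Under these assumptions only the 4-bit variant leaves headroom on a 24 GB card, which is why local deployments of models this size typically rely on quantized weights.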

Qwen3.6-Max-Preview: The Efficiency Revolution of MoE Architecture

Max-Preview adopts a Mixture of Experts (MoE) architecture, which significantly reduces inference cost while maintaining top-tier reasoning capability. The core idea is that, although the model is massive, a router activates only a small subset of “expert” modules for each token. In the cloud, this means flagship-level quality at a per-token compute cost closer to that of a much smaller model.
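The routing idea can be illustrated with a toy top-k gate. This is a generic MoE sketch, not Qwen’s actual implementation: the function names, the scalar “experts”, and the choice of `top_k=2` are all assumptions made for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_scores, experts, top_k=2):
    """Route one token through the top_k highest-scoring experts.

    x             -- the token's input (a single float here, for simplicity)
    router_scores -- one raw score per expert (hypothetical router output)
    experts       -- list of callables, one per expert
    Returns (output, indices of the experts that actually ran).
    """
    # Rank experts by router score and keep the best top_k.
    ranked = sorted(range(len(experts)), key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:top_k]
    # Renormalize the gate weights over the chosen experts only.
    gates = softmax([router_scores[i] for i in chosen])
    # Only the chosen experts are evaluated; the others cost nothing for this token.
    output = sum(g * experts[i](x) for g, i in zip(gates, chosen))
    return output, chosen


# Four toy "experts": each simply scales its input by a different factor.
experts = [lambda x, k=k: k * x for k in (1.0, 2.0, 3.0, 4.0)]
output, used = moe_forward(x=1.0, router_scores=[0.1, 2.0, 0.3, 1.0], experts=experts)
print("experts used:", used, "output:", output)
```

Here two of the four experts run for the token, so the compute spent is roughly half of what a dense layer containing all four would need, while the parameters of every expert remain available to other tokens.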

Qwen3.6-Plus: The Workhorse for Agentic Workflows

The Plus version targets agentic workloads. It has been specifically optimized for tool calling, multi-step reasoning, and long-context understanding, which makes it a strong foundation for enterprises building AI agent applications. Integration with cloud platforms such as Together AI has significantly improved its global availability.

Qwen3.6-35B-A3B: A Refined Variant of MoE Architecture

35B-A3B is another noteworthy design in the Qwen3.6 family. Of its 35B total parameters, only about 3B are activated for each token. This extreme sparsity lets it keep 35B-level knowledge capacity while its per-token compute, and therefore its inference speed, approaches that of a 3B model. All 35B parameters must still be held in memory, however. For online services that need high throughput, this architecture offers significant cost advantages.
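The trade-off can be put into rough numbers. The sketch below uses the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token; that rule, and the 4-bit weight format used for the memory figure, are assumptions for illustration rather than published specifications.

```python
TOTAL_PARAMS = 35e9    # every expert plus shared layers
ACTIVE_PARAMS = 3e9    # parameters actually used for a single token


def approx_flops_per_token(num_params: float) -> float:
    """Rule-of-thumb forward-pass cost: about 2 FLOPs per parameter per token."""
    return 2 * num_params


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
compute_saving = approx_flops_per_token(TOTAL_PARAMS) / approx_flops_per_token(ACTIVE_PARAMS)
# Memory is driven by TOTAL parameters: all experts must be resident.
weights_gb_4bit = TOTAL_PARAMS * 4 / 8 / 1e9

print(f"active fraction per token: {active_fraction:.1%}")
print(f"compute vs. a dense 35B model: about {compute_saving:.1f}x less")
print(f"weights at 4-bit: {weights_gb_4bit:.1f} GB (sized by total, not active, parameters)")
```

The compute saving comes from the active parameter count, but the memory footprint does not shrink with it, which is why this design suits high-throughput serving better than memory-constrained devices.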

Scope: Open Source Interpretability Toolkit

The Scope toolkit, open-sourced by the Qwen team, is an important contribution to the open source AI field in 2026.

Scope provides a set of model interpretability tools based on SAE (Sparse Autoencoder) technology, helping developers understand how a model represents information internally. Such tooling is rare in the open source community: most model teams publish only benchmark scores, without tools for in-depth analysis of model behavior.
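For readers unfamiliar with the technique, a sparse autoencoder maps a model activation into a wider, mostly-zero feature vector and then reconstructs the activation from it. The following is a minimal generic sketch in plain Python; it does not use or reflect Scope’s actual API, and the class name, dimensions, and `l1_coeff` value are illustrative assumptions.

```python
import random


def relu(vec):
    return [max(0.0, a) for a in vec]


def matvec(matrix, vec):
    """Multiply a row-major matrix by a vector."""
    return [sum(w * a for w, a in zip(row, vec)) for row in matrix]


class SparseAutoencoder:
    """Toy SAE: activation (d_model) -> sparse features (d_hidden) -> reconstruction."""

    def __init__(self, d_model, d_hidden, seed=0):
        rng = random.Random(seed)
        self.W_enc = [[rng.gauss(0, 0.1) for _ in range(d_model)] for _ in range(d_hidden)]
        self.b_enc = [0.0] * d_hidden
        self.W_dec = [[rng.gauss(0, 0.1) for _ in range(d_hidden)] for _ in range(d_model)]
        self.b_dec = [0.0] * d_model

    def encode(self, x):
        # ReLU keeps features non-negative and zeroes out inactive ones.
        pre = [p + b for p, b in zip(matvec(self.W_enc, x), self.b_enc)]
        return relu(pre)

    def decode(self, features):
        return [r + b for r, b in zip(matvec(self.W_dec, features), self.b_dec)]

    def loss(self, x, l1_coeff=1e-3):
        # Reconstruction error plus an L1 penalty that pushes features toward zero.
        features = self.encode(x)
        x_hat = self.decode(features)
        mse = sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)
        l1 = sum(features)  # features are already non-negative after ReLU
        return mse + l1_coeff * l1


sae = SparseAutoencoder(d_model=4, d_hidden=16)
activation = [0.5, -1.0, 0.25, 2.0]   # stand-in for a real hidden-state vector
features = sae.encode(activation)
active = sum(1 for f in features if f > 0)
print(f"{active} of {len(features)} features active, loss = {sae.loss(activation):.4f}")
```

In a real setting the weights would be trained to minimize this loss over many activations, after which individual features can be inspected for the concepts they respond to.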

The openness of Scope means:

- Developers can audit model decision processes, improving AI system transparency

- Researchers can conduct deeper interpretability research based on Scope

- Enterprise users can better evaluate model reliability in specific scenarios

The Qwen Ecosystem’s Developer Community

Behind the one billion downloads lies an active developer community:

- Hugging Face community: Qwen models are among the most forked and discussed non-Llama models on Hugging Face

- Model adaptation: Ollama, LM Studio, llama.cpp and other mainstream inference frameworks natively support Qwen

- Enterprise adoption: Multiple Chinese tech companies have built their own vertical industry models based on Qwen

- Academic research: Qwen is one of the most widely used open source models in AI safety, alignment, and interpretability research

Challenges and Concerns

Despite the Qwen ecosystem’s strong overall momentum, it faces several challenges:

Talent Drain

The Qwen core team has seen some turnover recently. Multiple core researchers and engineers have left Alibaba to join other AI companies or start their own ventures, which tests Qwen’s capacity for sustained innovation. However, the open source community around Qwen is well established, so it can keep evolving on its own even as the team changes.

US Investigation Pressure

The US Congress is conducting investigations into Chinese AI models, and Qwen, as the most influential Chinese open source model globally, inevitably receives attention. This may affect the availability of Qwen models on certain overseas cloud platforms and the willingness of international enterprises to adopt them.

Intensifying Competition

The rise of DeepSeek V4 Pro and Kimi K2.6 has intensified competition within the Chinese model ecosystem. While this is good for the industry as a whole, it means Qwen needs to maintain its current pace of innovation or risk being overtaken.

The Strategic Significance of the Qwen Ecosystem

From a broader perspective, Qwen’s one billion downloads represents a key turning point in the globalization of Chinese models:

In the past, Chinese models primarily served the domestic market, with open source as a side effect.

Now, Qwen puts the global open source community at the core of its strategy, reaching developers worldwide through platforms like Hugging Face and forming a positive cycle of “open source → community feedback → model improvement → more adoption.”

In the future, if Qwen can maintain its current pace of innovation, it has the potential to become an indispensable part of global AI infrastructure, much as Linux is in operating systems.

Action Recommendations

- Individual developers: If you’re looking for an open source model to run locally, Qwen3.6-27B is currently one of the best choices. Combined with Ollama, you can deploy it on your Mac or Linux machine within minutes.

- Enterprise teams: Qwen3.6-Plus’s agentic capabilities are already sufficient to support most enterprise-level AI application development. Combined with the Scope toolkit, you can thoroughly evaluate model reliability before deployment.

- Researchers: The Scope toolkit provides rare infrastructure for interpretability research. Leveraging Qwen’s open source weights and Scope’s analysis capabilities can produce valuable research results.

- Investors: The Qwen ecosystem’s influence is a significant asset in Alibaba’s AI strategy. Monitoring the health of the Qwen community and talent retention offers an indirect read on Alibaba’s competitiveness in AI.
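For the local deployment path in the first recommendation, a minimal sketch of calling a locally running Ollama server over its REST API might look like the following. The model tag `qwen3.6:27b` is a hypothetical placeholder, not a confirmed name; check `ollama list` or the Ollama model library for the tag that actually applies. The sketch also assumes Ollama is listening on its default port, 11434.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "qwen3.6:27b"  # HYPOTHETICAL tag; replace with the one shown by `ollama list`


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = MODEL_TAG) -> str:
    """Send the request; requires a running Ollama server with the model pulled."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]


# Building the request needs no network access, so it is safe to inspect offline.
request = build_request("Summarize mixture-of-experts in one sentence.")
print(request.get_method(), request.full_url)
```

Once the server is running and the model has been pulled, calling `generate("...")` returns the completion text as a string.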