If you are still using the “chunk → embed → vector DB → similarity search” RAG pipeline, PageIndex might be the most important wake-up call of 2026.

The Pain Point

Every step in the traditional RAG pipeline loses information:

- Chunking: Cutting coherent documents into fragments, breaking contextual relationships

- Embedding: Compressing semantics into fixed-dimension vectors, losing details

- Vector DB retrieval: Recall based on cosine similarity, but “similar” does not equal “relevant”

- Context assembly: Feeding fragments into prompts, forcing the LLM to piece together complete meaning
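
The chunking loss is easy to reproduce. The snippet below is a toy illustration, not part of PageIndex: `chunk_fixed` is a hypothetical helper, and the two-sentence document and the 24-character chunk size are invented for the demo. It shows a claim landing in one chunk while its caveat is split across two others.

```python
def chunk_fixed(text: str, size: int) -> list[str]:
    """Split text into consecutive fixed-size character chunks (no overlap)."""
    return [text[i:i + size] for i in range(0, len(text), size)]


# Invented two-sentence document: a claim followed by its caveat.
doc = (
    "Revenue grew 12% in Q1. "
    "However, this figure excludes the divested unit."
)

chunks = chunk_fixed(doc, 24)
for i, chunk in enumerate(chunks):
    print(i, repr(chunk))

# A retriever that returns only the chunk matching "Revenue grew"
# hands the LLM the claim without the caveat that qualifies it.
hit = next(c for c in chunks if "Revenue grew" in c)
print("caveat present in retrieved chunk:", "excludes" in hit)
```

Overlapping windows or sentence-aware splitters reduce this effect, but the retriever still scores each fragment in isolation.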

PageIndex’s approach: Why not let the LLM browse the entire document structurally, like a human would?

The PageIndex Solution

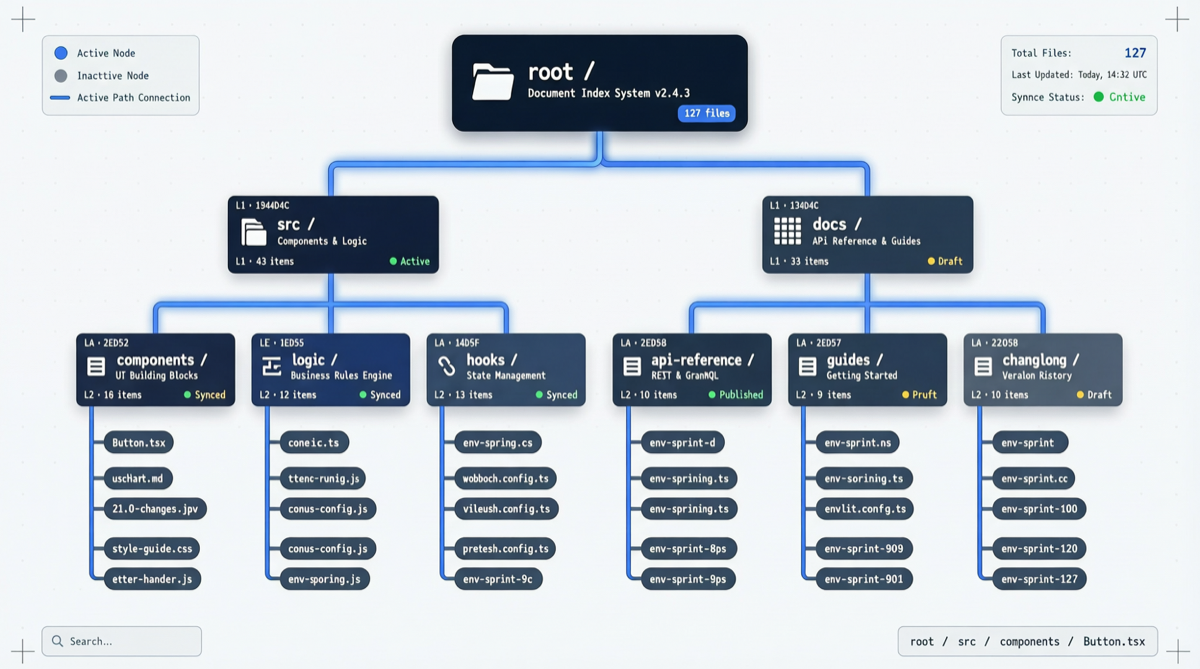

PageIndex’s core mechanism is a document tree index:

- Build a hierarchical tree structure over documents (chapters → sections → subsections)

- The LLM starts from the root node and navigates layer by layer to relevant leaf nodes

- At each step, the LLM autonomously decides which branch to explore next

- Ultimately, it reads only the most relevant complete content segments, rather than fragmented chunks

This process bypasses embedding and vector search entirely: the model locates information the way a reader uses a book’s table of contents.
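
The navigation loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not PageIndex's implementation: `Node`, `build_tree`, `navigate`, and `title_overlap_chooser` are hypothetical names, the tree is built from markdown headings only, and a simple word-overlap scorer stands in for the LLM call that would pick a branch at each level.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Node:
    title: str
    level: int = 0
    text: str = ""
    children: "list[Node]" = field(default_factory=list)


def build_tree(markdown: str) -> Node:
    """Turn '#' headings into a tree; body lines attach to the nearest heading above."""
    root = Node(title="ROOT")
    stack = [root]  # path from the root to the most recent heading
    for line in markdown.splitlines():
        match = re.match(r"^(#+)\s+(.*)$", line)
        if match:
            node = Node(title=match.group(2).strip(), level=len(match.group(1)))
            while stack[-1].level >= node.level:
                stack.pop()  # climb back up to this heading's parent
            stack[-1].children.append(node)
            stack.append(node)
        elif line.strip():
            stack[-1].text += line.strip() + " "
    return root


def words(s: str) -> set:
    """Lowercased alphanumeric tokens of a string."""
    return set(re.findall(r"[a-z0-9]+", s.lower()))


def title_overlap_chooser(query: str, options: list) -> int:
    """Hypothetical stand-in for the LLM: pick the title sharing the most words with the query."""
    scores = [len(words(query) & words(n.title)) for n in options]
    return scores.index(max(scores))


def navigate(root: Node, query: str, choose):
    """Descend from the root, one branch per level, until a leaf is reached."""
    node, path = root, []
    while node.children:
        node = node.children[choose(query, node.children)]
        path.append(node.title)
    return node, path


# Invented sample document for the demo.
DOC = """\
# Annual Report
## Revenue
### Q1 Revenue Drivers
Cloud subscriptions drove most of the growth.
### Q2 Revenue Drivers
Hardware sales recovered.
## Risk Factors
Currency exposure remains the main risk.
"""

leaf, path = navigate(build_tree(DOC), "What were the Q1 revenue drivers?", title_overlap_chooser)
print(" > ".join(path))
print(leaf.text.strip())
```

In a real system the `choose` callback would be a prompt that shows the model the child titles (or summaries) and asks for one of them. Keeping it as a plain function makes the descent logic testable without a model.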

Data Comparison

| Dimension | Traditional RAG | PageIndex |
|---|---|---|
| Vector DB required | Yes (Pinecone/Milvus, etc.) | No |
| Embedding model required | Yes | No |
| Chunking required | Yes | No |
| Similarity search required | Yes | No |
| FinanceBench accuracy | ~80-85% | 98.7% |
| Long document processing | Information fragmentation | Preserves hierarchical structure |
| Deployment complexity | Multi-component (embedder + vector DB + retriever) | Single component |

The 98.7% FinanceBench score sits well above the roughly 80-85% range listed for vector-retrieval-based RAG. A gap of that size points to a difference in method, not a marginal tuning gain.

Why Now?

PageIndex’s success depends on two prerequisites that only truly matured in 2026:

- LLM context windows are large enough: 1M+ token contexts let a model hold an entire document tree in view at once

- LLM navigation capability is strong enough: Models must make multi-step decisions over a tree and choose the correct branch at each step, because a wrong turn near the root puts the relevant leaves out of reach

In other words, PageIndex does not remove the dependence on LLMs; it demands stronger ones. When models are capable enough, traditional embedding and vector search become unnecessary intermediate layers.

Getting Started

```bash
# Install
pip install pageindex
```

```python
# Basic usage (interface as shown in the original post;
# confirm names against the PageIndex GitHub README before relying on them)
from pageindex import PageIndex

# Build document index
index = PageIndex.from_documents([
    "financial_report_2026.pdf",
    "annual_summary.md",
])

# Query (LLM autonomously navigates tree structure)
result = index.query("What were the main revenue growth drivers in Q1 2026?")
```

Applicable Scenarios and Limitations

| Suitable for | Not suitable for |
|---|---|
| Long documents (reports, manuals over 100 pages) | Short text collections (social media posts, brief reviews) |
| Structured documents (with clear chapter hierarchy) | Unstructured text streams |
| Finance/legal scenarios requiring high precision | Real-time search requiring extremely low latency |
| Teams wanting to reduce infrastructure dependencies | Teams with mature vector DB pipelines performing well |

Three-Judge Assessment

Increment: A RAG approach that skips embedding, vector DB, and chunking entirely had not been validated at scale before. The 98.7% FinanceBench score is a real achievement.

Noise: Detailed results are currently available only for FinanceBench; performance on other benchmarks (HotpotQA, 2WikiMultihopQA) has not been published. The cost of building tree indexes over very large document sets remains to be verified.

Signal: The X post, with 5,775 likes and 9,809 bookmarks, shows strong community interest. If “RAG without vector databases” becomes a central topic, vector database vendors will need to seriously reconsider their product positioning.

Sources: PageIndex GitHub | X/Twitter Discussion