The most awkward phase of enterprise AI adoption is not “can’t do it” but “can demo it but can’t deploy it.” Mistral AI’s Workflows, launched today, targets exactly this gap.

Bottom Line

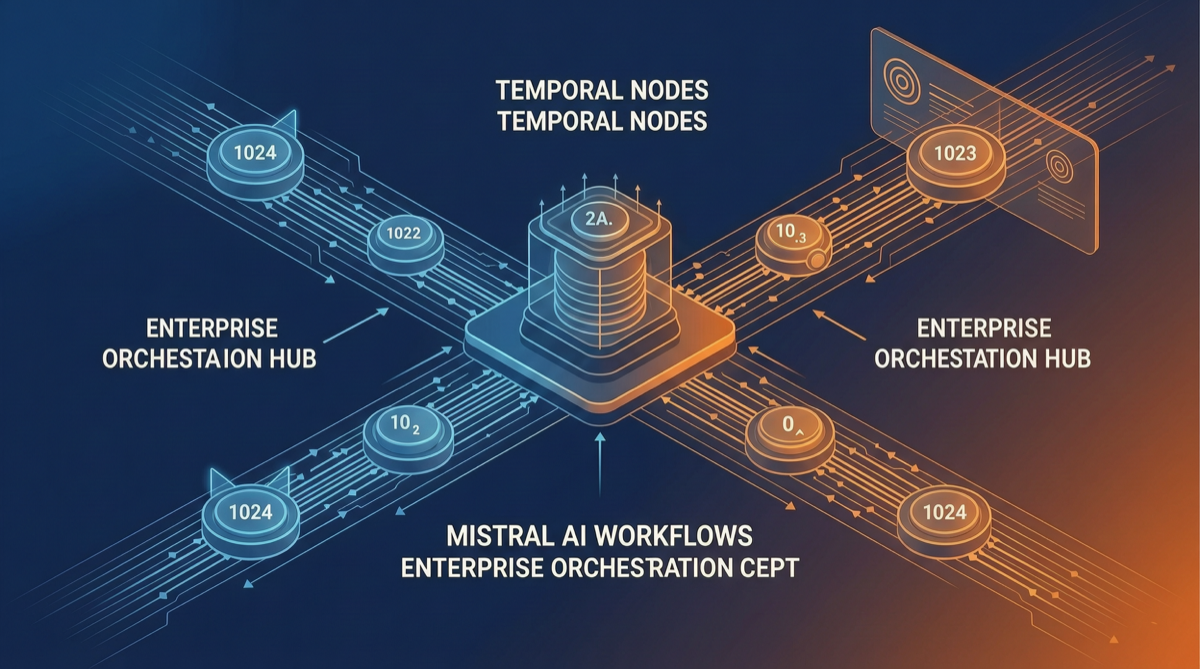

Mistral Workflows is not another Agent framework. It is a durable orchestration layer that sits below LangChain or Hermes. It keeps AI workflows running in production when they hit network issues, model timeouts, or pauses for human intervention. It is built on the Temporal engine and is already running in production at ASML and France Travail.

What Mistral Workflows Does

Core Capabilities

| Capability | Problem Solved | Implementation |
|---|---|---|
| Durable Execution | AI workflows resume from a checkpoint after an interruption | Built on Temporal; state is persisted automatically at every step |
| Full Observability | Branches, retries, and state changes are all logged | Built-in event tracking and timeline replay in Studio |
| Human-in-the-Loop | Critical decisions require human review | One line of code pauses the workflow, which resumes after approval |
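To make the durable-execution and observability rows concrete, here is a minimal, framework-free sketch of checkpoint-and-resume. `CheckpointedRun`, `step`, and the local JSON checkpoint file are hypothetical illustrations, not the API of Mistral Workflows or Temporal. Temporal, for instance, records state on its own server rather than in a local file. The first run "times out" in its second step. The restarted run restores the first step from the checkpoint instead of redoing it, and its event list serves as a tiny timeline of what happened.

```python
import json
import os
import tempfile
from typing import Any, Callable, Dict, List


class CheckpointedRun:
    """Hypothetical step runner: persists each completed step's result to a
    JSON file so a restarted run skips work that already finished."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.state: Dict[str, Any] = {}
        self.events: List[str] = []  # simple timeline, a stand-in for observability
        if os.path.exists(path):
            with open(path) as f:
                self.state = json.load(f)

    def step(self, name: str, fn: Callable[[], Any]) -> Any:
        if name in self.state:  # finished in an earlier run: restore, don't redo
            self.events.append(f"skip {name} (restored)")
            return self.state[name]
        self.events.append(f"start {name}")
        result = fn()  # if this raises, nothing is recorded for this step
        self.state[name] = result
        tmp = self.path + ".tmp"
        with open(tmp, "w") as f:  # write-then-rename avoids a half-written checkpoint
            json.dump(self.state, f)
        os.replace(tmp, self.path)
        self.events.append(f"done {name}")
        return result


def pipeline(run: CheckpointedRun, model_times_out: bool) -> str:
    text = run.step("extract", lambda: "invoice total: 42 EUR")

    def classify() -> str:
        if model_times_out:
            raise TimeoutError("model timeout")  # simulated interruption
        return "finance"

    label = run.step("classify", classify)
    return f"{label}: {text}"


path = os.path.join(tempfile.mkdtemp(), "run.json")
try:
    pipeline(CheckpointedRun(path), model_times_out=True)  # first run dies mid-way
except TimeoutError:
    pass

second = CheckpointedRun(path)  # "restart": reload the checkpoint
result = pipeline(second, model_times_out=False)
print(result)         # finance: invoice total: 42 EUR
print(second.events)  # ['skip extract (restored)', 'start classify', 'done classify']
```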

Why Temporal

Temporal is the open-source successor to Uber's Cadence and is used by companies such as Netflix and Stripe for production-grade microservice orchestration. Mistral's choice sends a clear signal: AI workflows need the same reliability guarantees as microservices.
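Temporal's reliability rests on an event history. Each completed step's result is recorded. After a crash, the deterministic workflow code is re-run and fed the recorded results instead of redoing the work. The toy below mimics that idea in plain Python. `ReplayContext`, `activity`, and `screening_workflow` are hypothetical names. The real Temporal SDKs persist history on the Temporal server and enforce determinism far more strictly.

```python
from typing import Any, Callable, List, Optional


class ReplayContext:
    """Hypothetical toy of event-history replay: activity results are appended
    to a history; on replay, recorded results are returned instead of re-running."""

    def __init__(self, history: Optional[List[Any]] = None) -> None:
        self.history: List[Any] = list(history or [])
        self.cursor = 0    # position of the next activity in the history
        self.executed = 0  # activities actually run by this worker

    def activity(self, fn: Callable[[], Any]) -> Any:
        if self.cursor < len(self.history):
            result = self.history[self.cursor]  # replay: reuse the recorded result
        else:
            result = fn()                       # new work: run it, then record it
            self.history.append(result)
            self.executed += 1
        self.cursor += 1
        return result


def screening_workflow(ctx: ReplayContext, crash_before: Optional[int] = None) -> str:
    # Workflow code must be deterministic: same activities, same order, every run.
    activities = [lambda: "parsed", lambda: "scored", lambda: "ranked"]
    results = []
    for i, act in enumerate(activities):
        if i == crash_before:
            raise RuntimeError("worker crashed")  # simulated process loss
        results.append(ctx.activity(act))
    return ",".join(results)


first = ReplayContext()
try:
    screening_workflow(first, crash_before=2)
except RuntimeError:
    pass

second = ReplayContext(first.history)  # a new worker picks up the saved history
result = screening_workflow(second)
print(result)           # parsed,scored,ranked
print(second.executed)  # 1 (only the step that had not completed is executed)
```

The determinism comment is the crux of the design. Replay only works if the code requests the same activities in the same order each time.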

First Customer Use Cases

ASML (Semiconductor Equipment Giant):

- AI workflows integrated into chip manufacturing quality inspection

- Human-in-the-loop ensures critical judgments are reviewed by engineers

- Durable execution ensures long-running inspection processes don’t fail

France Travail (French Employment Agency):

- Workflows orchestrate resume matching and job seeker recommendations

- Observability tracks the effectiveness of each decision branch

- Human approval added at points involving personal data processing

Why This Matters

Enterprise AI’s “POC Trap”

Many enterprise AI projects in 2025-2026 are stuck at the proof-of-concept (POC) stage. The obstacle isn't model capability but these three gaps:

- State Loss: Agent crashes halfway, all progress is lost

- No Observability: When things go wrong, no one knows which step, which model, which call failed

- No Human Collaboration: Critical scenarios need human review, but there is no standardized pause/resume mechanism
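The missing pause/resume mechanism can be pictured as a small state machine. The workflow runs its automated step and then stops at a review gate. It continues only after a reviewer's decision is recorded. This is a hypothetical sketch. `ApprovalWorkflow` and `submit_decision` are illustrative names, not Mistral's "one line of code" API. State here lives in memory, whereas a production system would persist it so that a pause can safely last hours or days.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ApprovalWorkflow:
    """Hypothetical human-in-the-loop gate: the workflow pauses at a review
    point and only continues once a reviewer's decision has been recorded."""

    candidate: str
    status: str = "running"
    decision: Optional[bool] = None
    log: List[str] = field(default_factory=list)

    def run(self) -> str:
        if self.status == "running":
            self.log.append(f"matched {self.candidate}")  # automated AI step
            self.status = "awaiting_approval"             # pause before acting
            self.log.append("paused for review")
        if self.status == "awaiting_approval":
            if self.decision is None:
                return self.status                        # stay paused
            self.status = "approved" if self.decision else "rejected"
            self.log.append(f"reviewer {self.status}")
        return self.status

    def submit_decision(self, approved: bool) -> str:
        # In Temporal, an external input like this would typically arrive as a signal.
        self.decision = approved
        return self.run()  # resume from where the workflow paused


wf = ApprovalWorkflow(candidate="applicant-17")
print(wf.run())                  # awaiting_approval
print(wf.run())                  # awaiting_approval (still waiting)
print(wf.submit_decision(True))  # approved
print(wf.log)  # ['matched applicant-17', 'paused for review', 'reviewer approved']
```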

Mistral Workflows targets all three pain points directly.

Differentiation from Existing Solutions

| Solution | Positioning | Durability | Observability | Human Loop |
|---|---|---|---|---|
| LangGraph | Agent graph orchestration | Self-implemented | Requires LangSmith | Self-implemented |
| OpenAI Swarm | Multi-agent collaboration | None | None | None |
| CrewAI | Role-based specialization | Limited | Limited | Via plugins |
| Mistral Workflows | Production orchestration | Built-in | Built-in | One line of code |

Strategic Significance for European AI

In an AI market dominated by Anthropic's Claude and OpenAI's GPT models, Mistral carves out a distinct position through Workflows:

- Not competing head-on over model capability, since the platform already has its own model (Mistral Medium 3.5 128B) behind it

- Building barriers at the enterprise integration layer—once companies run critical processes on Mistral Workflows, migration costs are prohibitive

- Using Temporal’s mature ecosystem to reduce enterprise procurement risk

Action Recommendations

If You’re Already Using Mistral Models

- Evaluate whether Workflows solves your current production pain points: Do you have workflow failures due to interruptions? Do you need human approval?

- Pilot on non-critical workflows first: Use internal tool approval flows as a POC to validate durability and observability value

If You’re Using LangChain/LangGraph

- Workflows is not a replacement but a complementary layer. Consider:

  - LangGraph for Agent logic orchestration

  - Mistral Workflows for production-grade durability and observability

  - Integrating the two via API
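One way to picture this split is to keep the Agent logic as a plain callable and let the orchestration layer wrap it with production concerns such as retries. The sketch below uses exponential backoff. `with_retries` and its parameters are hypothetical, loosely echoing the initial-interval, backoff, and maximum-attempts settings of a Temporal retry policy. This is not how LangGraph and Mistral Workflows actually connect; the source says only that they integrate "via API".

```python
import time
from typing import Callable, Dict, List, Tuple, Type, TypeVar

T = TypeVar("T")


def with_retries(
    fn: Callable[[], T],
    max_attempts: int = 4,
    initial_interval: float = 1.0,
    backoff: float = 2.0,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Hypothetical orchestration-layer wrapper: retries transient failures
    with exponential backoff; the wrapped agent logic stays unchanged."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    delay = initial_interval
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(delay)
            delay *= backoff
    raise RuntimeError("unreachable")


calls: Dict[str, int] = {"n": 0}


def agent_step() -> str:
    """Stands in for agent logic (e.g. one graph node); times out twice."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("model timeout")
    return "draft reply"


waits: List[float] = []
result = with_retries(agent_step, sleep=waits.append)  # record waits instead of sleeping
print(result, waits)  # draft reply [1.0, 2.0]
```

The `sleep` parameter is injected so the example runs instantly. The same seam lets a real orchestrator substitute a durable timer for the blocking wait.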

If You’re Building Enterprise AI from Scratch

- Include “production-grade orchestration” in your tech evaluation criteria, not just “which model is strongest”

- Mistral’s “model + orchestration” integrated solution reduces integration complexity

- Follow the Temporal community ecosystem, which is becoming the de facto standard for enterprise orchestration

Summary

The value of Mistral Workflows is not in doing something new (Temporal has existed for years), but in being the first to systematically apply mature microservice orchestration methodology to AI workflows. When enterprise AI shifts from “can we do it” to “can we run it reliably,” this kind of infrastructure-layer capability may matter more than a few percentage points on model benchmarks.
