Key Findings

Hermes Agent’s memory system recently drew heavy discussion on X/Twitter (1180+ likes, 271+ retweets, 1509 bookmarks). Its core innovation is correcting systemic deficiencies in the way OpenClaw and other early Agent frameworks handle memory.

If you’ve followed the memory systems of ChatGPT, Claude, or OpenClaw, you know these Agents share a common problem: severe information loss during memory compression. Hermes Agent tackles this problem at the root by redesigning the memory architecture.

Three-Layer Memory Architecture

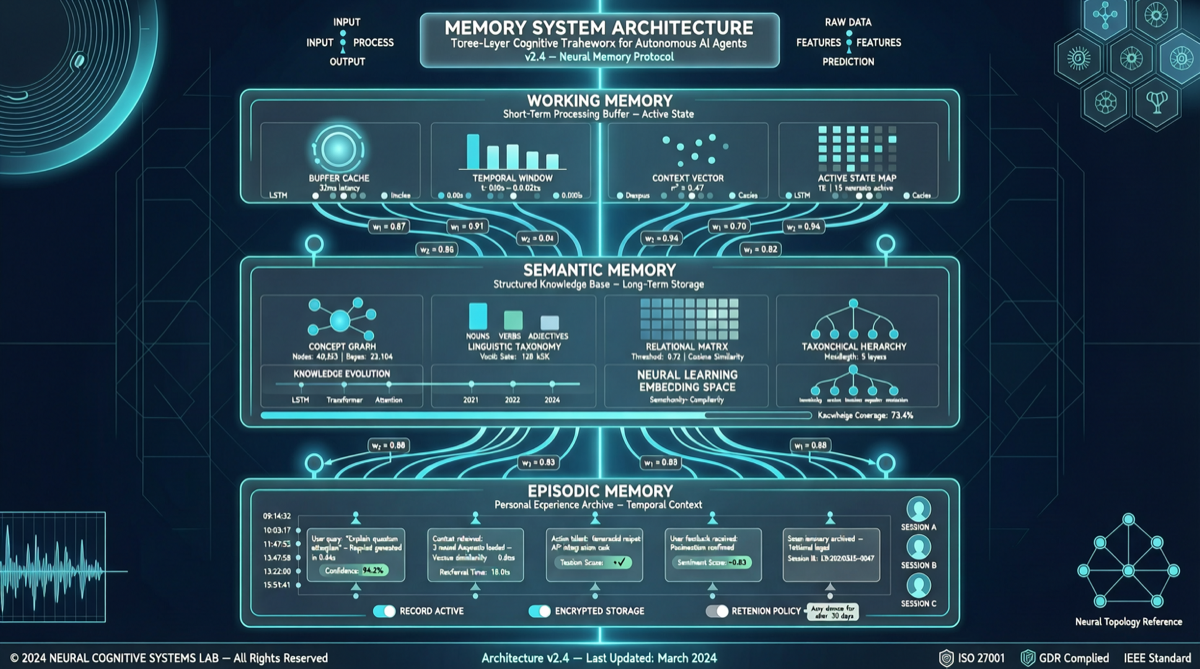

Hermes Agent employs a three-layer memory architecture, each layer handling different time spans and levels of abstraction:

1. Working Memory

- Short-term context for the current conversation window

- Limited capacity but fastest retrieval speed

- Handles immediate task switching and short-term goal maintenance

- Key design: Where OpenClaw simply truncates the context, Hermes scores each item by task importance and decides dynamically what to retain
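
The difference between truncation and importance-based retention can be sketched as follows. This is a minimal illustration, not Hermes’ actual implementation: the `Turn` type, the `importance` score in [0, 1], and both function names are assumptions made for the example, and the source does not say how Hermes computes importance.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    text: str
    importance: float  # hypothetical score in [0, 1]; scoring method is assumed
    tokens: int        # token cost of keeping this turn in context


def retain_by_importance(turns, budget):
    """Keep the most important turns that fit in `budget`, in original order."""
    ranked = sorted(range(len(turns)),
                    key=lambda i: turns[i].importance, reverse=True)
    kept, used = set(), 0
    for i in ranked:
        if used + turns[i].tokens <= budget:
            kept.add(i)
            used += turns[i].tokens
    return [turns[i] for i in sorted(kept)]


def truncate_oldest(turns, budget):
    """Baseline: keep only the most recent turns that fit in `budget`."""
    kept, used = [], 0
    for turn in reversed(turns):
        if used + turn.tokens > budget:
            break
        kept.append(turn)
        used += turn.tokens
    return list(reversed(kept))


if __name__ == "__main__":
    history = [
        Turn("goal: ship v2 by Friday", 0.9, 10),
        Turn("small talk", 0.1, 10),
        Turn("run the tests", 0.5, 10),
    ]
    print([t.text for t in retain_by_importance(history, 20)])
    print([t.text for t in truncate_oldest(history, 20)])
```

With a budget of 20 tokens, the importance-based version keeps the early goal statement, while plain truncation drops it in favor of the more recent small talk.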

2. Semantic Memory

- Long-term accumulated knowledge graph: user preferences, project structures, commonly used tools

- Stored in structured format, supports semantic retrieval

- Key design: Hermes introduces a progressive refinement mechanism. After each conversation, it automatically extracts new semantic knowledge and integrates it into the existing store instead of simply appending it

- This is precisely what OpenClaw lacks: its memory is effectively “write-only”, with information stored but rarely reviewed or integrated afterwards

3. Episodic Memory

- Records specific historical interaction events: “Last time the user asked me to modify function Y in file X”

- Supports timeline retrieval and pattern recognition

- Key design: Hermes combines event tagging + time decay to ensure important historical events aren’t forgotten while reducing the weight of stale information
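
One way to combine event tagging with time decay is an exponentially decaying weight with a per-tag floor. The sketch below assumes this particular formula; the 30-day half-life, the `"important"` tag, its floor of 0.5, and all names are hypothetical choices for illustration, not values taken from Hermes.

```python
from dataclasses import dataclass, field

HALF_LIFE_DAYS = 30.0             # assumed: weight halves every 30 days
TAG_FLOOR = {"important": 0.5}    # assumed: tagged events never drop below this


@dataclass
class Episode:
    summary: str
    age_days: float
    tags: set = field(default_factory=set)


def episode_weight(ep):
    """Exponential time decay, clamped from below by the highest tag floor."""
    decayed = 0.5 ** (ep.age_days / HALF_LIFE_DAYS)
    floor = max((TAG_FLOOR.get(tag, 0.0) for tag in ep.tags), default=0.0)
    return max(decayed, floor)


def rank_episodes(episodes):
    """Order episodes from highest to lowest retrieval weight."""
    return sorted(episodes, key=episode_weight, reverse=True)


if __name__ == "__main__":
    episodes = [
        Episode("fixed a typo in the README", age_days=300),
        Episode("user asked never to force-push", age_days=300, tags={"important"}),
        Episode("modified function Y in file X", age_days=0),
    ]
    for ep in rank_episodes(episodes):
        print(round(episode_weight(ep), 4), ep.summary)
```

The floor is what keeps a tagged event retrievable long after an untagged event of the same age has faded to near zero.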

Three OpenClaw Misconceptions Corrected

Misconception 1: Memory = Context Concatenation

OpenClaw’s memory handling essentially concatenates historical dialogue fragments directly into the current context. This approach has two fatal problems:

- Token consumption grows linearly with conversation length

- Key information is diluted by noise

Hermes’ solution: Through the three-layer architecture, only the most relevant memories are injected into the current context, reducing token consumption by approximately 60% relative to plain concatenation.
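
Selective injection can be sketched as "score every memory against the query, then fill a token budget from the top". The example below is an assumption-laden illustration: it uses word-overlap (Jaccard) similarity as a stand-in for whatever semantic retrieval Hermes actually uses, counts whitespace-separated words as tokens, and the function names and `min_score` threshold are hypothetical.

```python
def relevance(query, memory):
    """Jaccard word overlap; a crude stand-in for semantic similarity."""
    q = set(query.lower().split())
    m = set(memory.lower().split())
    union = q | m
    return len(q & m) / len(union) if union else 0.0


def select_memories(query, memories, token_budget, min_score=0.05):
    """Pick the most relevant memories that fit within `token_budget`."""
    scored = sorted(((relevance(query, m), m) for m in memories),
                    key=lambda pair: pair[0], reverse=True)
    chosen, used = [], 0
    for score, memory in scored:
        cost = len(memory.split())  # assumed token estimate: one word, one token
        if score < min_score or used + cost > token_budget:
            continue
        chosen.append(memory)
        used += cost
    return chosen


if __name__ == "__main__":
    memories = [
        "user prefers pytest for tests",
        "project uses poetry for packaging",
        "user likes dark mode",
    ]
    query = "which tests framework does the user prefer"
    print(select_memories(query, memories, token_budget=5))
```

Concatenating all three memories would cost 14 words here, whereas the selected subset costs 5. The actual savings depend entirely on the workload and the retrieval method.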

Misconception 2: Forgetting = Deletion

OpenClaw’s memory management uses a simple FIFO (First In, First Out) strategy: once capacity is reached, the oldest conversations are simply discarded.

Hermes’ solution: Forgetting = Degradation. Rather than being deleted outright, important information is demoted from working memory to semantic memory, and then to episodic memory. Its “temperature” gradually decreases, but the information never disappears completely.
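
The contrast between deletion and degradation can be sketched with a temperature value that cools over time and determines the tier an item lives in. The thresholds (0.6 and 0.3), the cooling factor, and all names below are hypothetical; only the tier order (working, then semantic, then episodic) comes from the description above.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    content: str
    temperature: float = 1.0  # starts "hot" in working memory


def tier_for(temperature):
    """Map a temperature to a tier; thresholds are assumed for illustration."""
    if temperature >= 0.6:
        return "working"
    if temperature >= 0.3:
        return "semantic"
    return "episodic"


def cool(items, factor=0.5):
    """Degrade every item in place; nothing is ever removed from the list."""
    for item in items:
        item.temperature *= factor


def fifo_evict(items, capacity):
    """Baseline FIFO: anything beyond `capacity` is dropped for good."""
    return items[max(0, len(items) - capacity):]


if __name__ == "__main__":
    store = [MemoryItem("user prefers tabs over spaces")]
    for _ in range(3):
        print(tier_for(store[0].temperature), store[0].temperature)
        cool(store)
```

Under `cool`, an item moves down through the tiers but stays in the store with a positive temperature, whereas `fifo_evict` loses the oldest entries permanently.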

Misconception 3: Memory Update = Append-Only Writing

OpenClaw’s memory updates are append-only: new memories are added after old ones, with no integration.

Hermes’ solution: Memory consolidation cycle. After each conversation, Hermes triggers a memory organization process: merging duplicate entries, updating outdated information, and establishing new associations. This is analogous to the human “sleep consolidation” process.
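
A consolidation pass of this kind can be sketched as a keyed merge in which newer facts replace older ones. The example assumes facts can be represented as (subject, attribute, value, timestamp) records; that schema, the `Fact` type, and the function names are illustrative assumptions rather than Hermes’ actual data model.

```python
from dataclasses import dataclass


@dataclass
class Fact:
    subject: str
    attribute: str
    value: str
    timestamp: int  # larger means more recent


def consolidate(store, new_facts):
    """Merge facts keyed by (subject, attribute); the newest value wins."""
    merged = dict(store)
    for fact in new_facts:
        key = (fact.subject, fact.attribute)
        current = merged.get(key)
        if current is None or fact.timestamp >= current.timestamp:
            merged[key] = fact  # deduplicates and replaces outdated values
    return merged


def associations(store):
    """Link each subject to the attributes known about it."""
    links = {}
    for subject, attribute in store:
        links.setdefault(subject, set()).add(attribute)
    return links


if __name__ == "__main__":
    conversation_facts = [
        Fact("user", "editor", "vim", 1),
        Fact("user", "editor", "vim", 2),      # duplicate
        Fact("user", "editor", "neovim", 3),   # supersedes the older value
        Fact("project", "language", "python", 1),
    ]
    store = consolidate({}, conversation_facts)
    print({key: fact.value for key, fact in store.items()})
    print(associations(store))
```

An append-only log would hold all four records and leave the reader to work out which editor is current. After consolidation, the store holds two entries, each with its latest value.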

The Significance of Local Execution

Ollama 0.21 officially supports Hermes Agent, which means this memory system can run locally on Mac or Linux. This brings two direct benefits:

- Zero trial-and-error cost: Local execution means developers can freely test different memory configurations without incurring API fees

- Data privacy assurance: Memory data is stored entirely on the local machine and never passes through third-party servers

Implications for Agent Developers

The memory design of Hermes Agent sends an important signal: the competitive focus of Agents is shifting from “model capability” to “systems engineering.” The same underlying model, paired with different memory architectures, can produce completely different user experiences.

For developers building Agent applications, the question worth considering is no longer “which model to use,” but rather “how to make the model remember what it should remember.”