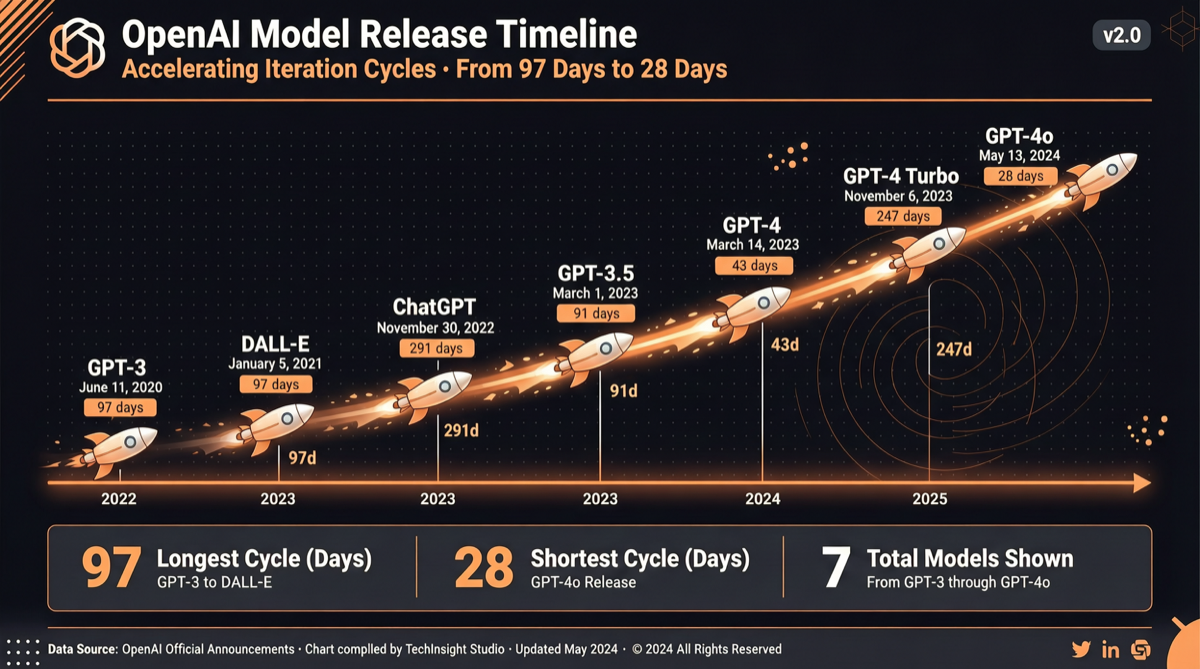

Core Conclusion

OpenAI’s release cadence has accelerated sharply. In the roughly eight and a half months (259 days) from GPT-5 to GPT-5.5, the interval between consecutive versions fell from 97 days to 49 days, about half, and two releases arrived only 28 or 29 days after their predecessor. This is not an accidental optimization but a strategic adjustment driven by competitive pressure.

Release Cycle Data

| Version | Release Date | Interval from Previous | Key Changes |
|---|---|---|---|
| GPT-5 | 2025-08-07 | n/a | Fifth-generation base model |
| GPT-5.1 | 2025-11-12 | 97 days | Enhanced reasoning capability |
| GPT-5.2 | 2025-12-11 | 29 days | Quick iteration fix |
| GPT-5.3 Codex | 2026-02-05 | 56 days | Coding capability specialization |
| GPT-5.4 | 2026-03-05 | 28 days | Shortest interval |
| GPT-5.5 | 2026-04-23 | 49 days | Strong Terminal-Bench performance |
| GPT-5.6 | Expected mid-June | ~50 days | Upcoming |
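
The interval column can be re-derived from the release dates. The following is a minimal sketch using only Python's standard library; the dates are copied from the table, while the function and variable names are illustrative choices, not part of the original analysis.

```python
from datetime import date

# Release dates copied from the table; GPT-5.6 is omitted because it has no confirmed date.
releases = [
    ("GPT-5", date(2025, 8, 7)),
    ("GPT-5.1", date(2025, 11, 12)),
    ("GPT-5.2", date(2025, 12, 11)),
    ("GPT-5.3 Codex", date(2026, 2, 5)),
    ("GPT-5.4", date(2026, 3, 5)),
    ("GPT-5.5", date(2026, 4, 23)),
]


def release_intervals(items):
    """Return (version, days since the previous release) for every release after the first."""
    return [
        (name, (day - prev_day).days)
        for (_, prev_day), (name, day) in zip(items, items[1:])
    ]


intervals = release_intervals(releases)
for name, days in intervals:
    print(f"{name}: {days} days")

gaps = [days for _, days in intervals]
print("total span:", sum(gaps), "days")
print("mean interval:", sum(gaps) / len(gaps), "days")
```

The computed gaps match the table (97, 29, 56, 28, 49), the total span is 259 days, and the mean interval is 51.8 days, which is in line with the ~50-day projection for GPT-5.6.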

Drivers Behind the Cycle Compression

1. Competitive Pressure

- Anthropic released 28 new features in Q1 2026, with Claude Opus 4.7 leading in multiple benchmarks

- Google is preparing Gemini 3.5 Pro, rumored for release around Google I/O (May 19)

- Among Chinese models, GLM 5.1 and Kimi K2.6 have reached the “entry tier,” narrowing the gap with the leading models

2. Infrastructure Maturity

- Training pipeline optimization has lifted iteration speed from “monthly” to “weekly”

- RLHF and Agent RL automation levels have improved

- Standardized evaluation systems have reduced bottlenecks in manual assessment

3. Changing Business Logic

- API revenue models require continuous feature updates to maintain customer stickiness

- Enterprise clients are starting to use “latest model” as a procurement criterion

- The pursuit by open-source models forces closed-source models to maintain iteration speed

Possible Windows for GPT-5.6

Scenario A: Mid-June release (baseline prediction)

- Following the ~50-day interval pattern

- Allows sufficient data collection time for GPT-5.5

Scenario B: Around May 19 Google I/O (accelerated prediction)

- If Google releases Gemini 3.5 Pro, OpenAI may accelerate to capture attention

- This would be a “defensive release” strategy

Scenario C: Around the AMD Advancing AI conference in July

- Aligned with hardware release schedules

- Showcasing GPT-5.6’s optimized performance on AMD chips
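
The date arithmetic behind Scenarios A and B can be checked with a short sketch. It assumes the GPT-5.5 release date from the table and that the May 19 Google I/O date refers to 2026; Scenario C is left out because the article gives no specific day in July. The function name is illustrative.

```python
from datetime import date, timedelta

GPT_5_5_RELEASE = date(2026, 4, 23)  # from the release table
GOOGLE_IO = date(2026, 5, 19)        # assumption: the May 19 date falls in 2026


def project_release(last_release, interval_days):
    """Project the next release date by adding a fixed interval to the last one."""
    return last_release + timedelta(days=interval_days)


scenario_a = project_release(GPT_5_5_RELEASE, 50)
days_to_io = (GOOGLE_IO - GPT_5_5_RELEASE).days

print("Scenario A date:", scenario_a.isoformat())
print("Scenario B interval:", days_to_io, "days")
```

A 50-day interval lands on 2026-06-12, consistent with "mid-June". A release at Google I/O would come only 26 days after GPT-5.5, shorter than the 28-day minimum observed so far, which is why Scenario B counts as the accelerated case.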

Impact on Developers

Technical level:

- Models are updating more frequently, so the cost of “chasing the latest” is rising

- Recommend building automated model switching mechanisms rather than manually adapting to each new version

- Watch for API compatibility changes — rapid iteration may introduce breaking changes
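
One way to approach automated model switching is to route by task rather than hard-coding a model name at each call site. The sketch below is a hypothetical illustration: the routing table, the model identifier strings, and the `resolve_model` helper are all assumptions made for this example, not a real provider API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRoute:
    primary: str
    fallback: str


# Hypothetical routing table. In practice this would likely live in a config file
# so that moving to a new model version does not require a code change.
ROUTES = {
    "chat": ModelRoute(primary="gpt-5.5", fallback="gpt-5.4"),
    "coding": ModelRoute(primary="gpt-5.3-codex", fallback="gpt-5.5"),
}


def resolve_model(task, available):
    """Pick the model for a task, using the fallback if the primary is not available."""
    route = ROUTES[task]
    if route.primary in available:
        return route.primary
    if route.fallback in available:
        return route.fallback
    raise LookupError(f"no configured model available for task {task!r}")


print(resolve_model("chat", {"gpt-5.5", "gpt-5.4"}))
print(resolve_model("chat", {"gpt-5.4"}))
```

With this shape, adopting a new version means editing one routing entry, and a deprecated or temporarily unavailable model degrades to the fallback instead of failing at every call site.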

Business level:

- If OpenAI releases a new version every 50 days, enterprise procurement decisions need to factor in “how soon will this model become obsolete”

- Consider using a model abstraction layer (such as Sim or LangChain) to reduce switching costs

- API pricing may adjust with version iterations — monitor cost changes

Industry Landscape Assessment

The compression of model release cycles means “model-as-a-service” competition has evolved from a performance race to a speed race. Whoever can translate research into product faster will gain the upper hand in the developer ecosystem.

Anthropic takes a “quality-first” approach: fewer features, each more refined. OpenAI takes a “speed-first” approach: rapid iteration in small, frequent steps. Google takes an “ecosystem integration” approach, embedding model capabilities into existing products such as Search, Cloud, and Android.

None of these three strategies is strictly superior, but the speed strategy has one clear advantage: in a field changing as fast as AI, speed itself is a moat.

Action Recommendations

- Don’t wait for GPT-5.6: GPT-5.5 is already a mature, production-ready version, so start using it now

- Build a model abstraction layer: Use tools like LangChain or LiteLLM to reduce model switching costs

- Monitor API changelogs: Rapid iteration can mean more breaking changes

- Consider a multi-model strategy: Don’t put all your eggs in one model’s basket — GLM 5.1, Kimi K2.6, and DeepSeek V4 Pro are all strong alternatives
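
The abstraction-layer and multi-model recommendations can be combined in a small fallback chain. The sketch below is a minimal illustration rather than the API of LangChain or LiteLLM: a backend is assumed to be any callable that maps a prompt to text, and the two backends shown are stand-ins that simulate an outage and a working provider.

```python
from typing import Callable, Sequence

# Assumption for this sketch: a backend is any callable mapping a prompt to a completion.
Backend = Callable[[str], str]


class AllBackendsFailed(RuntimeError):
    """Raised when every backend in the chain has raised an error."""


def complete_with_fallback(
    prompt: str, backends: Sequence[tuple[str, Backend]]
) -> tuple[str, str]:
    """Try each backend in order; return (backend name, completion) from the first success."""
    errors = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # broad on purpose: any provider error triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))


# Stand-in backends for illustration; real ones would wrap a provider SDK call.
def flaky_backend(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def echo_backend(prompt: str) -> str:
    return f"echo: {prompt}"


used, text = complete_with_fallback(
    "hello", [("primary", flaky_backend), ("secondary", echo_backend)]
)
print(used, text)
```

Because application code depends only on the callable signature, swapping in a new model version or a different vendor means changing the list of backends, not the call sites.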