Core Conclusion

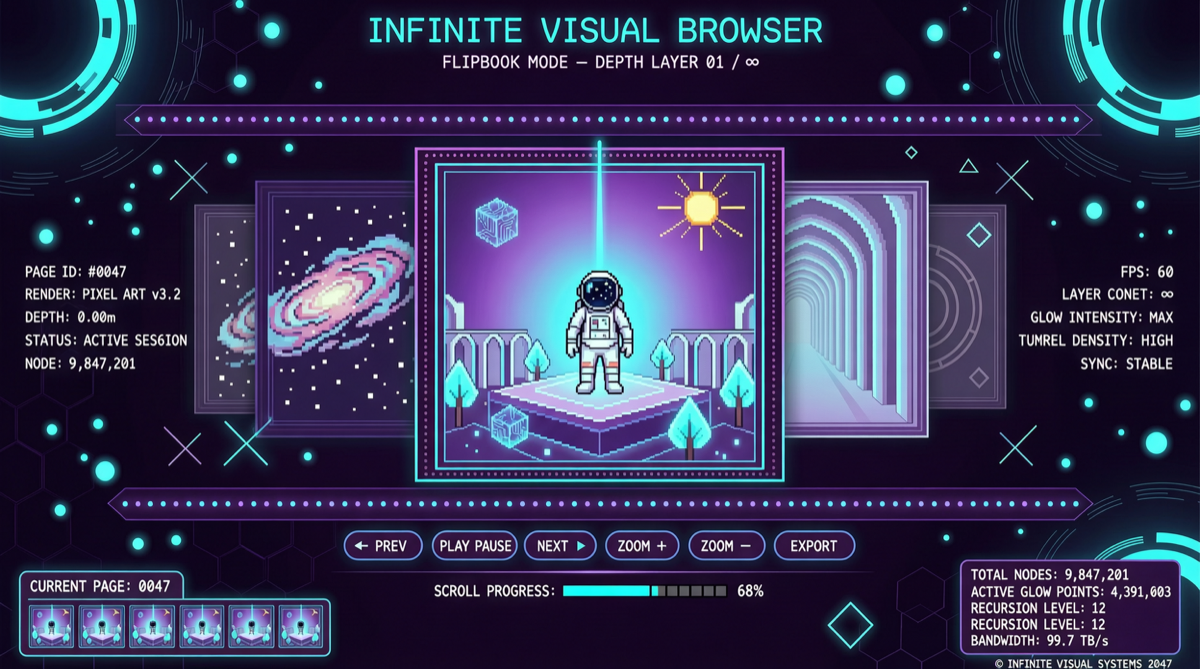

A project called Flipbook is quietly gaining traction in the AI community — it is not another ChatGPT wrapper, but an entirely new way of browsing information:

- Enter a search term, and the system generates a dynamic illustration pixel by pixel in real time

- Text is also made of pixels, not HTML/CSS

- Any area of the image is clickable, generating the next layer of content

- Like a living encyclopedia — every page turn reveals a newly generated visual

The team behind it consists of a Samsung engineer (formerly at OpenAI) and two partners, which suggests that big-tech talent is exploring new forms of AI interaction.

What Is This Concept

Imagine the traditional browser experience:

Search → Get search results list → Click link → Open HTML page → Read text/view images

Flipbook compresses the entire flow into:

Search → Generate visual → Click any area → Generate next layer → Infinite depth

This is not search; it is visual exploration.

Technical Breakdown

Pixel Generation vs HTML Rendering

Traditional web pages use HTML+CSS+JavaScript to build structured pages. Flipbook completely abandons this system:

| Dimension | Traditional Browser | Flipbook |
|---|---|---|
| Rendering | HTML/CSS | Pixel-level image generation |
| Navigation | Hyperlinks | Click any image area |
| Content Form | Text + images mixed | Pure visual illustration |
| Loading | Request-response | Real-time generation |
| Information Density | High (structured) | Low (visual) |

Infinite Layers

The key innovation of Flipbook is that every generated image is itself an entry point. Traditional web pages have a linear “article-link-article” structure. Flipbook has a “visual-click-new visual” network structure in which any region can lead to a new image, so the depth is theoretically unlimited.

This is essentially spatialized information browsing: you are not “turning pages” but “exploring a space.”
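The “visual-click-new visual” structure can be pictured as a tree whose nodes are created only when a region is clicked. The sketch below is a minimal illustration under stated assumptions, not Flipbook's actual implementation, which has not been published. The names `Frame`, `GRID`, `cell_for_click`, `fake_generate`, and `click` are all hypothetical, the grid-based click mapping is an assumption, and the generator is a stub that returns a text label instead of pixels.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

# Assumption: the image is split into a GRID x GRID set of clickable cells.
# A real system might map clicks to regions in a completely different way.
GRID = 4

Cell = Tuple[int, int]


@dataclass
class Frame:
    """One generated visual. Children are created lazily, on first click."""
    prompt: str
    depth: int = 0
    children: Dict[Cell, "Frame"] = field(default_factory=dict)


def cell_for_click(x: float, y: float, width: int, height: int) -> Cell:
    """Map a pixel coordinate to a (row, col) grid cell, clamped to the grid."""
    col = min(int(x / width * GRID), GRID - 1)
    row = min(int(y / height * GRID), GRID - 1)
    return row, col


def fake_generate(parent: Frame, cell: Cell) -> str:
    """Stand-in for an image model: returns a label, not an image."""
    return f"{parent.prompt} > region{cell}"


def click(
    frame: Frame,
    x: float,
    y: float,
    width: int = 1024,
    height: int = 1024,
    generate: Callable[[Frame, Cell], str] = fake_generate,
) -> Frame:
    """Return the child frame for the clicked region, generating it if needed."""
    cell = cell_for_click(x, y, width, height)
    if cell not in frame.children:
        frame.children[cell] = Frame(generate(frame, cell), frame.depth + 1)
    return frame.children[cell]


root = Frame("volcano")
first = click(root, 100, 900)    # bottom-left region of the root image
second = click(first, 1023, 0)   # top-right region of the next layer
print(second.depth, "|", second.prompt)
```

Because children are stored per cell, clicking the same region twice returns the same frame in this sketch. Whether a real system would cache layers or regenerate them on every visit is a design choice the source does not describe.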

Comparison with Existing Solutions

| Project | Core Idea | Technical Route |
|---|---|---|
| Flipbook | Pixel generation + infinite layer visual navigation | Generative model + spatial mapping |
| Lingguang App | 3D world model + immersive exploration | 3D rendering + AI |
| Google Antigravity | 3D knowledge graph visualization | Voice/gesture + 3D rendering |
| Traditional Search | Text list + hyperlinks | HTML/HTTP |

What sets Flipbook apart: it relies on neither a 3D engine nor voice or gesture input. Pixel generation plus clicking is enough to deliver immersive browsing.

Why It Matters

1. Another Possibility for Interaction Paradigms

AI interaction is not limited to “conversation” and “agent execution.” Flipbook demonstrates interaction through visual exploration: users do not need to formulate precise questions; they just click areas of interest.

2. Signal of Ex-OpenAI Talent Flow

One team member came from OpenAI and now works at Samsung. This may reflect two trends:

- Big-tech AI talent is moving toward the intersection of hardware and AI

- Samsung’s strategy in AI wearables covers not just hardware but also innovation at the interaction layer

3. Open Source / Community Potential

Flipbook is currently a proof of concept, but it lends itself to open-source and community development. If released, it could spawn a wave of “visual browser” derivative projects.

Limitations and Challenges

- Low information density: purely visual images are poorly suited to precise information retrieval (such as data lookup or code reading)

- Poor controllability: users cannot precisely control the generated content, and the output is highly random

- Performance bottleneck: real-time pixel generation demands substantial computing power, and the mobile experience is unverified

Action Recommendations

- Designers / interaction researchers: this is a new experiment in information architecture for the AI era and is worth tracking

- AI product teams: Flipbook suggests there is still ample room to explore “non-conversational AI interaction”

- General users: it is currently more of a concept demo, and practical usability remains to be seen

Flipbook represents an overlooked possibility: AI does not have to be a chat box — it can be a door to an infinite visual world.