Core Data

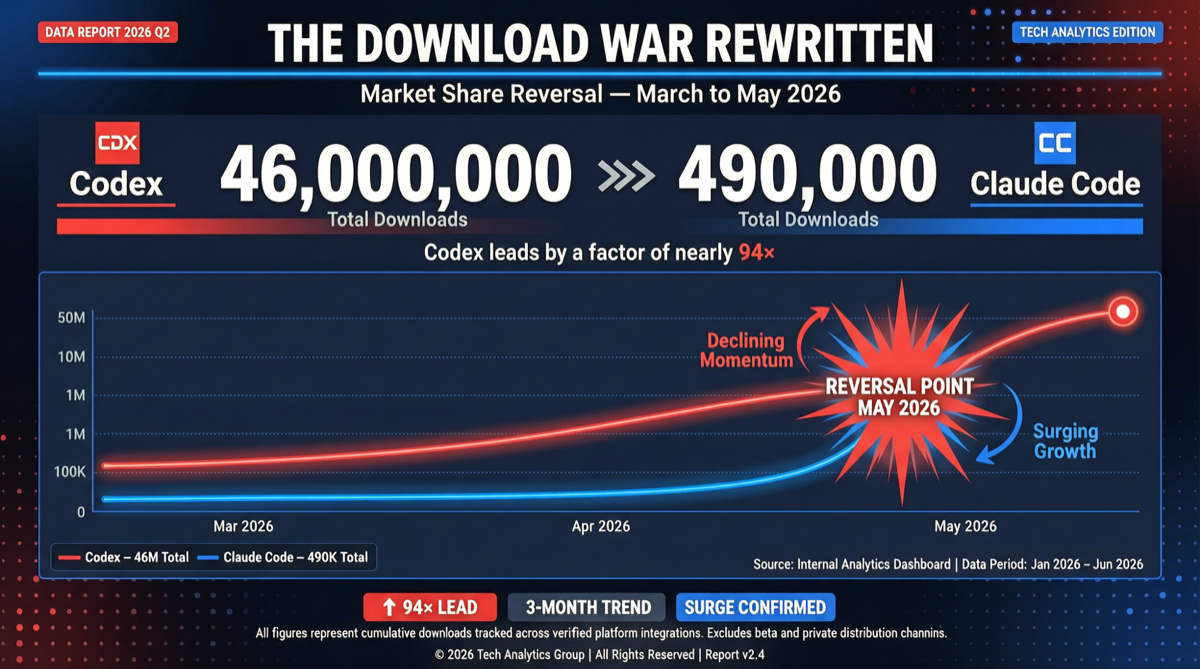

46 million vs 490 thousand.

A gap of nearly 100 times.

These are the latest weekly download figures for OpenAI’s Codex (46 million) and Anthropic’s Claude Code (490 thousand), as of early May 2026.

Just weeks ago, the situation was completely reversed:

- Through March and into early April, Claude Code held at roughly 10 to 15 million weekly downloads

- Codex trailed well behind, starting March at around 3 million

The two curves crossed on April 30, 2026.

Timeline

| Time | Claude Code Weekly Downloads | Codex Weekly Downloads | Key Event |
|---|---|---|---|
| Early March | ~12M | ~3M | Claude Code continues to lead |
| Mid-March | ~15M | ~5M | Claude Code momentum strong |
| Early April | ~10M | ~8M | Gap begins narrowing |
| April 30 | — | — | Crossover point |
| Early May | ~490K | ~46M | Codex surges nearly 100x ahead |
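
The "nearly 100 times" figure and the position of the crossover can be checked directly from the table. The following is a minimal sketch that uses only the approximate values listed above. The April 30 row is left out because the table gives no numbers for it, and the function names are illustrative.

```python
# Approximate weekly downloads copied from the timeline table:
# (period, Claude Code, Codex). The April 30 row has no figures and is omitted.
WEEKLY = [
    ("Early March", 12_000_000, 3_000_000),
    ("Mid-March", 15_000_000, 5_000_000),
    ("Early April", 10_000_000, 8_000_000),
    ("Early May", 490_000, 46_000_000),
]


def codex_to_claude_ratio(claude: int, codex: int) -> float:
    """Return Codex weekly downloads divided by Claude Code weekly downloads."""
    return codex / claude


def crossover_interval(rows):
    """Return the (before, after) period labels between which Codex overtakes
    Claude Code, or None if the lead never changes hands."""
    for (prev_label, prev_cc, prev_cx), (label, cc, cx) in zip(rows, rows[1:]):
        if prev_cx <= prev_cc and cx > cc:
            return prev_label, label
    return None


if __name__ == "__main__":
    for label, claude, codex in WEEKLY:
        ratio = codex_to_claude_ratio(claude, codex)
        print(f"{label}: Codex / Claude Code = {ratio:.2f}x")
    print("Lead changes between:", crossover_interval(WEEKLY))
```

On these numbers the early-May ratio is about 94x, and the lead changes hands between the early-April and early-May rows, which is consistent with the April 30 crossover.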

Why the Reversal Happened

Feedback from several developer communities points to three factors behind the reversal:

1. GPT-5.5’s Coding Capability Leap

Multiple developers shared their experiences on X:

“Same project. Claude Code + Opus 4.7 feels like it’s phoning it in — fix A, break B, wasting tokens and time going back and forth. Switch to GPT 5.5, and most of the time it gets the feature right on the first try, no rework.”

By these accounts, GPT-5.5 outperforms Opus 4.7 on complex refactoring, debugging, and real-project tasks. This is a hard capability gap that neither ecosystem nor pricing can offset.

2. Claude Code’s Strategic Wobble

Anthropic adjusted Claude Code’s pricing and plan packaging several times in the first half of 2026:

- Removed Claude Code from the $20 Pro plan

- Later reinstated it in the Pro plan

This pricing instability drove some developers away.

3. Codex’s Ecosystem Integration

Codex isn’t just a CLI tool. It offers:

- CLI (command line)

- App (desktop application)

- Web (web version)

- IDE plugins (VS Code, JetBrains)

Meanwhile, Codex’s integration with open-source tools like OpenClaw and OpenClaude further lowered the barrier to entry.

Landscape Assessment

This reversal is about more than download numbers. It reflects a deeper trend: competition among AI coding tools is shifting from a “feature race” to an “experience race.”

| Dimension | Claude Code | Codex |
|---|---|---|
| Model capability | Opus 4.7 | GPT-5.5 |
| Pricing strategy | Multiple adjustments | Relatively stable |
| Deployment | CLI-focused | CLI + App + Web + IDE |
| Open-source ecosystem | Limited | OpenClaw/OpenClaude etc. |
| Developer community feedback | “Phoning it in” | “Gets it right first time” |

Action Recommendations

If you’re choosing an AI coding tool:

- Choose Codex: If task-completion quality is your priority, you need multiple deployment options, or you already have a ChatGPT subscription

- Choose Claude Code: If you’re deeply embedded in the Anthropic ecosystem, or have hard requirements for Claude’s security and controllability

- Use both: Opus 4.7 for planning and review, GPT-5.5 for execution. Dual-model workflows are becoming best practice
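
The "use both" option amounts to a plan, execute, review loop. The sketch below shows one possible shape for that loop under stated assumptions: the three callables are hypothetical stand-ins for whatever client code talks to each model, none of them is a real SDK function, and the `max_rounds` cap and feedback-string convention are choices made for illustration.

```python
from typing import Callable, Optional

# Hypothetical interfaces, not taken from any vendor SDK:
Planner = Callable[[str], str]        # task -> plan (e.g. backed by Opus 4.7)
Executor = Callable[[str, str], str]  # (plan, feedback) -> patch (e.g. GPT-5.5)
Reviewer = Callable[[str, str], str]  # (plan, patch) -> feedback, "" if approved


def dual_model_run(task: str, plan: Planner, execute: Executor,
                   review: Reviewer, max_rounds: int = 3) -> Optional[str]:
    """Plan once, then alternate execution and review until the reviewer
    returns empty feedback or the round limit is reached."""
    current_plan = plan(task)
    feedback = ""
    for _ in range(max_rounds):
        patch = execute(current_plan, feedback)
        feedback = review(current_plan, patch)
        if not feedback:
            return patch  # reviewer approved this patch
    return None  # no approved patch within max_rounds


# Stub models so the sketch runs without any network access.
def stub_plan(task: str) -> str:
    return f"plan for {task}"


def stub_execute(plan: str, feedback: str) -> str:
    return plan + ("+fixed" if feedback else "")


def stub_review(plan: str, patch: str) -> str:
    return "" if patch.endswith("+fixed") else "needs fix"


if __name__ == "__main__":
    print(dual_model_run("add login", stub_plan, stub_execute, stub_review))
```

Keeping the models behind plain callables means either side can be swapped without touching the loop, which matters if pricing or capability shifts again.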

Bottom line: 46 million vs 490K isn’t the endpoint. It marks a new phase in AI coding tool competition, one where model capability and developer experience are replacing marketing and ecosystem lock-in as the deciding factors.