Core Discovery

cocoindex-io/cocoindex hit the GitHub Trending list for Python this week, gaining 8,000+ stars. Its positioning sets it apart: it is not another Agent orchestration framework but an incremental computing engine designed specifically for long-running Agent tasks.

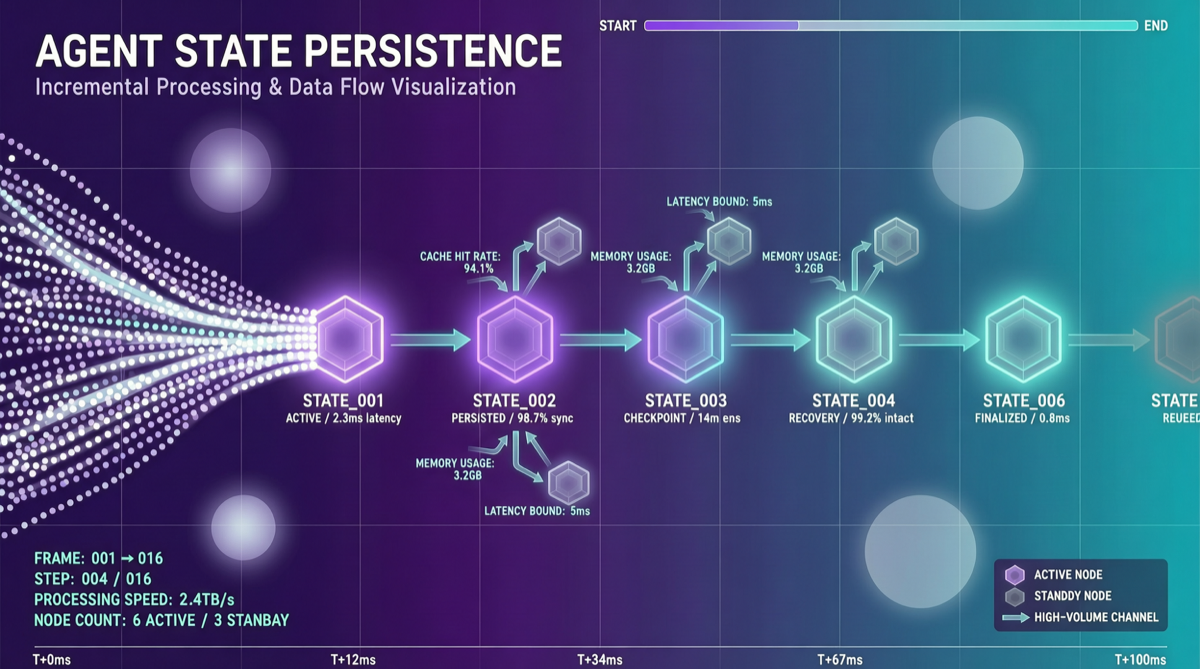

The project’s tagline names the pain point directly: “Incremental engine for long horizon agents.” The goal is to persist Agent state and apply incremental updates over extended time spans.

Why Long-Horizon Agents Are Hard

Current Agent frameworks (LangChain, CrewAI, AutoGen, etc.) perform well on short-cycle tasks (Q&A or simple tool calls that finish within minutes), but face three core challenges in long-cycle scenarios:

Challenge 1: Context Loss

After an Agent runs for 30 minutes, the LLM’s context window may already be filled with intermediate results. The traditional approach is to truncate or summarize conversation history, but this leads to irreversible loss of critical information.

Challenge 2: Irrecoverable State

If the Agent process is interrupted by a network disconnection, a server restart, or token exhaustion, the entire reasoning state is lost and the task must restart from scratch.
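To make the recovery problem concrete, here is a minimal sketch of step-level checkpointing in plain Python. It does not use cocoindex: `save_checkpoint`, `load_checkpoint`, and `run_agent` are hypothetical names, and it assumes each step's result is JSON-serializable.

```python
import json
import os
import tempfile
from pathlib import Path

# Hypothetical checkpoint helpers for illustration; these are NOT cocoindex APIs.

def save_checkpoint(path: Path, state: dict) -> None:
    """Write state to a temp file, then rename it over the target."""
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)  # atomic rename on POSIX filesystems

def load_checkpoint(path: Path) -> dict:
    """Return the last saved state, or a fresh state if none exists."""
    if path.exists():
        return json.loads(path.read_text())
    return {"next_step": 0, "results": []}

def run_agent(steps, path: Path) -> dict:
    """Run steps in order, checkpointing after each so a restart resumes mid-task."""
    state = load_checkpoint(path)
    for i in range(state["next_step"], len(steps)):
        # Each step receives the results of all earlier steps.
        state["results"].append(steps[i](state["results"]))
        state["next_step"] = i + 1
        save_checkpoint(path, state)
    return state
```

Writing to a temporary file and then calling `os.replace` means a crash during the write leaves the previous checkpoint intact rather than a half-written file; after a restart, `run_agent` skips every step that already completed.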

Challenge 3: Redundant Computation

Long-cycle tasks typically involve repeated queries and analysis of the same dataset. Without incremental caching, Agents repeatedly execute the same sub-tasks, wasting tokens and time.
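One common way to avoid repeated sub-tasks is content-addressed memoization: hash the task name together with its input, and reuse the stored result on a hit. The sketch below is a hypothetical in-memory version (`SubtaskCache` is not a cocoindex class); a production cache would also persist to disk and track which upstream data each result depends on.

```python
import hashlib
import json
from typing import Any, Callable

class SubtaskCache:
    """Hypothetical content-addressed cache: identical (task, input) pairs run once."""

    def __init__(self) -> None:
        self._store: dict[str, Any] = {}
        self.misses = 0  # number of times the underlying function actually ran

    @staticmethod
    def _key(task_name: str, payload: Any) -> str:
        # Canonical JSON (sorted keys) so logically equal inputs hash identically.
        blob = json.dumps([task_name, payload], sort_keys=True).encode()
        return hashlib.sha256(blob).hexdigest()

    def run(self, task_name: str, fn: Callable[[Any], Any], payload: Any) -> Any:
        key = self._key(task_name, payload)
        if key not in self._store:
            self.misses += 1  # only a miss pays for the expensive call
            self._store[key] = fn(payload)
        return self._store[key]
```

For example, asking the cache to summarize `{"id": 1, "text": ...}` twice, even with the dictionary keys in a different order, invokes the summarizer only once.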

cocoindex’s Solution

cocoindex’s core approach borrows the incremental computing paradigm from database and stream processing:

| Concept | Traditional Agent | cocoindex Agent |
|---|---|---|
| State Management | In-memory conversation history | Persisted incremental state tree |
| Interruption Recovery | Loses all state | Recovers from latest checkpoint |
| Redundant Computation | Re-executes every time | Incremental updates, only processes changes |
| Data Pipeline | Hardcoded within Agent | Declarative pipeline definitions |

Key Architecture Features

- Declarative Pipelines: Define data processing flows in Python code; cocoindex tracks the dependencies between steps automatically

- Incremental Execution: When input data changes, only the steps that depend on it re-execute

- State Persistence: Agent intermediate states can persist to disk, supporting cross-session recovery

- Long-Context Friendly: With an incremental state tree, Agents don’t need to load the entire history into the LLM context
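The first two features can be illustrated with a toy engine: each step declares which named inputs it reads, the engine runs steps in dependency order, and a step re-executes only when the fingerprint of its inputs changes. This is a hypothetical sketch of the general technique, not cocoindex's actual API. `Pipeline`, `step`, and `fingerprint` are illustrative names, values are assumed to be JSON-serializable, and persistence is omitted for brevity.

```python
import hashlib
import json
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Any, Callable

def fingerprint(value: Any) -> str:
    """Stable hash of a JSON-serializable value."""
    return hashlib.sha256(json.dumps(value, sort_keys=True).encode()).hexdigest()

class Pipeline:
    """Hypothetical declarative pipeline (illustrative only, not the cocoindex API)."""

    def __init__(self) -> None:
        self.steps: dict[str, tuple[Callable[..., Any], tuple[str, ...]]] = {}
        self.values: dict[str, Any] = {}
        self.seen: dict[str, str] = {}   # step name -> fingerprint of inputs at last run
        self.executed: list[str] = []    # steps that actually ran in the latest call

    def step(self, name: str, *deps: str):
        """Decorator that registers a step and the names it reads from."""
        def register(fn: Callable[..., Any]) -> Callable[..., Any]:
            self.steps[name] = (fn, deps)
            return fn
        return register

    def run(self, sources: dict[str, Any]) -> dict[str, Any]:
        self.values.update(sources)
        self.executed = []
        graph = {name: set(deps) for name, (_, deps) in self.steps.items()}
        for name in TopologicalSorter(graph).static_order():
            if name not in self.steps:
                continue  # a source input, not a computed step
            fn, deps = self.steps[name]
            inputs = [self.values[d] for d in deps]
            fp = fingerprint(inputs)
            if self.seen.get(name) != fp:  # first run or inputs changed: recompute
                self.values[name] = fn(*inputs)
                self.seen[name] = fp
                self.executed.append(name)
        return self.values
```

Because each step compares the fingerprint of its inputs rather than a "dirty" flag, a step that is recomputed but yields an identical output does not trigger further recomputation downstream.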

Typical Use Cases

| Scenario | Traditional Approach Problem | cocoindex Advantage |
|---|---|---|
| Continuous code review | Each PR review starts from empty state | Maintains incremental understanding of codebase, new changes only analyze diffs |
| Data pipeline monitoring | Periodic full data quality checks | Incremental monitoring, only processes new/changed data |
| Long-cycle research tasks | Hours-long research sessions lose progress on interruption | State persistence, can pause and resume anytime |
| Continuous knowledge base updates | Full rebuild indexing is costly | Incremental index updates, only processes new content |
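The knowledge-base row comes down to change detection at the document level. Below is a hypothetical sketch (the class and its chunking stand-in are illustrative, not part of cocoindex) that keeps one content hash per document and touches only entries that were added, edited, or deleted since the last sync.

```python
import hashlib
from dataclasses import dataclass, field

@dataclass
class IncrementalIndex:
    """Hypothetical incremental index: re-processes only new or edited docs, drops deleted ones."""
    doc_hashes: dict[str, str] = field(default_factory=dict)
    entries: dict[str, list[str]] = field(default_factory=dict)  # doc id -> indexed chunks

    def sync(self, docs: dict[str, str]) -> dict[str, list[str]]:
        report: dict[str, list[str]] = {"added": [], "updated": [], "deleted": []}
        for doc_id, text in docs.items():
            digest = hashlib.sha256(text.encode()).hexdigest()
            old = self.doc_hashes.get(doc_id)
            if old == digest:
                continue  # unchanged: skip the expensive chunk/embed step
            self.entries[doc_id] = self._chunk(text)
            self.doc_hashes[doc_id] = digest
            report["updated" if old is not None else "added"].append(doc_id)
        # Anything indexed before but absent from this snapshot was deleted upstream.
        for doc_id in sorted(set(self.doc_hashes) - set(docs)):
            del self.doc_hashes[doc_id]
            del self.entries[doc_id]
            report["deleted"].append(doc_id)
        return report

    @staticmethod
    def _chunk(text: str, size: int = 20) -> list[str]:
        # Stand-in for chunking + embedding; a real index would call a model here.
        return [text[i:i + size] for i in range(0, len(text), size)]
```

The cost of each sync is then proportional to the number of changed documents rather than the size of the whole corpus, apart from the cheap hashing pass.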

Relationship with Existing Frameworks

cocoindex is not a replacement for LangChain or CrewAI but an underlying engine that sits beneath them:

┌──────────────────────────────────────────┐
│ LangChain / CrewAI (Orchestration Layer) │
│ Define Agent roles, tasks, workflows     │
├──────────────────────────────────────────┤
│ cocoindex (Incremental Engine Layer)     │
│ State persistence, incremental computing │
│ Recovery checkpoints                     │
├──────────────────────────────────────────┤
│ LLM API (Model Layer)                    │
│ GPT-5.5 / Claude / Qwen etc.             │
└──────────────────────────────────────────┘

This layered architecture allows cocoindex to work with any Agent framework: it handles infrastructure concerns (persistence, incremental recomputation, recovery) that the orchestration layer does not address.

Landscape Assessment

Long-horizon Agents are one of the key trends of 2026. As Agents evolve from “Q&A assistants” to “autonomous workers” (writing code, doing research, managing projects), the ability to run for extended periods has shifted from a nice-to-have to a necessity.

cocoindex’s emergence signals that Agent infrastructure is moving from the “rapid prototyping” phase to the “production-ready” phase. Incremental computing, state persistence, and checkpoint recovery are mature techniques in databases and stream processing, and they are now being introduced into the Agent ecosystem.

Action Items

- Evaluate whether your Agent needs long-horizon capability: If your Agent runs for more than 10 minutes or needs to work across multiple sessions, cocoindex is worth evaluating

- Integration testing with existing frameworks: If you’re already using LangChain/CrewAI, start by introducing cocoindex for incremental state management in one part of your pipeline and measure the results before expanding

- Pay attention to checkpoint strategy: cocoindex’s effectiveness largely depends on checkpoint frequency and granularity. Checkpointing too often adds overhead, while checkpointing too rarely increases the amount of work lost and redone on recovery
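One simple way to express that trade-off is a hybrid policy that checkpoints after a fixed number of steps or a fixed wall-clock interval, whichever comes first. The sketch below is hypothetical (`CheckpointPolicy`, `every_steps`, and `every_seconds` are illustrative names, not cocoindex settings); the clock is injectable so the policy can be tested deterministically.

```python
import time
from typing import Callable

class CheckpointPolicy:
    """Hypothetical policy: checkpoint after N steps or T seconds, whichever comes first."""

    def __init__(self, every_steps: int, every_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.every_steps = every_steps
        self.every_seconds = every_seconds
        self.clock = clock
        self._steps_since = 0   # completed steps since the last checkpoint
        self._last = clock()    # time of the last checkpoint (or of creation)

    def should_checkpoint(self) -> bool:
        """Call once per completed step; returns True when a checkpoint is due."""
        self._steps_since += 1
        now = self.clock()
        if self._steps_since >= self.every_steps or now - self._last >= self.every_seconds:
            self._steps_since = 0
            self._last = now
            return True
        return False
```

Lowering `every_steps` limits the completed work lost on a crash to fewer steps at the cost of more writes, while `every_seconds` caps the loss when individual steps are slow.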