What Happened

In 2026, with Agent frameworks such as LangChain, CrewAI, and AutoGen already mature, a counter-intuitive open-source tutorial is taking off on GitHub: it teaches developers to build AI agents from scratch, without any framework.

The project has gained 1,500+ stars, and community feedback reveals a growing unease among developers about framework “black boxing”: when a framework handles tool calling, state management, and multi-agent coordination for you, you no longer know what your agent is actually doing or how to debug it when things break.

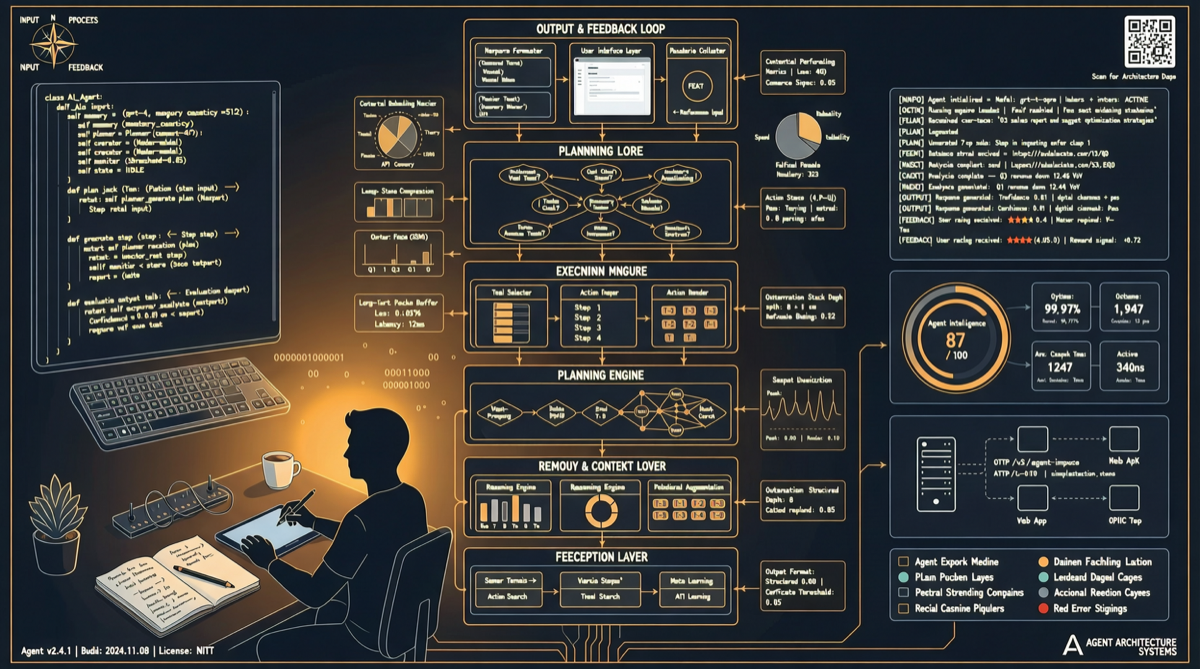

Progressive Architecture of the Tutorial

The tutorial breaks Agent development into clear phases, introducing only one new concept per stage:

Phase 1: Single Agent Fundamentals (Steps 0-6)

| Step | Content | Core Knowledge |
|---|---|---|
| 0 | Basic chat loop | LLM API calls, message formats |
| 1 | System Prompt engineering | Role definition, behavioral constraints |
| 2 | Tool definition and calling | Function calling schema |
| 3 | Tool execution layer | Parsing LLM output, executing tools, handling results |
| 4 | Loop control | Tool call → result feedback → next round loop logic |
| 5 | Error handling | Retry and fallback on tool call failures |
| 6 | Context management | Token counting, context truncation strategies |
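Steps 2 through 5 in the table can be sketched in plain Python. This is a minimal illustration, not the tutorial's own code: `fake_llm` is a stand-in for a real LLM API call, the message and tool-call dictionary shapes are hypothetical rather than any provider's schema, and `TOOLS`, `call_tool_with_retry`, and `run_agent` are names invented for this example.

```python
import json

# Hypothetical tool registry: tool name -> plain Python function.
def add(a: float, b: float) -> float:
    return a + b

TOOLS = {"add": add}

def fake_llm(messages: list[dict]) -> dict:
    """Stand-in for a real LLM API call (an assumption of this sketch).

    It requests the `add` tool once, then answers using the tool result.
    """
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "assistant", "content": f"The answer is {last['content']}."}
    return {
        "role": "assistant",
        "content": None,
        "tool_call": {"name": "add", "arguments": json.dumps({"a": 2, "b": 3})},
    }

def call_tool_with_retry(name: str, raw_args: str, max_retries: int = 2) -> str:
    """Steps 3 and 5: parse the arguments, run the tool, retry, then fall back."""
    last_error = "unknown error"
    for _ in range(max_retries + 1):
        try:
            args = json.loads(raw_args)
            return json.dumps(TOOLS[name](**args))
        except Exception as exc:  # broad on purpose: every failure is reported back
            last_error = f"{type(exc).__name__}: {exc}"
    # Fallback: hand the error to the model as text so it can react to it.
    return f"tool error: {last_error}"

def run_agent(user_input: str, llm=fake_llm, max_turns: int = 5) -> str:
    """Step 4: tool call, result fed back, next round, bounded by a turn limit."""
    messages = [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": user_input},
    ]
    for _ in range(max_turns):
        reply = llm(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # no tool requested: this is the final answer
        result = call_tool_with_retry(call["name"], call["arguments"])
        messages.append({"role": "tool", "content": result})
    return "stopped: turn limit reached"
```

The turn limit is the piece that is easiest to forget: without it, a model that keeps requesting tools would loop forever. Retrying only helps with transient failures; a deterministic error such as an unknown tool name simply falls through to the fallback message.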

Phase 2: Memory System (Steps 7-10)

This phase introduces implementations of short-term memory (conversation history) and long-term memory (vector storage). The tutorial demonstrates how to build a RAG pipeline without framework dependencies: from text chunking and vectorization to similarity retrieval, every step is transparent.
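The three retrieval stages named above (chunking, vectorization, similarity retrieval) fit in a few standard-library functions. This is a hedged sketch under loud assumptions: `embed` is a toy bag-of-words counter standing in for a real embedding model, word-based chunking is one of many possible strategies, and the function names are hypothetical rather than taken from the tutorial.

```python
import math
import re
from collections import Counter

def chunk_text(text: str, size: int = 8, overlap: int = 2) -> list[str]:
    """Split text into chunks of `size` words, each sharing `overlap` words with the previous one."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    # Stop early enough that the last chunk is not fully contained in the previous one.
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]

def embed(text: str) -> Counter:
    """Toy vectorization: lowercase term counts (an assumption, not a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]
```

In a real pipeline only `embed` would change (to a call into an embedding model), and the sorted scan in `retrieve` would give way to a vector index once the corpus grows; the overall shape stays the same.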

Phase 3: Multi-Agent Coordination (Steps 11+)

The advanced section shows how to scale from a single Agent to multi-Agent systems:

- Role assignment: Different Agents take different responsibilities (planner, executor, reviewer)

- Message passing: Communication protocol design between Agents

- Conflict resolution: Arbitration mechanisms when multiple Agents produce contradictory outputs
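The three ideas in the list above can be illustrated without any framework. The sketch below is an assumption-heavy toy: each Agent is a plain function standing in for an LLM-backed agent, `Message` is a hypothetical message format, and majority voting with a priority tie-break is just one possible arbitration mechanism, not necessarily the one the tutorial uses.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass(frozen=True)
class Message:
    """Hypothetical message format: who sent it, who should handle it, and the payload."""
    sender: str
    recipient: str
    content: str

# Role assignment: each Agent is a function from an incoming message to a reply.
def planner(msg: Message) -> Message:
    return Message("planner", "executor", f"plan: {msg.content}")

def executor(msg: Message) -> Message:
    return Message("executor", "reviewer", f"done: {msg.content}")

def reviewer(msg: Message) -> Message:
    verdict = "approve" if msg.content.startswith("done:") else "reject"
    return Message("reviewer", "user", verdict)

AGENTS = {"planner": planner, "executor": executor, "reviewer": reviewer}

def run_pipeline(task: str, max_hops: int = 10) -> list[Message]:
    """Message passing: route by recipient until a message leaves the Agent set."""
    msg = Message("user", "planner", task)
    log = [msg]
    for _ in range(max_hops):
        if msg.recipient not in AGENTS:
            break
        msg = AGENTS[msg.recipient](msg)
        log.append(msg)
    return log

def arbitrate(outputs: dict[str, str], priority: list[str]) -> str:
    """Conflict resolution: majority vote; ties go to the highest-priority Agent."""
    if not outputs:
        raise ValueError("no outputs to arbitrate")
    counts = Counter(outputs.values())
    best = max(counts.values())
    tied = {answer for answer, n in counts.items() if n == best}
    for agent in priority:
        if outputs.get(agent) in tied:
            return outputs[agent]
    raise ValueError("no agent in `priority` produced a leading answer")
```

Keeping the full message log is what makes this style easy to debug: every hop between Agents is an ordinary value you can print or assert on.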

Why “No Framework” Is Going Viral

The Hidden Costs of Frameworks

The 2026 Agent framework ecosystem is highly mature, but maturity has brought problems:

- Debugging difficulty: When a LangChain chain fails, the error message often points into framework internals rather than your own logic

- Performance overhead: Framework abstraction layers can add serialization/deserialization costs that a hand-written path would not pay

- Vendor lock-in: Systems built on specific frameworks have extremely high migration costs

- Learning curve: Mastering LangChain/CrewAI DSL syntax is itself a skill investment

Three Irreplaceable Values of Hand-Coding Agents

| Value | Description |
|---|---|
| Full Control | You know what every line of code does; debugging doesn’t require guessing framework behavior |
| Optimal Performance | No framework overhead; can be optimized to the extreme for specific scenarios |
| Architectural Flexibility | Not constrained by framework design philosophy; can mix and match different patterns |

Framework vs. Hand-Coding Decision Matrix

| Scenario | Recommended Approach | Reason |
|---|---|---|
| PoC / Rapid validation | LangChain / CrewAI | Fast development, flexible prototype iteration |
| Production environment | Hand-code core + framework assist | Core paths stay under your control; peripheral functions use frameworks |
| High-concurrency services | Hand-code | Framework performance overhead is unacceptable |
| Multi-Agent complex orchestration | CrewAI + custom | Hand-coding multi-Agent orchestration costs too much |
| Learning/teaching | From scratch | Best path to understanding the underlying principles |

How to Use This

This tutorial is best suited for two types of developers:

- Developers who have used LangChain/CrewAI but want to understand the underlying principles: Use the tutorial as a “reverse engineering” reference to see what frameworks are actually doing underneath

- Engineers building high-performance Agent systems for production: Based on the tutorial’s architecture, customize and optimize for your specific business scenarios

A practical recommendation: Don’t choose one or the other. Use frameworks for rapid prototyping to validate ideas, then rewrite core paths using the tutorial’s approach once the solution is proven, keeping frameworks only for non-critical auxiliary functions.
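One way to keep that later rewrite cheap is to put the core path behind a small interface from the first prototype onward. The sketch below only illustrates the idea: `AgentCore`, `PrototypeCore`, `HandCodedCore`, and `handle_request` are hypothetical names, and `PrototypeCore` merely stands in for framework-backed code (no real framework is imported).

```python
from typing import Protocol

class AgentCore(Protocol):
    """The only surface the rest of the application is allowed to depend on."""
    def run(self, task: str) -> str: ...

class PrototypeCore:
    """Stands in for a framework-backed prototype (assumption: no real framework here)."""
    def run(self, task: str) -> str:
        return f"[prototype] {task}"

class HandCodedCore:
    """Stands in for the hand-written core path that later replaces the prototype."""
    def run(self, task: str) -> str:
        return f"[hand-coded] {task}"

def handle_request(core: AgentCore, task: str) -> str:
    # Callers see only AgentCore, so swapping the implementation does not touch them.
    return core.run(task.strip())
```

Swapping `PrototypeCore()` for `HandCodedCore()` at the call site is then the whole migration from the caller's point of view.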