AI can no longer pretend to be human, at least not in the Chinese market: this red line officially takes effect on July 15.

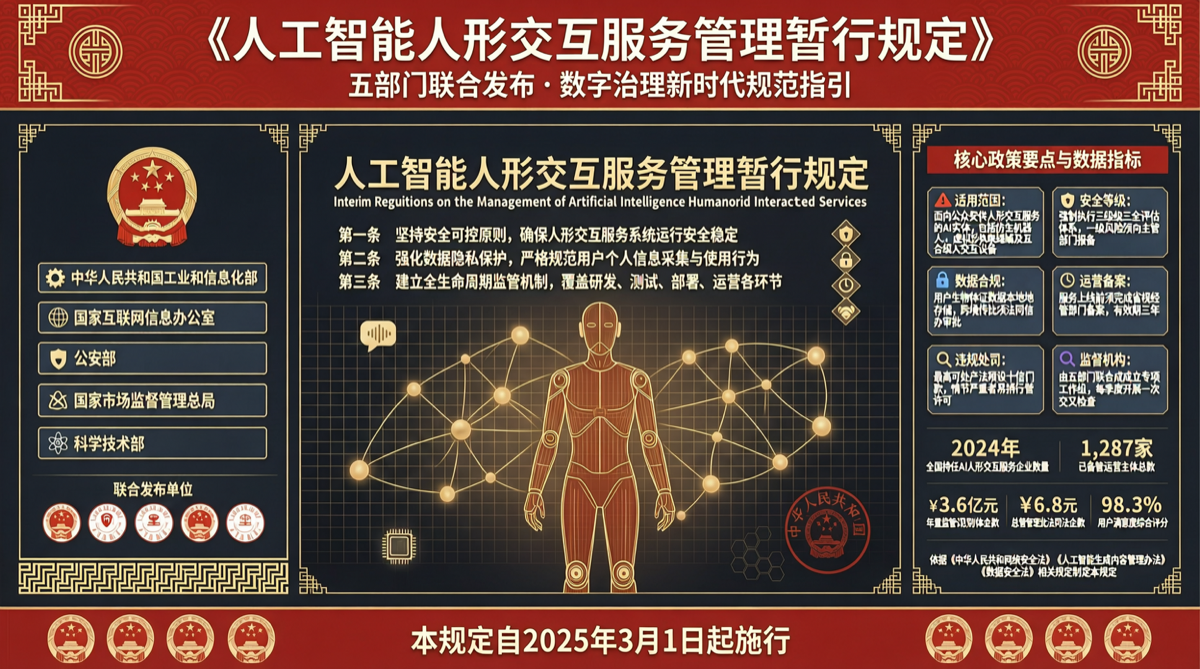

On April 10, five departments (the Cyberspace Administration of China, the National Development and Reform Commission, the Ministry of Industry and Information Technology, the Ministry of Public Security, and the State Administration for Market Regulation) jointly published the "Interim Measures for the Management of AI Humanoid Interaction Services." It is one of the few regulations worldwide that specifically targets human-like behavior by AI.

Core Requirements

The regulation boils down to three core requirements:

First, identity labeling. AI service platforms must inform users in a prominent manner that they are interacting with AI, and must not conceal or obscure AI identity. Chatbots that deliberately mimic human tone must reveal their identity before the conversation begins.

Second, behavioral boundaries. AI services must not use humanoid interaction to commit fraud, mislead, or otherwise harm user interests. "Talking like a human" is fine, but "pretending to be human to deceive" is not.

Third, accountability. Service providers bear management responsibility for their AI's humanoid interaction behavior. When things go wrong, "the AI said it on its own" is no longer an acceptable excuse.
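For teams building chat products, the first requirement (disclosing AI identity before the conversation begins) can be enforced in code rather than left to prompt wording. Below is a minimal sketch, not a compliance implementation: the class `DisclosedChatSession`, the constant `AI_DISCLOSURE`, and the toy `echo_bot` backend are all hypothetical names introduced for illustration, and the disclosure text is an assumption, since the exact wording the regulation requires is not covered here.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical disclosure text; the wording actually required is an assumption.
AI_DISCLOSURE = "Notice: you are chatting with an AI assistant, not a human."


@dataclass
class DisclosedChatSession:
    """Wraps a reply function so the AI disclosure always comes first."""

    generate_reply: Callable[[str], str]  # stand-in for any chatbot backend
    transcript: List[str] = field(default_factory=list)
    disclosed: bool = False

    def open(self) -> str:
        """Show the disclosure before any user message is processed."""
        if not self.disclosed:
            self.transcript.append(AI_DISCLOSURE)
            self.disclosed = True
        return AI_DISCLOSURE

    def send(self, user_message: str) -> str:
        """Refuse to answer until the disclosure has been shown."""
        if not self.disclosed:
            raise RuntimeError(
                "AI identity must be disclosed before the conversation begins"
            )
        reply = self.generate_reply(user_message)
        self.transcript.extend([user_message, reply])
        return reply


def echo_bot(message: str) -> str:
    # Toy backend used only for illustration.
    return f"You said: {message}"


session = DisclosedChatSession(generate_reply=echo_bot)
print(session.open())          # disclosure is shown first
print(session.send("Hello"))   # replies are allowed only afterwards
```

Raising an error when `send` is called before `open` makes the disclosure a hard precondition, so a missing label shows up as a bug during development instead of as a silent omission in production.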

Why Now

The timing isn't surprising. In the first half of 2026, AI chatbots, virtual companions, and AI digital human services have proliferated in China. Some products have blurred the line between "tool" and "companion" — cases where users couldn't tell if they were talking to a human or AI are not uncommon.

From a regulatory perspective, the risks of humanoid AI are not only technical but also social and psychological. When a user develops emotional dependence on an AI that can simulate human emotion without restriction, the issue goes well beyond UX.

Industry Impact

Short-term, the biggest impact falls on:

- AI virtual companions and emotional support products. Their core selling point is "humanoid interaction," and the new rules require them to label their AI identity clearly.

- AI customer service systems. Many corporate AI chatbots are already nearly indistinguishable from human agents and will need clear AI identification.

- Digital humans and virtual hosts. While virtual hosts usually don't "hide their identity," interactive segments with potential for user deception will need adjustments.

Long-term, this regulation may push the industry toward a new balance between "human-likeness" and "transparency": the aim is not to ban AI from being human-like, but to require that it be human-like in the open.

International Comparison

The EU AI Act also has transparency obligations, but focuses more on risk classification. China's approach is notable for being a specialized regulation directly targeting "humanoid interaction" as a specific behavior. Globally, this kind of targeted AI regulation is still rare.

Main sources:

- Joint announcement from five departments

- Sohu Tech analysis