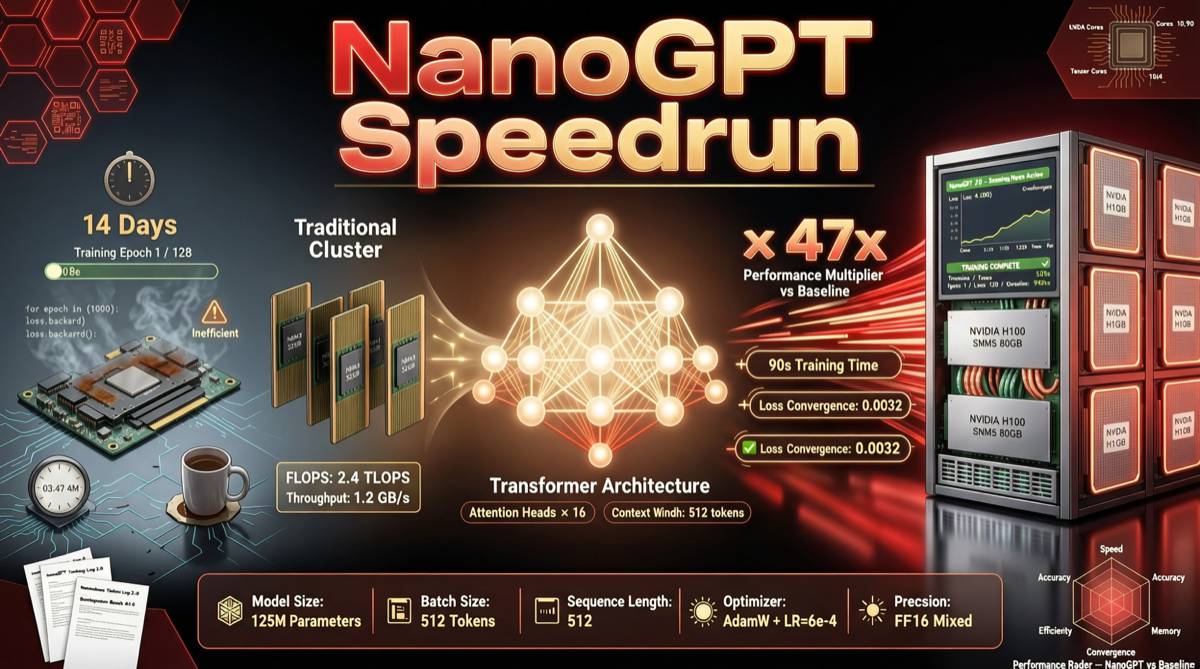

Andrej Karpathy’s llm.c trained a 124M parameter GPT-2 model on 8 H100 GPUs in 45 minutes, consuming 10 billion tokens.

Modded-NanoGPT (github.com/KellerJordan/modded-nanogpt) compresses this to 90 seconds using under 400M tokens: a 30x speedup and a 25x improvement in token efficiency. The result comes not from a big company but from a collaborative open-source speedrun by dozens of researchers around the world.
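The two ratios follow directly from the figures quoted above. A quick check, treating "under 400M tokens" as an upper bound of exactly 400M (so the token ratio is a lower bound):

```python
# Figures quoted in the text: llm.c baseline vs. the speedrun.
baseline_seconds = 45 * 60         # 45 minutes
baseline_tokens = 10_000_000_000   # 10 billion tokens
speedrun_seconds = 90
speedrun_tokens = 400_000_000      # assumed upper bound ("under 400M")

speedup = baseline_seconds / speedrun_seconds
token_ratio = baseline_tokens / speedrun_tokens

print(f"wall-clock speedup: {speedup:.0f}x")
print(f"token efficiency: at least {token_ratio:.0f}x")
```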

What It Did

The project is a collaborative challenge: train a 124M parameter model to a validation loss of 3.28 on FineWeb, as fast as possible on 8 H100s. The leap from 45 minutes to 90 seconds comes from stacking dozens of optimizations to the architecture and training algorithm, including:

- Rotary embeddings, QK-Norm, ReLU²

- Muon optimizer

- FP8 matmul for the head layer

- Flash Attention 3 with long-short sliding window

- Skip connections from embedding to every block

- Gradient accumulation, batch size scheduling, and more
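Two of the items above are simple enough to sketch in a few lines. The following is a minimal pure-Python illustration of ReLU² and QK-Norm, not the repository's code: the function names are hypothetical, and the choice of RMS normalization without a learned gain and the 1/sqrt(d) scale are assumptions made for the example.

```python
import math

def relu_squared(x: float) -> float:
    """ReLU² activation: clamp negatives to zero, then square."""
    return max(0.0, x) ** 2

def rms_norm(vec: list[float], eps: float = 1e-6) -> list[float]:
    """Rescale a vector to unit root-mean-square (assumed: no learned gain)."""
    rms = math.sqrt(sum(v * v for v in vec) / len(vec) + eps)
    return [v / rms for v in vec]

def qk_norm_score(q: list[float], k: list[float]) -> float:
    """Attention logit with QK-Norm: normalize q and k, then take the dot product."""
    qn, kn = rms_norm(q), rms_norm(k)
    dot = sum(a * b for a, b in zip(qn, kn))
    return dot / math.sqrt(len(q))  # assumed 1/sqrt(d) scaling

q = [1.0, 2.0, 3.0, 4.0]
k = [4.0, 3.0, 2.0, 1.0]
score = qk_norm_score(q, k)
scaled_score = qk_norm_score([1000.0 * v for v in q], k)
```

Because the query and key are normalized before the dot product, multiplying `q` by 1000 leaves the logit essentially unchanged, and its magnitude cannot exceed sqrt(d). Keeping attention logits bounded in this way is the usual motivation for QK-Norm.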

Why It Matters

This isn’t a production framework but a playground for training algorithms. It shows that:

- Small models can be trained efficiently: algorithmic optimization has huge returns

- Open collaboration works: contributors come from around the world

- Reproducible benchmarks matter: the target is clear, and anyone can verify a result

Quick Start

git clone https://github.com/KellerJordan/modded-nanogpt.git && cd modded-nanogpt

pip install -r requirements.txt

python data/cached_fineweb10B.py 9

./run.sh

Official validation of records is done on 8 H100 GPUs (sponsored by PrimeIntellect). The first run incurs roughly 7 minutes of torch.compile latency.