SenseNova U1: From “Plugin Assembly” to “Native Unity”

On April 29, 2026, SenseTime officially released its next-generation flagship model, SenseNova U1, positioned as a “native unified understanding-generation model.” The release marks a shift among Chinese large models from “plugin-style AI” toward a “native unified architecture.”

What Is a Unified Model?

Previous multimodal AI systems essentially “stitched” together separate visual encoders, language models, and image generators. The system first understands the input, then calls a different tool to generate the output, losing information and adding latency at each handoff.

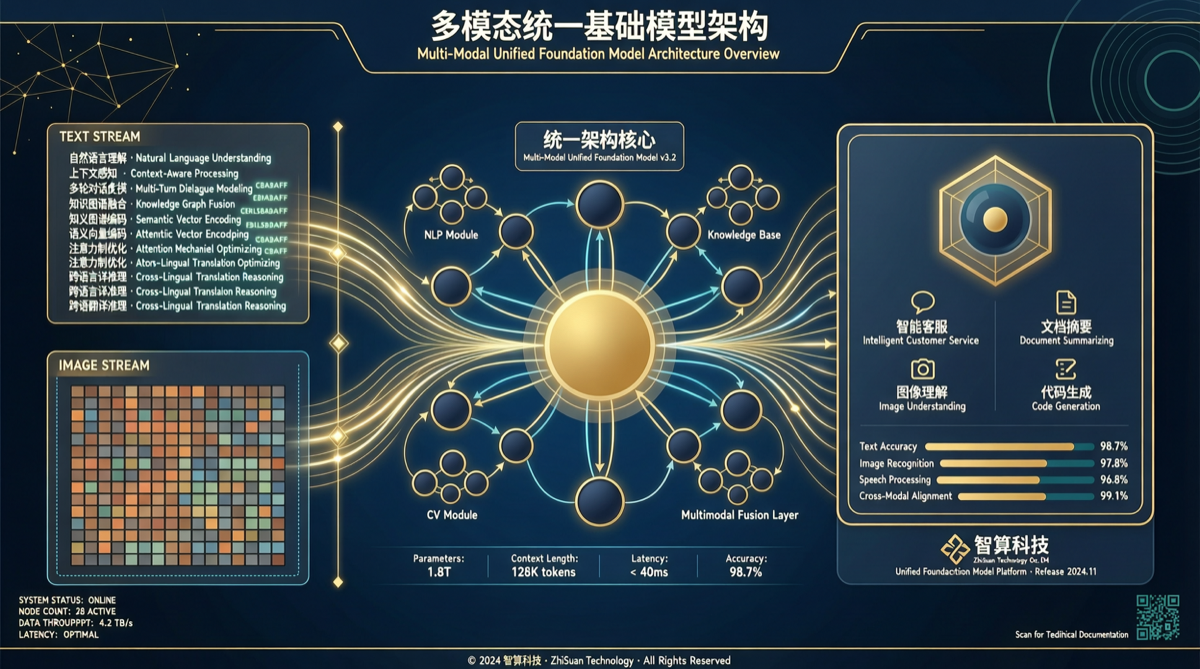

SenseNova U1’s core breakthrough lies in shared underlying representations for both understanding and generation:

- Unified representation space: Text, images, and video are encoded and decoded in the same semantic space

- End-to-end training: No longer requires separate visual encoders or image decoders—one model handles everything

- Native multimodality: Understanding images and generating images are not two separate processes but different output modes of the same model
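The contrast between the stitched approach and the unified one can be sketched as a toy data flow. This is only an illustration under loud assumptions: `stitched_pipeline`, `UnifiedToyModel`, `Trace`, and the string-based "features" are hypothetical stand-ins invented here, not SenseNova U1's actual interface or internals.

```python
from dataclasses import dataclass, field
from typing import List

# Toy stand-ins only. None of these names are SenseNova U1 APIs.


@dataclass
class Trace:
    """Records which components ran, so the two designs can be compared."""
    calls: List[str] = field(default_factory=list)


def stitched_pipeline(image: str, instruction: str, trace: Trace) -> str:
    # Stage 1: a separate vision encoder turns the image into features.
    trace.calls.append("vision_encoder")
    features = f"feat[{image}]"
    # Stage 2: a language model reads the features and writes a text plan.
    trace.calls.append("language_model")
    plan = f"plan[{features} | {instruction}]"
    # Stage 3: an image generator sees only the text plan, not the image.
    trace.calls.append("image_generator")
    return f"image[{plan}]"


class UnifiedToyModel:
    """One model; understanding and generation differ only in output mode."""

    def run(self, image: str, instruction: str, output_mode: str, trace: Trace) -> str:
        if output_mode not in ("text", "image"):
            raise ValueError(f"unknown output mode: {output_mode}")
        trace.calls.append("unified_model")
        # Both inputs live in one shared context (a plain string here, for brevity).
        context = f"ctx[{image} | {instruction}]"
        return f"{output_mode}[{context}]"


stitched_trace, unified_trace = Trace(), Trace()
stitched_out = stitched_pipeline("cat.png", "add a hat", stitched_trace)

model = UnifiedToyModel()
caption = model.run("cat.png", "describe", "text", unified_trace)
edited = model.run("cat.png", "add a hat", "image", unified_trace)

print(stitched_trace.calls)  # three different components, two handoffs
print(unified_trace.calls)   # the same component, called once per request
```

In the stitched version the final stage only ever sees the intermediate plan, which is where information can get lost. In the unified version, asking for text or for an image is the same call with a different `output_mode`.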

Open Source at SOTA

SenseTime chose to open-source U1, and the open-source release itself achieves state-of-the-art (SOTA) performance. This is unusual among Chinese large models: most earlier open-source versions were “lite” or “distilled” editions that lagged behind their closed-source flagships.

The open-source strategy sends a clear signal: SenseTime believes ecosystem impact outweighs closed-source moats.

Why Architectural Unity Matters

For developers and enterprise users, the practical benefits of a unified architecture are direct:

- Lower latency: No need to switch between multiple models, reducing context transfer overhead

- Improved consistency: Understanding and generation use the same representation, avoiding the “understood correctly but generated off-target” problem

- Simplified deployment: Only one model service to maintain, not an orchestration system of multiple models
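The latency point can be made concrete with a little arithmetic. The sketch below assumes a deliberately simple model in which end-to-end latency is the sum of per-stage compute plus a fixed handoff cost between adjacent stages. The function name `end_to_end_latency_ms` and every number are hypothetical placeholders chosen to make the arithmetic visible, not measurements of any real system.

```python
from typing import Sequence


def end_to_end_latency_ms(stage_ms: Sequence[float], handoff_ms: float) -> float:
    """Per-stage compute plus one handoff cost between each pair of adjacent stages."""
    if not stage_ms:
        raise ValueError("need at least one stage")
    return sum(stage_ms) + handoff_ms * (len(stage_ms) - 1)


# Placeholder numbers only; they are not benchmarks.
# Stitched: encoder, language model, image generator, with two handoffs.
stitched = end_to_end_latency_ms([40.0, 120.0, 300.0], handoff_ms=15.0)
# Unified: the same total compute in a single stage, so no handoffs.
unified = end_to_end_latency_ms([460.0], handoff_ms=15.0)

print(stitched, unified, stitched - unified)
```

Under this model, with total compute held equal, the entire gap comes from the handoff term. Whether a unified model is faster in practice also depends on how its compute compares with the sum of the separate stages.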

Positioning Against Competing Models

Within the current Chinese large model landscape, SenseNova U1 occupies a distinct position:

| Model | Core Positioning | Open Source Status |
|---|---|---|
| GLM-5.1 | All-around assistant | Partially open |
| Kimi K2.6 | Coding / long context | Open source |
| DeepSeek V4 | Reasoning / cost-effectiveness | Open source |
| Qwen 3.6 | All-scenario | Partially open |
| SenseNova U1 | Understanding-generation unity | Fully open |
| MiMo-V2.5 | Code / multimodal Agent | Open source |

SenseNova U1 is not adding more capabilities in pursuit of an “all-capable model.” It is subtracting components through a “unified architecture,” replacing multi-model orchestration with a single model.

Industry Implications

The release of SenseNova U1 carries several noteworthy signals:

- Architectural innovation: Competition is shifting from “model capability” to “architectural paradigm”—unified understanding and generation could be the next technical watershed

- Open source at SOTA: Demonstrates SenseTime’s confidence in its technical capabilities and accelerates the evolution of the Chinese open-source ecosystem

- Full platform coverage: Already available on Mac, Windows, iOS, Android, and HarmonyOS, with batch access granted through an application process

Conclusion

Late April 2026 has seen a wave of Chinese large model releases: GLM-5.1, Kimi K2.6, DeepSeek V4, and Qwen 3.6, each with different focus areas. SenseNova U1 brings a different technical route—not competing on who has more parameters or higher benchmarks, but on whose architecture is more unified and elegant.

This may signal that large model competition has entered “deep waters”: marginal returns from parameters and benchmarks are diminishing, while architectural innovation is becoming the new competitive frontier.