Key Conclusion

ModelBest’s MiMo V2.5 Pro, a 1T-parameter MoE model with a context window of up to 1M tokens, scores 54 on the Intelligence Index. That ties it with Moonshot’s Kimi K2.6 for the top spot among Chinese open-weights models. For global comparison, OpenAI’s GPT-5.5 scores 60, and the Gemini and Claude series score 57.

This is not a “more parameters = better” story. It signals that Chinese models are advancing on two fronts at once: MoE architecture efficiency and ultra-long context.

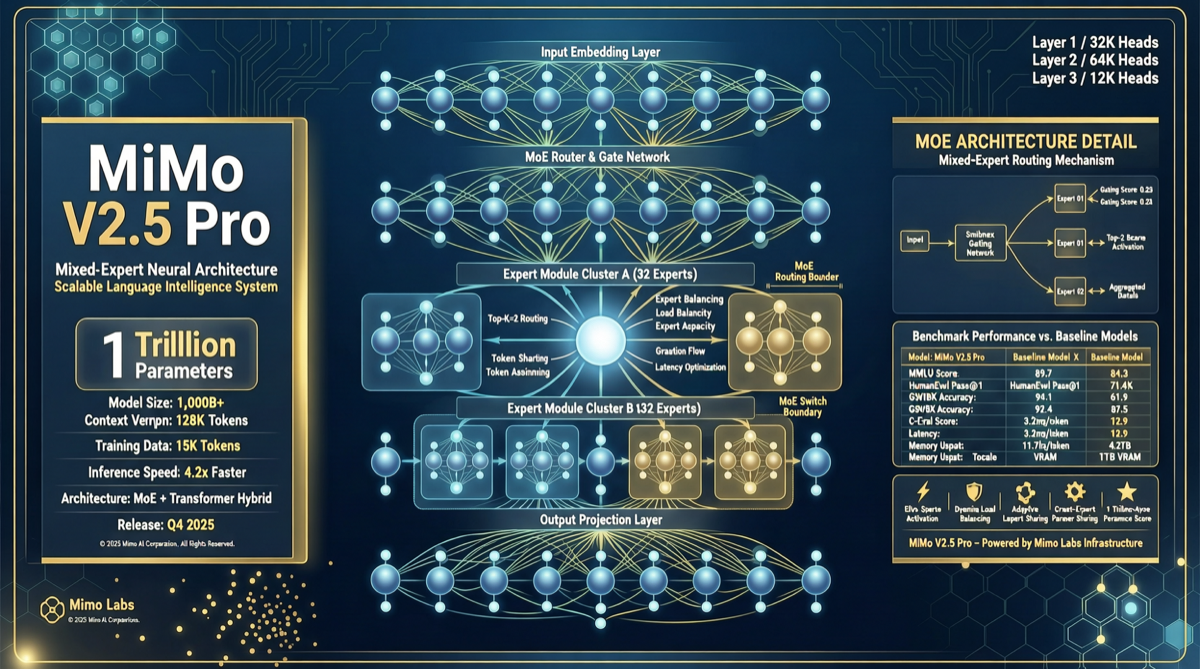

MiMo V2.5 Pro Key Data

| Dimension | MiMo V2.5 Pro | Kimi K2.6 | DeepSeek V4 Pro | GPT-5.5 |
|---|---|---|---|---|
| Intelligence Index | 54 | 54 | 52 | 60 |
| Total Parameters | 1T (MoE) | 1T (MoE) | 1.6T (MoE) | Unknown (closed) |
| Active Parameters | ~54B | Unknown | 49B | Unknown |
| Max Context | 1M tokens | 1M tokens | 1M tokens | Unknown |
| Open Status | Open Weights | Open Weights | Open Weights | Closed |
| Vendor | ModelBest | Moonshot | DeepSeek | OpenAI |

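Key signal: MiMo V2.5 Pro’s Intelligence Index score ties Kimi K2.6 and beats DeepSeek V4 Pro (52). Compared with DeepSeek V4 Pro, it uses fewer total parameters (1T vs 1.6T) but slightly more active parameters (~54B vs 49B). The higher score therefore points to better efficiency per total parameter, not per active parameter. That may reflect architectural improvements, though one benchmark score alone cannot confirm a breakthrough.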
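A crude way to see which kind of efficiency the table supports is to divide each score by total and by active parameter counts. This is only a rough sketch. The Intelligence Index is not a ratio scale, so these quotients indicate direction, not magnitude. The sketch also treats MiMo’s “~54B” active parameters as exactly 54B.

```python
# Figures copied from the comparison table; "~54B" active is treated as 54B.
models = {
    "MiMo V2.5 Pro": {"score": 54, "total_b": 1000, "active_b": 54},
    "DeepSeek V4 Pro": {"score": 52, "total_b": 1600, "active_b": 49},
}

def efficiency(m: dict) -> tuple[float, float]:
    """Return (index points per 100B total params, index points per 10B active params)."""
    per_total = m["score"] / m["total_b"] * 100
    per_active = m["score"] / m["active_b"] * 10
    return per_total, per_active

for name, m in models.items():
    per_total, per_active = efficiency(m)
    print(f"{name}: {per_total:.2f} pts/100B total, {per_active:.2f} pts/10B active")
```

On these figures MiMo V2.5 Pro earns more points per total parameter (5.40 vs 3.25). DeepSeek V4 Pro earns slightly more per active parameter (about 10.61 vs 10.00).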

ModelBest’s Strategic Path

ModelBest has taken a different path from Baidu, Alibaba, and ByteDance in the Chinese model landscape:

- Not competing on raw parameter count: with 1T total parameters versus DeepSeek V4 Pro’s 1.6T, it matches Kimi K2.6 and outscores DeepSeek V4 Pro on the Intelligence Index

- Pushing context windows: 1M token context directly competes with Kimi K2.6 and DeepSeek V4 Pro for long document analysis and codebase understanding

- Open-weights strategy: it trails closed-source GPT-5.5 (60) by only 6 points, and open weights let the community self-host and fine-tune it on their own infrastructure

Why This Ranking Matters

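- MoE efficiency race escalates: DeepSeek V4 Pro scores 52 with 1.6T total / 49B active parameters, while MiMo scores 54 with 1T total / ~54B active. MiMo gets more out of its total parameters even though it activates slightly more per token. The new competitive frontier is therefore how efficiently total and active parameters are used, not raw scale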

- 1M context is no longer Kimi-exclusive: Both MiMo V2.5 Pro and DeepSeek V4 Pro push context windows to 1M tokens, leveling the playing field for long-text processing

- Open vs closed-source gap narrows to 6 points: MiMo scores 54 against GPT-5.5’s 60. Where absolute peak performance is not required, open-weights options look increasingly attractive on cost-benefit
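To make the total-versus-active distinction concrete, here is a toy top-k Mixture-of-Experts router. It is a generic textbook sketch: a softmax over the k highest router scores. It is not the routing scheme of MiMo, Kimi, or DeepSeek, whose internals are not described here. The expert count, top-k value, and parameter sizes are hypothetical numbers chosen for illustration.

```python
import math
import random

def top_k_route(logits: list[float], k: int) -> dict[int, float]:
    """Select the k highest-scoring experts and softmax-normalise their gate weights."""
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in chosen)  # subtract max for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in chosen}
    z = sum(exps.values())
    return {i: e / z for i, e in exps.items()}

def active_params(shared: float, per_expert: float, k: int) -> float:
    """Parameters touched per token: always-on (shared) weights plus k routed experts."""
    return shared + k * per_expert

# Hypothetical toy configuration, NOT the layout of any model in the table.
NUM_EXPERTS, TOP_K = 64, 4
SHARED, PER_EXPERT = 10.0, 2.0  # billions of parameters

random.seed(0)
router_logits = [random.gauss(0, 1) for _ in range(NUM_EXPERTS)]
gates = top_k_route(router_logits, TOP_K)

total = SHARED + NUM_EXPERTS * PER_EXPERT
active = active_params(SHARED, PER_EXPERT, TOP_K)
print(f"experts used for this token: {sorted(gates)}")
print(f"total {total:.0f}B, active {active:.0f}B ({active / total:.1%})")
```

Each token pays compute only for the shared weights plus its k chosen experts. However, a different token may pick different experts, so every expert must stay available. This is why “active parameters” governs speed while “total parameters” governs memory.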

Action Recommendations

- Long document analysis: MiMo V2.5 Pro’s 1M-token context suits legal and financial documents running to hundreds of pages, competing directly with Kimi K2.6

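- Local deployment: the ~54B active parameters set per-token compute, but every expert must still be held in memory. Even at Q4 (about 4 bits per weight), the full 1T weights need roughly 500 GB. A 48GB setup (dual RTX 4090 or an A6000) is therefore not enough on its own. Realistic local deployment means a multi-GPU server, or keeping most experts in several hundred GB of system RAM and accepting slower inference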

- Choosing against DeepSeek V4 Pro: if API cost matters most, DeepSeek V4 Pro’s limited-time 2.5x discount makes it the stronger option for now. If you need an open-source ecosystem and fine-tuning, MiMo V2.5 Pro deserves testing

- Watch ModelBest’s next moves: because MiMo V2.5 Pro has open weights, community fine-tuned versions may surpass the original’s scores in specific domains such as legal and medical
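A back-of-the-envelope memory check for local deployment follows. For an MoE model, all experts must be reachable (in VRAM or offloaded memory), so weight storage scales with total parameters, not active ones. The sketch below assumes an idealised 4 bits per weight. Real Q4 formats store extra scale metadata, so treat the result as a lower bound. It also ignores the KV cache, activations, and runtime overhead.

```python
# Rough weight-storage estimate; ignores KV cache, activations and runtime overhead.
def weight_memory_gb(params_billion: float, bits_per_param: float) -> float:
    """GB (1e9 bytes) to store the weights: 1e9 params * bits / 8 bits-per-byte / 1e9."""
    return params_billion * bits_per_param / 8

VRAM_GB = 48  # two RTX 4090s (24 GB each) or one RTX A6000
BITS = 4.0    # idealised Q4; real Q4 formats add scale metadata, so this is a lower bound

for label, params_b in [("all experts (1T total)", 1000), ("active slice only (~54B)", 54)]:
    need = weight_memory_gb(params_b, BITS)
    print(f"{label}: ~{need:.0f} GB, fits in {VRAM_GB} GB: {need <= VRAM_GB}")
```

The active slice alone (~27 GB) would fit in 48 GB. The router, however, can select any expert for the next token, so the full ~500 GB of weights must be accessible.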

MiMo V2.5 Pro’s result suggests that Chinese model competition has moved beyond “whose parameters are bigger” to “whose architecture is more efficient.” The next key variable is who will be first to demonstrate a practical advantage for MoE in agent scenarios (multi-step reasoning plus tool calling).