Core Data

| Metric | Kimi K2.5 | Comparison |

|---|---|---|

| Total Parameters | 1 trillion | GPT-5.5 est. ~10T |

| Active Parameters | 32 billion | Only 3.2% active |

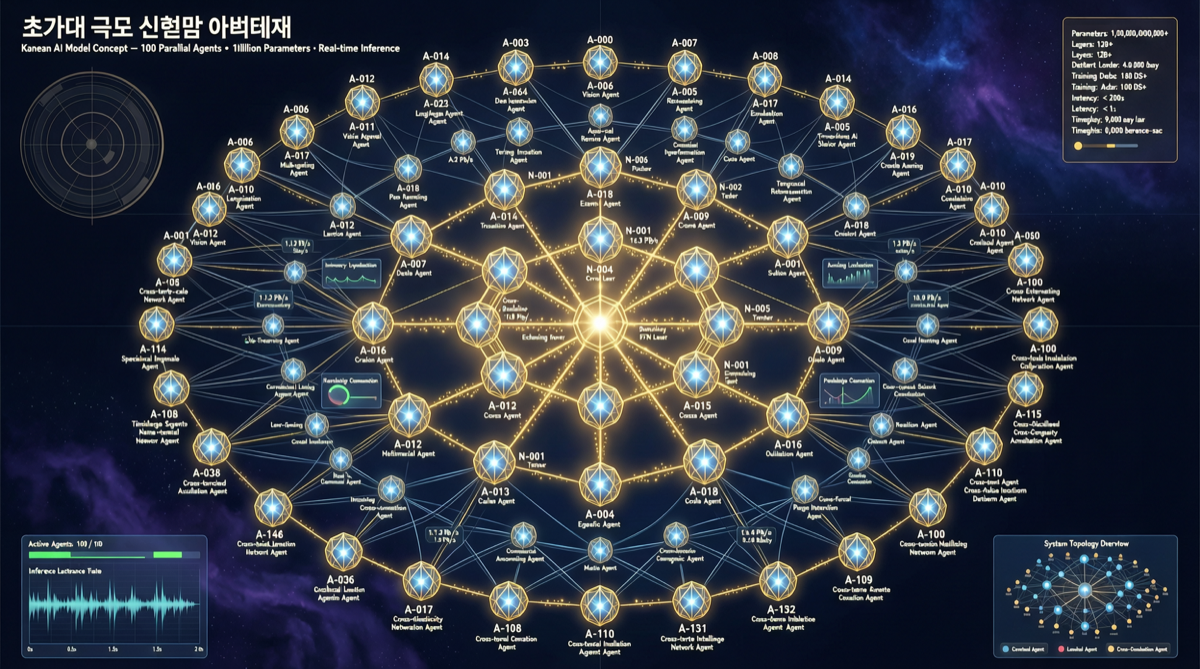

| Sub-Agent Coordination | Up to 100 parallel | Industry typical 5-10 |

| Modalities | Text + Image + Video native | Comparable to GPT-5.5 |

| Open-Source | Weights open | Like LLaMA series |

| Inference Cost | 1/7 of Claude | Extremely cost-effective |
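The 3.2% figure in the table follows directly from the two parameter counts. The short sketch below reproduces it and adds a rough estimate of how much storage the full set of weights would need. The bytes-per-parameter values are illustrative assumptions about numeric precision, not published details of how K2.5 is stored or served.

```python
# Parameter counts taken from the table above.
TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion
ACTIVE_PARAMS = 32_000_000_000     # 32 billion


def active_fraction(active: int, total: int) -> float:
    """Share of all parameters that take part in computing one token."""
    return active / total


def weight_bytes(params: int, bytes_per_param: float) -> float:
    """Raw weight storage only; ignores activations, caches, and overhead."""
    return params * bytes_per_param


if __name__ == "__main__":
    print(f"Active share: {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.1%}")
    # Assumed precisions, for illustration only.
    for label, width in (("16-bit", 2), ("8-bit", 1), ("4-bit", 0.5)):
        terabytes = weight_bytes(TOTAL_PARAMS, width) / 1e12
        print(f"{label} weights: about {terabytes:.1f} TB")
```

Whatever precision is actually used, the storage cost scales with the total parameter count while the per-token compute scales with the active count.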

MoE Architecture Significance

MoE (Mixture of Experts) isn’t new, but achieving 1 trillion total parameters while keeping active parameters at 32 billion requires:

- Efficient routing: Each token activates only the most relevant experts

- Expert load balancing: Preventing some experts from being overloaded

- Inference memory management: All 1 trillion parameters must be held in memory, but only about 32 billion take part in computing each token
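The first two requirements can be illustrated with a generic top-k router. This is a minimal sketch of the standard MoE gating technique, not K2.5's actual router: the expert count, the value of `k`, and the random logits are toy assumptions, and `load_imbalance` is a hypothetical helper for inspecting how evenly tokens are spread.

```python
import math
import random
from collections import Counter


def softmax(scores):
    """Numerically stable softmax over a list of router logits."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts for one token.

    Returns (expert_index, weight) pairs, with weights renormalised
    so that they sum to 1 across the selected experts.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def expert_load(assignments, num_experts):
    """Count how many tokens were routed to each expert."""
    counts = Counter(i for token in assignments for i, _ in token)
    return [counts.get(i, 0) for i in range(num_experts)]


def load_imbalance(load):
    """Max load divided by mean load; 1.0 means perfectly balanced."""
    mean = sum(load) / len(load)
    return max(load) / mean if mean else 0.0


if __name__ == "__main__":
    rng = random.Random(0)
    NUM_EXPERTS, TOP_K, NUM_TOKENS = 8, 2, 1000  # toy sizes, not K2.5's
    assignments = [
        route_top_k([rng.gauss(0, 1) for _ in range(NUM_EXPERTS)], TOP_K)
        for _ in range(NUM_TOKENS)
    ]
    load = expert_load(assignments, NUM_EXPERTS)
    print("tokens per expert:", load)
    print("imbalance (max/mean):", round(load_imbalance(load), 2))
```

In training, production MoE systems typically add a balancing term or capacity limit so that the imbalance ratio stays close to 1; this sketch only measures the ratio.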

100 Parallel Sub-Agents: What Does This Mean?

Kimi K2.5 can coordinate up to 100 AI sub-agents in parallel within a single request. This isn’t simple “batch calling” issued by the caller: the parallel inference is handled internally, inside that one request.

Example scenario: analyzing a 500-page financial report. K2.5 dispatches up to 100 sub-agents simultaneously for data extraction, industry comparison, risk identification, and more. All of them run in parallel, and the routing layer then integrates their results.
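The fan-out and fan-in shape of that scenario can be sketched in client-side code. This is only an analogy for the pattern: K2.5 is described as doing this inside the model, and nothing below is its API. `run_sub_agent`, `fan_out`, and `integrate` are hypothetical names, the sub-agent is a stub that returns a placeholder string, and the 20-page chunking is an assumption.

```python
import asyncio

MAX_PARALLEL = 100  # upper bound on concurrent sub-agents, per the article


async def run_sub_agent(task: str, section: str) -> dict:
    """Hypothetical stand-in for one sub-agent working on one chunk."""
    await asyncio.sleep(0)  # yield control, as a real model call would
    return {"task": task, "section": section,
            "finding": f"{task} result for {section}"}


async def fan_out(tasks, sections, limit=MAX_PARALLEL):
    """Run every (task, section) pair, at most `limit` at a time."""
    gate = asyncio.Semaphore(limit)

    async def guarded(task, section):
        async with gate:
            return await run_sub_agent(task, section)

    jobs = [guarded(t, s) for t in tasks for s in sections]
    # gather returns results in the same order the jobs were listed.
    return await asyncio.gather(*jobs)


def integrate(results):
    """Fan-in step: group findings by task type."""
    merged = {}
    for r in results:
        merged.setdefault(r["task"], []).append(r["finding"])
    return merged


if __name__ == "__main__":
    tasks = ["data extraction", "industry comparison", "risk identification"]
    # 500 pages split into 25 chunks of 20 pages (chunk size is assumed).
    sections = [f"pages {start}-{start + 19}" for start in range(1, 501, 20)]
    results = asyncio.run(fan_out(tasks, sections))
    report = integrate(results)
    print({task: len(findings) for task, findings in report.items()})
```

With an external framework, the developer writes and maintains this orchestration layer; the claim for K2.5 is that the equivalent coordination happens without it.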

Comparison with Existing Solutions

| Approach | Agents | External Framework Needed | Cost |
|---|---|---|---|
| LangChain + GPT-4 | 5-10 | Yes | High |
| CrewAI + Claude | 5-20 | Yes | Medium-high |
| Kimi K2.5 Built-in | 100 | No | Low |

Key advantage: Multi-agent capability is built into the model, eliminating external orchestration complexity.

Open-Source Significance

Kimi K2.5 is released with open weights. Against the backdrop of Meta Muse Spark going closed-source and Anthropic locking its models, this open strategy stands out.

Landscape Assessment

Kimi K2.5 represents a trend: models are evolving from “single-thread inference engines” to “multi-agent coordination systems.”

If this trend continues, traditional agent frameworks (LangChain, CrewAI) may gradually be replaced by multi-agent capabilities built into the models themselves.

Action Recommendations

- Developers: Try the Kimi K2.5 API for multi-step parallel inference scenarios

- Enterprises: Evaluate migrating LangChain/CrewAI workloads to Kimi K2.5’s built-in multi-agent capability

- Researchers: Study Kimi K2.5’s MoE routing mechanism using the open weights