A feature announcement from Google at Cloud Next has ended a debate most enterprises didn’t even realize they were participating in: what protocol should AI agents use to connect to external tools?

What Happened

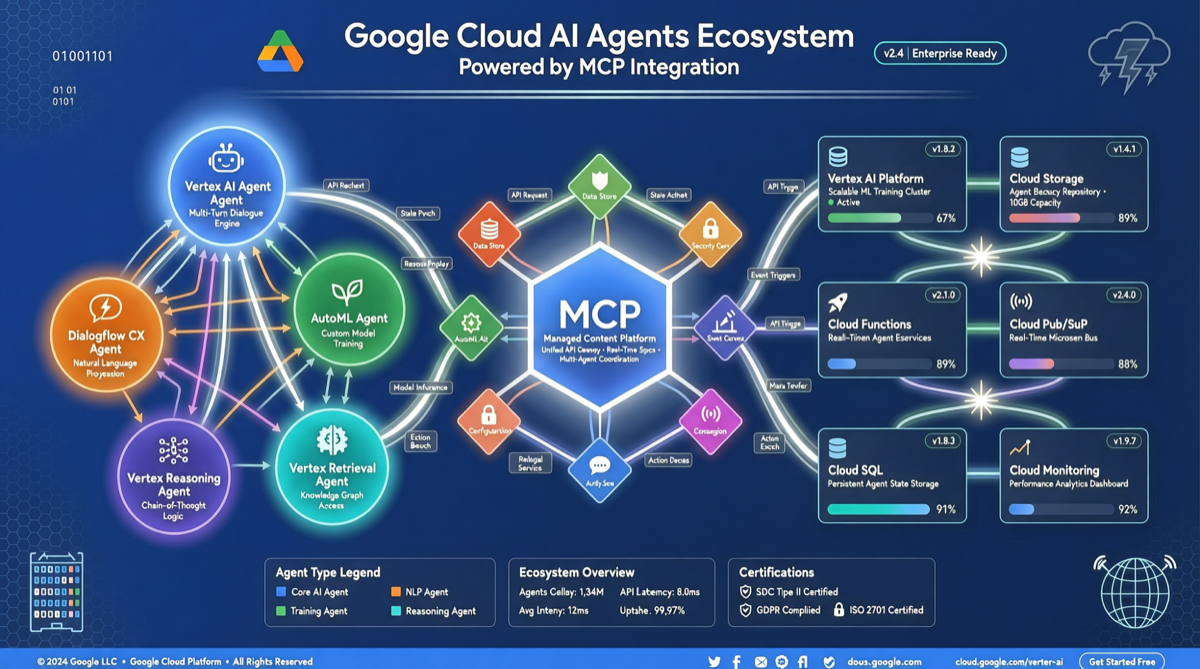

Google announced that its Cloud platform now supports “Bring Your Own MCP” (BYO MCP), allowing enterprises to connect their self-deployed MCP Servers directly to Google’s AI models and services. This means:

- Gemini models can call internal databases, APIs, and business systems through MCP

- Enterprises no longer need to develop separate adapters for Google AI; existing MCP Servers can be reused directly

- Google has moved from supporting only its own protocol to embracing an industry standard

Background: Three Protocols Six Months Ago

Half a year ago, the AI agent tool integration space had three major competitors:

| Protocol | Origin | Positioning |
|---|---|---|
| MCP | Anthropic | Open agent tool protocol |
| Function Calling | OpenAI | Dedicated function calling mechanism for GPT models |
| Vendor-specific protocols | Various AI platforms | Used within closed ecosystems |

The situation then: if you used Anthropic’s models, you went with MCP; if you used OpenAI’s models, you used Function Calling; other platforms had their own approaches. Enterprises using multiple model providers had to maintain multiple sets of tool integration code.

Google’s BYO MCP announcement is essentially an endorsement of MCP as the industry standard.

What It Means for Enterprises

1. Reduced Duplicate Development

No more writing separate tool integration code for each AI model provider. One MCP Server can serve Claude, Gemini, and any model that supports MCP.
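To make the reuse concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool `name` and its `arguments`. The Python sketch below builds such a request. The tool name `query_orders`, its arguments, and the helper `build_tool_call` are hypothetical and chosen for illustration; they are not part of any SDK.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool exposed by an internal MCP Server.
request = build_tool_call(1, "query_orders", {"customer_id": "C-1001"})
wire_message = json.dumps(request)
print(wire_message)
```

Whichever MCP-capable client sends this message, the server sees the same shape, which is why the integration code does not have to be rewritten per provider.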

2. Preserved Vendor Choice

When the tool integration layer is standardized, the cost of switching model providers drops significantly. Use Gemini + your MCP today, switch to Claude + the same MCP tomorrow, without changing integration code.

3. Lowered Barrier for AI-Enabling Internal Systems

Many enterprises have internal systems (ERP, HR, finance) that need AI agent access. BYO MCP means developing an MCP Server once for these systems, usable across multiple AI platforms.
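As a rough illustration of "develop once": an MCP Server describes each tool with a `name`, a `description`, and a JSON Schema `inputSchema`, and returns these descriptors when a client asks for `tools/list`. The sketch below shows a descriptor for a hypothetical HR lookup tool. The tool `lookup_employee`, its fields, and the `missing_arguments` helper are assumptions made for this example and do not describe any real system.

```python
# Hypothetical descriptor for an internal HR tool, in the shape MCP uses
# for entries in a `tools/list` result.
LOOKUP_EMPLOYEE_TOOL = {
    "name": "lookup_employee",
    "description": "Return basic profile data for one employee.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "employee_id": {"type": "string"},
            "include_manager": {"type": "boolean"},
        },
        "required": ["employee_id"],
    },
}


def missing_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return the required argument names that the caller did not supply."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


# A call without `employee_id` is incomplete; a call with it is not.
print(missing_arguments(LOOKUP_EMPLOYEE_TOOL, {"include_manager": True}))
print(missing_arguments(LOOKUP_EMPLOYEE_TOOL, {"employee_id": "E-42"}))
```

Because the descriptor is plain data, the same definition can be advertised to any client that speaks MCP, regardless of which model sits behind it.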

Getting Started

To try BYO MCP on Google Cloud:

```bash
# 1. Deploy your MCP Server (filesystem example)
npx @modelcontextprotocol/server-filesystem /allowed/path

# 2. Register the MCP Server in Google Cloud AI Platform
#    Configure via Google Cloud Console or API
#    Specify the MCP Server endpoint and authentication method

# 3. Call from Gemini applications
#    The model will discover and call your registered tools through MCP
```

Refer to Google Cloud’s official BYO MCP documentation for detailed configuration steps.

Points to Note

- Security boundaries: BYO MCP means enterprises manage their own MCP Server security policies. Google provides the integration framework, but tool call permission control is the user’s responsibility

- Performance overhead: MCP is a protocol layer that adds serialization/deserialization overhead compared to native API calls; benchmark latency-sensitive paths before adopting it

- Version compatibility: The MCP protocol is still evolving; track new specification versions and test existing Servers against them before upgrading
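Since permission control stays with the enterprise, one common pattern is to check every tool call against an allowlist before executing it. The sketch below is a minimal illustration of that idea and is not a feature of BYO MCP or of any SDK. The role names, tool names, and the helpers `is_call_allowed` and `handle_tool_call` are all hypothetical.

```python
# Hypothetical mapping from caller role to the tools that role may invoke.
ALLOWED_TOOLS = {
    "finance-agent": {"query_invoices", "export_report"},
    "hr-agent": {"lookup_employee"},
}


def is_call_allowed(role: str, tool_name: str) -> bool:
    """Deny by default: unknown roles and unlisted tools are rejected."""
    return tool_name in ALLOWED_TOOLS.get(role, set())


def handle_tool_call(role: str, tool_name: str) -> str:
    """Gate a tool call; a real server would run the tool where noted."""
    if not is_call_allowed(role, tool_name):
        return f"denied: {role} may not call {tool_name}"
    # Actual tool execution would happen here.
    return f"allowed: {role} -> {tool_name}"


print(handle_tool_call("hr-agent", "lookup_employee"))
print(handle_tool_call("hr-agent", "export_report"))
```

The deny-by-default lookup means a newly registered tool is unreachable until someone explicitly grants it to a role, which is usually the safer failure mode for internal systems.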

Landscape Assessment

With Google joining the MCP ecosystem and Anthropic’s continued push, MCP’s position as the AI agent tool integration standard looks solid. For enterprise AI teams, now is a good window to learn and deploy MCP: the standard is converging, while the tool ecosystem is still expanding rapidly.

What to watch next: whether OpenAI will officially embrace MCP, whether more SaaS vendors will release official MCP Servers, and MCP’s maturity in enterprise-grade security auditing.

Primary sources:

- Google Cloud Next official announcements

- Google Cloud AI Platform documentation

- MCP protocol official specification