Verdict

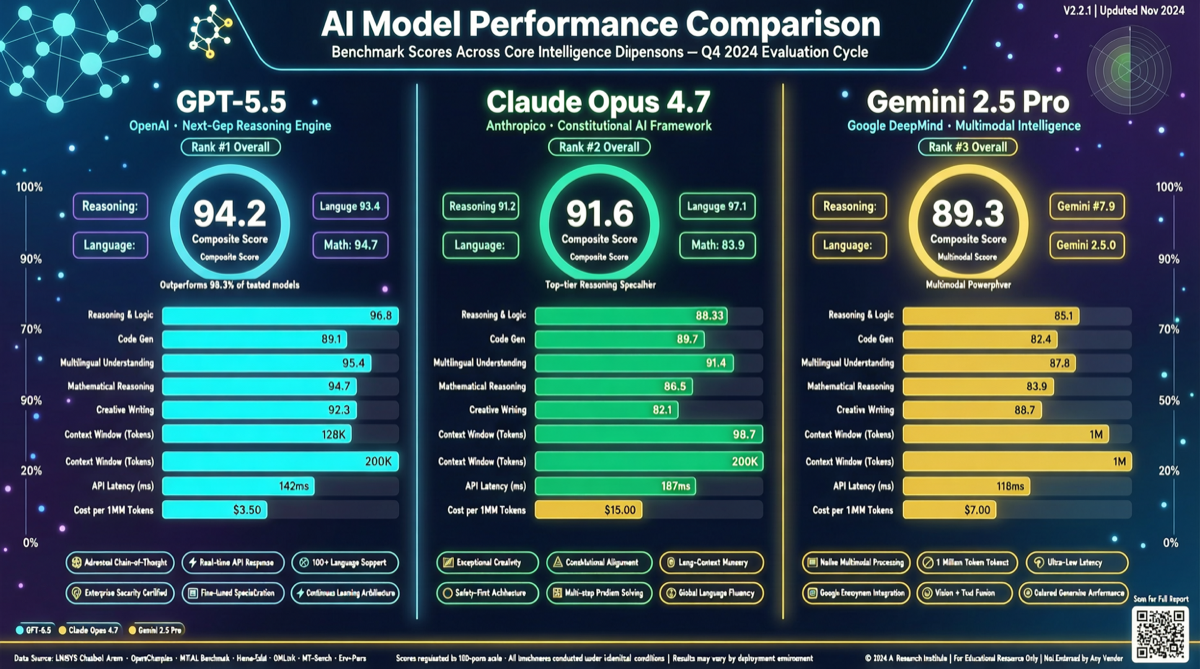

GPT-5.5 leads in agentic and command-line workflows, Claude Opus 4.7 keeps its edge in resolving real-world software engineering issues, and Gemini 2.5 Pro delivers near-frontier capabilities at dramatically lower API costs. There is no “best”, only “best for your task.”

Choose GPT-5.5 for end-to-end agent orchestration with the fewest retries; Claude Opus 4.7 for large-scale codebase refactoring where its SWE-bench Pro lead matters; Gemini 2.5 Pro for cost-sensitive batch tasks where its price advantage is overwhelming.

Test Dimensions

Coding Ability

On SWE-bench Pro (real GitHub issue resolution), Claude Opus 4.7 leads at 64.3%, with GPT-5.5 at 58.6%. However, OpenAI noted that some issues in Anthropic’s report may show signs of training data memorization. On Terminal-Bench 2.0 (complex command-line workflows), GPT-5.5 reaches 82.7%, significantly ahead of all competitors.

The key difference in practice is token efficiency. Running the full Artificial Analysis Intelligence Index costs $4,811 for Claude Opus 4.7 vs $3,357 for GPT-5.5. GPT-5.5 completes the same tasks with fewer tokens, which makes it about 30% cheaper on this benchmark run despite its higher output-token price.
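The 30% figure follows directly from the two index costs quoted above. A minimal sketch of the arithmetic; the function name `relative_saving` is only illustrative:

```python
# Full Artificial Analysis Intelligence Index run costs quoted in the text (USD).
INDEX_COST_USD = {"Claude Opus 4.7": 4811, "GPT-5.5": 3357}


def relative_saving(baseline: float, candidate: float) -> float:
    """Fraction of the baseline cost saved by running the candidate instead."""
    return (baseline - candidate) / baseline


saving = relative_saving(INDEX_COST_USD["Claude Opus 4.7"], INDEX_COST_USD["GPT-5.5"])
print(f"GPT-5.5 saving vs Claude Opus 4.7: {saving:.1%}")
```

The saving is measured on one benchmark suite, so it may not carry over unchanged to workloads with a different mix of input and output tokens.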

Reasoning & Math

On HLE (Humanity’s Last Exam), Claude Opus 4.7 scores 46.9% vs GPT-5.5’s 41.4%. Both approach perfect scores on the AIME 2025 math competition, with differences within statistical noise. For everyday reasoning (logical analysis, plan evaluation, multi-step derivation), the user experience gap between these two models is negligible.

Long Context

This is where GPT-5.5 pulls away. In MRCR @ 1M (critical information retrieval within 1M tokens), GPT-5.5 achieves 74% vs Claude Opus 4.7’s 32.2%. If you need the model to ingest an entire technical document, large codebase, or dataset and locate specific information, GPT-5.5’s advantage is decisive.

Speed & Latency

OpenAI claims GPT-5.5 matches GPT-5.4 latency while delivering “higher intelligence.” Community feedback: time-to-first-token is similar to GPT-5.4, but streaming output is faster. Claude Opus 4.7 lacks a “fast mode,” making it feel slower in iterative workflows. Gemini 2.5 Pro sits in the middle on latency — but at its price point, this is perfectly acceptable.

Real-World Cost

| Model | Input ($/MTok) | Output ($/MTok) | Full AA Index Cost |
|---|---|---|---|
| GPT-5.5 | $5 | $30 | $3,357 |
| Claude Opus 4.7 | $5 | $25 | $4,811 |
| Gemini 2.5 Pro | $1.25 | $10 | $861 |

GPT-5.5’s list price is the highest — output tokens cost 1.2x Opus 4.7 and 3x Gemini 2.5 Pro. But after correcting for token efficiency, GPT-5.5’s actual task cost sits between Opus 4.7 and Gemini 2.5 Pro. For everyday tasks that don’t require frontier-level intelligence, Gemini 2.5 Pro’s cost advantage is crushing.
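To make the gap between list price and task cost concrete, the sketch below recomputes the output-price ratios and the index-cost ordering from the table. The `request_cost` helper and the token counts passed to it are hypothetical, chosen only to show how per-token prices turn into a per-request bill.

```python
# List prices (USD per million tokens) and full-index run costs from the table.
PRICES = {
    "GPT-5.5": {"input": 5.00, "output": 30.00, "index_cost": 3357},
    "Claude Opus 4.7": {"input": 5.00, "output": 25.00, "index_cost": 4811},
    "Gemini 2.5 Pro": {"input": 1.25, "output": 10.00, "index_cost": 861},
}


def output_price_ratio(model: str, other: str) -> float:
    """How many times more expensive `model` output tokens are than `other`'s."""
    return PRICES[model]["output"] / PRICES[other]["output"]


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """List-price cost in USD of one request with the given token counts."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Models ordered from cheapest to most expensive full-index run.
by_index_cost = sorted(PRICES, key=lambda m: PRICES[m]["index_cost"])

print(output_price_ratio("GPT-5.5", "Claude Opus 4.7"))
print(output_price_ratio("GPT-5.5", "Gemini 2.5 Pro"))
print(by_index_cost)

# Hypothetical request: 10,000 input tokens and 2,000 output tokens.
for name in PRICES:
    print(name, round(request_cost(name, 10_000, 2_000), 4))
```

At identical token counts GPT-5.5 is the most expensive of the three. It only drops below Opus 4.7 when it finishes the same work with fewer tokens, which is the effect the index costs capture.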

Recommendations

Individual developers / students: Gemini 2.5 Pro. A fraction of the price with capabilities sufficient for most programming, writing, and analysis tasks.

Enterprise agentic workflows: GPT-5.5. Fewer retries, stronger long context, lower actual operating costs — advantages that scale with deployment size.

Large-scale codebase maintenance: Claude Opus 4.7. Its SWE-bench Pro lead isn’t accidental — it retains a subtle edge in understanding complex code dependencies and generating correct patches. Note GitHub Copilot’s multiplier pricing: Opus 4.7 is 3.6x, making actual usage costs significantly higher.

Hybrid strategy: Use GPT-5.5 for complex reasoning and critical code paths, and Gemini 2.5 Pro for simple batch tasks. Depending on how much work can go to the cheaper model, you can cut costs by 50%+.
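A rough way to sanity-check the hybrid saving is to blend the two index costs by the share of work sent to each model. This is a sketch under two assumptions that are not in the source: the baseline is running everything on GPT-5.5, and per-task cost scales like the full index cost. The helper names are illustrative.

```python
# Full-index run costs from the cost table (USD), used here as a cost proxy.
FRONTIER_COST = 3357  # GPT-5.5
CHEAP_COST = 861      # Gemini 2.5 Pro


def blended_cost(cheap_share: float) -> float:
    """Cost when `cheap_share` of the work goes to the cheaper model."""
    if not 0.0 <= cheap_share <= 1.0:
        raise ValueError("cheap_share must be between 0 and 1")
    return cheap_share * CHEAP_COST + (1.0 - cheap_share) * FRONTIER_COST


def saving_vs_frontier_only(cheap_share: float) -> float:
    """Fraction saved relative to running everything on the frontier model."""
    return 1.0 - blended_cost(cheap_share) / FRONTIER_COST


def share_needed_for(target_saving: float) -> float:
    """Share of work that must go to the cheaper model to hit the target saving."""
    return target_saving * FRONTIER_COST / (FRONTIER_COST - CHEAP_COST)


print(f"Share needed for a 50% saving: {share_needed_for(0.5):.1%}")
print(f"Saving with a 70% cheap share: {saving_vs_frontier_only(0.7):.1%}")
```

Under these assumptions, roughly two thirds of the workload has to be simple enough for Gemini 2.5 Pro before the saving reaches 50%.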